Snowflake has become a major force in data warehousing. Founded in 2012, it offers a cloud-based platform that separates storage from compute, allowing businesses to scale each dynamically based on their needs.

This blog aims to unpack the innovative architecture of Snowflake, which combines elements of both shared-disk and shared-nothing models to provide a flexible, scalable solution for modern data needs. We will explore how each layer of this architecture works and the unique advantages it brings to businesses. From its ability to handle vast amounts of data with ease to its cost-effective scalability options, understanding Snowflake’s architecture will reveal why it stands out as a leading platform in the data warehousing space.

Unveiling Snowflake’s Hybrid Architecture

Snowflake’s design combines two conventional models: the shared-disk and shared-nothing architectures. It draws on the strengths of each to create an efficient, scalable data warehousing solution.

Shared-Disk vs. Shared-Nothing Architectures

Shared-Disk Architecture: In this model, a central storage repository is accessed by all computing nodes. Data management is simplified because each node can read from or write to the same data source. However, there may be bottlenecks when many nodes try to use the central storage concurrently.

Shared-Nothing Architecture: In this highly scalable and fault-tolerant model, each node has its own CPU, memory, and disk storage. Because data is partitioned across nodes, they rarely contend for resources; however, partitioning requires more sophisticated strategies for distributing and processing queries.
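To make the shared-nothing idea concrete, here is a minimal Python sketch that is not tied to any particular database. It assumes a hypothetical three-node cluster and routes each row to a node by hashing its key, so every node aggregates only its own partition and a coordinator merges the partial results. The function names and sample rows are illustrative.

```python
import zlib
from collections import defaultdict

NUM_NODES = 3  # hypothetical cluster size, chosen for illustration


def node_for(key, num_nodes=NUM_NODES):
    """Pick the owning node with a stable hash (crc32 is deterministic across runs)."""
    return zlib.crc32(key.encode("utf-8")) % num_nodes


def partition(rows, num_nodes=NUM_NODES):
    """Distribute (key, amount) rows so each node holds only its own slice."""
    nodes = defaultdict(list)
    for key, amount in rows:
        nodes[node_for(key, num_nodes)].append((key, amount))
    return nodes


def local_totals(node_rows):
    """Work a single node does using only its private data."""
    totals = defaultdict(float)
    for key, amount in node_rows:
        totals[key] += amount
    return dict(totals)


def distributed_totals(rows, num_nodes=NUM_NODES):
    """Coordinator step: merge the per-node partial results."""
    merged = {}
    for node_rows in partition(rows, num_nodes).values():
        # A key always hashes to the same node, so keys never overlap across nodes.
        merged.update(local_totals(node_rows))
    return merged


rows = [("alice", 10.0), ("bob", 5.0), ("alice", 2.5), ("carol", 7.0)]
result = distributed_totals(rows)
```

The extra work this model demands is visible in `partition` and `distributed_totals`: rows must be routed before any node can compute, and results must be merged afterwards.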

Snowflake’s Hybrid Model

Snowflake fuses these two architectures into one system: it keeps a central data repository (as in shared-disk systems) but spreads query processing across multiple independent compute clusters (as in shared-nothing systems). Two layers make this possible:

Database Storage Layer

This base layer follows the shared-disk model: data lives in cloud object storage, which keeps storage flexible and cost-effective. Snowflake automatically splits the data into compressed micro-partitions that are optimized for performance.

Query Processing Layer (Virtual Warehouses)

Each virtual warehouse is a standalone compute cluster that follows the shared-nothing model. Warehouses do not share compute resources with one another, so the contention problems typical of shared-disk systems do not arise. They process queries in parallel, which allows high concurrency and large-scale processing without one workload affecting another.
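The hybrid layout can be pictured with a small toy model in Python. This is only a sketch under simplifying assumptions: `SharedStorage` and `VirtualWarehouse` are hypothetical classes, the warehouse names are made up, and real warehouses are clusters of machines rather than single objects. It shows one stored copy of the data being read by two independent compute objects, each with its own private cache.

```python
class SharedStorage:
    """Central repository: one copy of the data, visible to every warehouse."""

    def __init__(self):
        self._tables = {}

    def write(self, table, rows):
        self._tables[table] = tuple(rows)  # stored once, treated as immutable

    def read(self, table):
        return self._tables[table]


class VirtualWarehouse:
    """Independent compute object with its own private cache and counters."""

    def __init__(self, name, storage):
        self.name = name
        self.storage = storage
        self.cache = {}         # private to this warehouse
        self.storage_reads = 0  # how often this warehouse went to shared storage

    def total(self, table, column):
        if table not in self.cache:
            self.cache[table] = self.storage.read(table)
            self.storage_reads += 1
        return sum(row[column] for row in self.cache[table])


storage = SharedStorage()
storage.write("orders", [{"amount": 40}, {"amount": 60}])

etl_wh = VirtualWarehouse("etl_wh", storage)
reporting_wh = VirtualWarehouse("reporting_wh", storage)

etl_total = etl_wh.total("orders", "amount")
reporting_total = reporting_wh.total("orders", "amount")
reporting_wh.total("orders", "amount")  # second call is served from the private cache
```

Both warehouses see the same rows without a second copy being written, while their caches and counters stay separate, which is the combination the hybrid model aims for.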

Core Features of Snowflake Architecture

Snowflake’s architecture is renowned for its robust features that enhance scalability, enable efficient data sharing, and provide advanced data cloning capabilities. These features collectively contribute to operational efficiency and overall performance, making Snowflake a competitive choice for modern data warehousing needs.

1. Scalability

Elastic Scalability: One of Snowflake’s standout features is its ability to scale computing resources up or down without interrupting service. Users can scale their compute resources independently of their data storage, meaning they can adjust the size of their virtual warehouses to match the workload demand in real-time. This flexibility helps in managing costs and ensures that performance is maintained without over-provisioning resources.

Multi-Cluster Shared Data Architecture: This architecture allows multiple compute clusters to operate simultaneously on the same set of data without degradation in performance, facilitating high concurrency and parallel processing.
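As a rough illustration of how a multi-cluster warehouse absorbs concurrency, the Python sketch below estimates how many clusters would be running for a given number of simultaneous queries. The parameters `slots_per_cluster`, `min_clusters`, and `max_clusters` and their default values are assumptions chosen for the example; the actual scaling decisions are made by Snowflake and depend on more than a simple query count.

```python
import math


def clusters_needed(concurrent_queries, slots_per_cluster=8, min_clusters=1, max_clusters=4):
    """Clusters an auto-scaling warehouse would run for a given number of queries."""
    wanted = math.ceil(concurrent_queries / slots_per_cluster)
    return max(min_clusters, min(max_clusters, wanted))


def queued_queries(concurrent_queries, slots_per_cluster=8, min_clusters=1, max_clusters=4):
    """Queries left waiting once every allowed cluster is full."""
    capacity = clusters_needed(
        concurrent_queries, slots_per_cluster, min_clusters, max_clusters
    ) * slots_per_cluster
    return max(0, concurrent_queries - capacity)


# Map each load level to (clusters running, queries queued).
plan = {load: (clusters_needed(load), queued_queries(load)) for load in (5, 20, 100)}
```

Under these assumed settings, queries only start to queue once the load exceeds what the maximum number of clusters can run, which is why the upper cluster limit is also a cost control.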

2. Data Sharing

Secure Data Sharing: Snowflake enables secure and easy sharing of live data across different accounts. This feature does not require data movement or copies, which enhances data governance and security. Companies can share any slice of their data with consumers inside or outside their organization, who can then access this data in real-time without the need to transfer it physically.

Cross-Region and Cross-Cloud Sharing: Snowflake supports data sharing across different geographic regions and even across different cloud providers, which is a significant advantage for businesses operating in multiple regions or with customers in various locations.

3. Data Cloning

Zero-Copy Cloning: Snowflake’s data cloning feature allows users to create full, independent copies of databases, schemas, or tables without physically duplicating the data. A clone initially shares the underlying storage with its source, and additional storage is used only for data that changes afterwards. This capability is particularly useful for development, testing, or data analysis, as it lets businesses experiment in a cost-effective, low-risk environment. Because cloning is a metadata operation, it typically completes quickly even for large data sets, which speeds up development cycles and encourages experimentation.
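The idea behind zero-copy cloning can be sketched in a few lines of Python. This is a conceptual model, not Snowflake’s implementation: the hypothetical `Table` class holds references to immutable partitions, a clone copies only those references, and new writes create new partitions owned by the table that was written to.

```python
class Table:
    """A table is an ordered list of references to immutable partitions."""

    def __init__(self, partitions=None):
        self.partitions = list(partitions or [])

    def clone(self):
        # Copy only the list of references; the partition objects are shared.
        return Table(self.partitions)

    def append_rows(self, rows):
        # New data goes into a new partition referenced by this table only.
        self.partitions.append(tuple(rows))

    def rows(self):
        return [row for part in self.partitions for row in part]


def physical_partitions(*tables):
    """Count distinct partition objects actually stored across the given tables."""
    return len({id(part) for table in tables for part in table.partitions})


prod = Table([(1, 2, 3), (4, 5, 6)])
dev = prod.clone()
after_clone = physical_partitions(prod, dev)  # clone added no new partitions

dev.append_rows([7, 8])
after_write = physical_partitions(prod, dev)  # only the new data takes space
```

Writing to `dev` leaves `prod` untouched, which is what makes a clone safe to experiment on.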

Key Layers of Snowflake Architecture

1. Storage Layer

In Snowflake, the storage layer is the foundation of the architecture and is responsible for organizing and managing data. It acts as the central repository for all data that users and applications store in Snowflake. What sets this layer apart is its use of micro-partitions: small, compact storage units designed for efficient data management.

Micro-partitions: These are small units of data that Snowflake creates automatically and compresses internally. Within each micro-partition, data is organized in columnar form, with each column stored independently rather than row by row. The columnar format speeds up reads, especially for analytic queries, which usually scan specific columns rather than entire rows.
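The following Python sketch illustrates why columnar micro-partitions help analytic queries. It is a simplified model with hypothetical names and a tiny, made-up data set: rows are split into fixed-size partitions, each partition keeps its columns separately, and a query uses per-partition minimum and maximum values to skip partitions that cannot contain matching rows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroPartition:
    """Columnar block: each column is kept as its own list of values."""
    columns: dict

    def min_max(self, column):
        # Computed on demand here; a real system would keep this as stored metadata.
        values = self.columns[column]
        return min(values), max(values)


def build_partitions(rows, rows_per_partition):
    """Split rows into fixed-size chunks and pivot each chunk into columns."""
    parts = []
    for start in range(0, len(rows), rows_per_partition):
        chunk = rows[start:start + rows_per_partition]
        columns = {name: [row[name] for row in chunk] for name in chunk[0]}
        parts.append(MicroPartition(columns))
    return parts


def sum_where_between(parts, filter_col, low, high, sum_col):
    """Sum sum_col where low <= filter_col <= high, skipping partitions via min/max."""
    scanned, total = 0, 0
    for part in parts:
        lo, hi = part.min_max(filter_col)
        if hi < low or lo > high:
            continue  # pruned: the value range proves no row can match
        scanned += 1
        # Only the two columns the query needs are touched; "note" is never read.
        for f, s in zip(part.columns[filter_col], part.columns[sum_col]):
            if low <= f <= high:
                total += s
    return total, scanned


rows = [{"day": d, "amount": d * 10, "note": "x"} for d in range(1, 13)]
parts = build_partitions(rows, rows_per_partition=4)
total, scanned = sum_where_between(parts, "day", 6, 7, "amount")
```

With this sample data the query for days 6 to 7 scans one of the three partitions and reads two of the three columns, which is the kind of saving columnar storage is designed to deliver.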

2. Compute Layer

The compute layer operates independently of the storage infrastructure and is made up of virtual warehouses. Virtual warehouses are clusters of compute resources that process queries and perform other computational tasks.

Virtual Warehouses: These compute resources can be created on demand and scaled up or down as workloads change. Each warehouse operates independently, so workloads on different warehouses do not compete for compute resources, and many queries can run at once without degrading one another’s performance.

3. Cloud Services Layer

The cloud services layer contains the background services, managed by Snowflake itself, that hold the architecture together. It coordinates operations across the platform and comprises multiple services, ranging from security to query optimization.

Services Provided: This layer handles user authentication, infrastructure management, metadata management, and query parsing and optimization. Together, these services keep all parts of the platform working in concert and give users a smooth experience.

Integration of Components: By handling essential operations such as authentication and query optimization, the cloud services layer lets the storage and compute layers work independently without duplicating each other’s roles. This improves security and performance and also simplifies scalability and maintenance.

Advantages of Snowflake’s Hybrid Architecture

This design gives users the ease of use of shared-disk models together with the performance and scalability of shared-nothing architectures. Some benefits are:

1. Scalability

The ability to scale storage and compute independently enables organizations to flex their resources up or down as required without significant disruption or loss in productivity.

2. Performance

Snowflake can provide faster access speeds and better query performance by reducing resource contention.

3. Cost-effectiveness

Because storage and compute are billed separately, companies pay only for the storage and compute they actually consume, which helps them optimize their investment in data infrastructure.
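A back-of-the-envelope cost model shows how consumption-based pricing adds up. The Python sketch below is an estimate under stated assumptions: the credits-per-hour table follows the doubling pattern Snowflake documents for standard warehouse sizes, compute is billed per second with a 60-second minimum per start, and the prices passed to `monthly_cost` are placeholders to replace with the figures from your own contract.

```python
# Credits consumed per hour by warehouse size (each size doubles the previous one).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}


def billed_seconds(run_seconds):
    """Per-second billing with a 60-second minimum each time a warehouse runs."""
    if run_seconds <= 0:
        return 0
    return max(60, run_seconds)


def compute_credits(size, run_seconds):
    """Credits used by one run of a warehouse of the given size."""
    return CREDITS_PER_HOUR[size] * billed_seconds(run_seconds) / 3600


def monthly_cost(size, runs_seconds, storage_tb, price_per_credit, storage_price_per_tb):
    """Compute cost plus storage cost; both prices are caller-supplied assumptions."""
    credits = sum(compute_credits(size, seconds) for seconds in runs_seconds)
    return credits * price_per_credit + storage_tb * storage_price_per_tb


# Hypothetical month: two runs on a Medium warehouse and 2 TB stored.
estimate = monthly_cost(
    "M", [1800, 3600], storage_tb=2, price_per_credit=3.0, storage_price_per_tb=23.0
)
```

Because run time and size multiply, halving either one halves the compute portion of the bill, which is why suspending idle warehouses and right-sizing them matter so much.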

4. Unified Platform

Snowflake supports a variety of data formats and structures, allowing you to consolidate data from various sources into a single platform. This simplifies data management, improves accessibility, and facilitates comprehensive data analysis.

5. Enhanced Security

Snowflake offers robust security features like fine-grained access control. You can manage data access precisely, ensuring sensitive information is protected according to industry standards and regulations.

6. Streamlined Data Operations

Snowflake’s ability to handle structured and semi-structured data within the same platform eliminates the need for complex data transformation processes. This simplifies data preparation and accelerates insights generation.

7. Improved Query Performance

Snowflake’s architecture is optimized for fast query performance. You can perform complex queries on large datasets efficiently, allowing you to gain valuable insights from your data quickly.

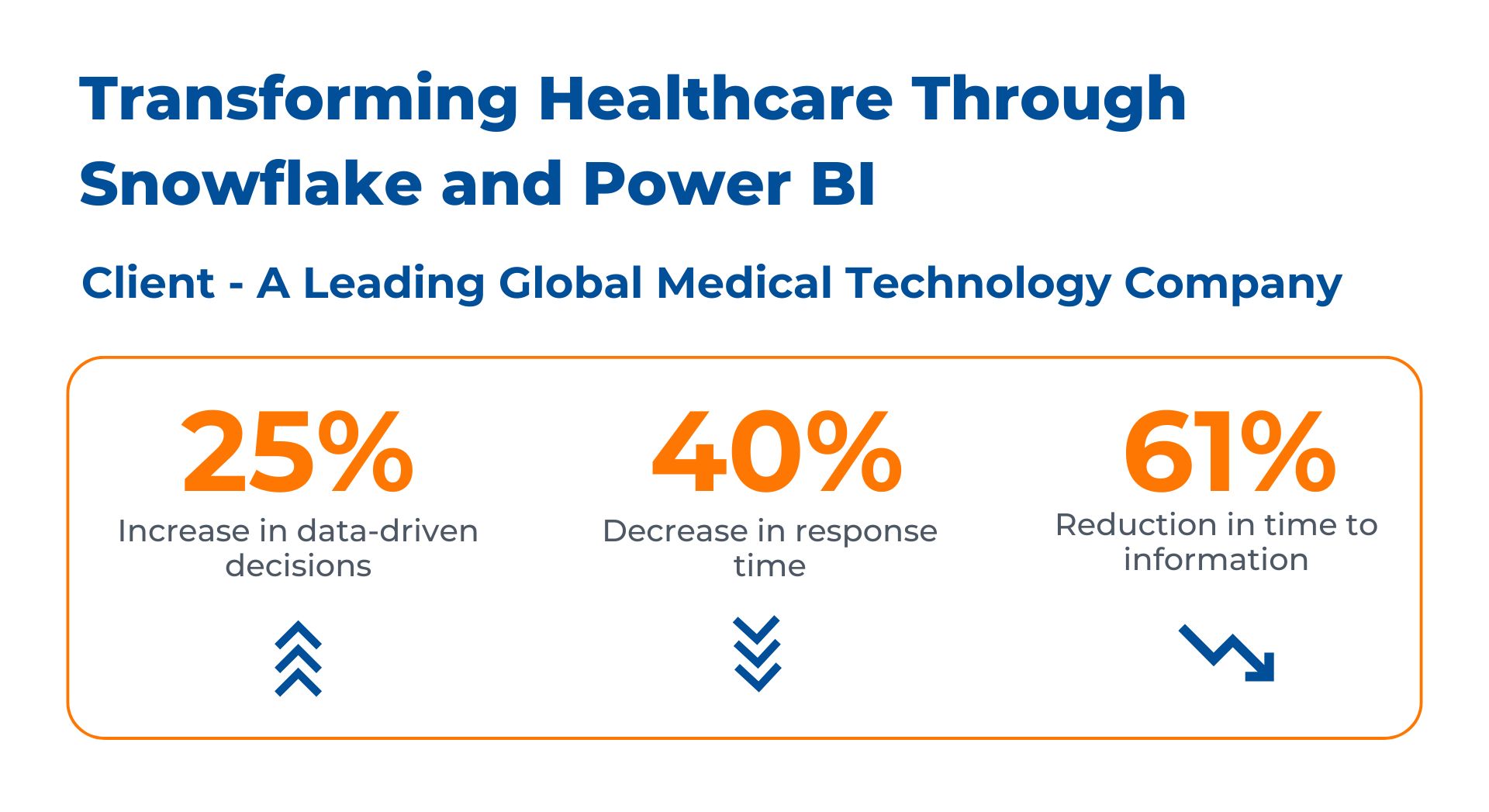

Case Study: Healthcare Giant Streamlines Analytics with Snowflake and Power BI

Challenge: A leading healthcare organization, grappling with a complex and fragmented data landscape, sought help extracting actionable insights from their extensive patient data. The data, scattered across numerous departments and geographical locations, presented a significant hurdle to effective decision-making and the enhancement of patient care.

Solution: Kanerika helped the organization embark on a data modernization project, implementing a two-pronged approach:

- Centralized Data Management with Snowflake: We adopted Snowflake’s cloud-based data warehouse solution. Snowflake’s ability to handle massive datasets and its inherent scalability made it ideal for consolidating data from disparate sources.

- Empowering Data Visualization with Power BI: Once the data was centralized in Snowflake, we leveraged Power BI, a business intelligence tool, to gain insights from the unified data set.

Results:

By leveraging Snowflake and Power BI together, Kanerika helped the healthcare organization achieve significant improvements:

- Faster and More Accurate Insights: Snowflake’s unified data platform and Power BI’s user-friendly interface enabled faster and more accurate analysis of patient data.

- Improved Decision-Making: Data-driven insights from Power BI empowered healthcare professionals to make better decisions regarding patient care, resource allocation, and overall healthcare strategy.

- Enhanced Patient Care: Having a holistic view of patient data allowed for improved care coordination and the development of more personalized treatment plans.

Real-world Use Cases of Snowflake Architecture

Companies like Western Union and Cisco have adopted Snowflake to leverage its powerful data warehousing capabilities, achieving significant operational benefits, including cost reduction and enhanced data governance. These examples illustrate the practical impacts of Snowflake’s innovative architecture in diverse business environments.

1. Western Union

- Scalability and Flexibility: Western Union uses Snowflake to manage its extensive global financial network, handling vast amounts of financial data and transactions. Snowflake’s architecture allows Western Union to scale resources dynamically, accommodating spikes in data processing demand without compromising performance.

- Cost Efficiency: By utilizing Snowflake’s on-demand virtual warehouses, Western Union can optimize its computing costs. This flexibility in scaling ensures that they only pay for the compute resources they use, significantly reducing overhead costs associated with data processing.

- Data Consolidation: Western Union consolidated over 30 different data stores into Snowflake, streamlining data management and improving accessibility. This consolidation has not only reduced infrastructure complexity but also enhanced data analysis capabilities, providing deeper insights at lower costs.

2. Cisco

- Enhanced Data Governance: Cisco utilizes Snowflake’s fine-grained access control features to improve data security and compliance. This capability allows Cisco to manage data access precisely, ensuring that sensitive information is protected according to industry standards and regulations.

- Operational Efficiency: Snowflake’s support for various data structures and formats has enabled Cisco to streamline its data operations. The ability to perform complex queries and analytics on structured and semi-structured data within the same platform reduces the need for data transformation and speeds up insight generation.

- Cost Reduction: Cisco has benefited from Snowflake’s storage and compute separation, which allows for more cost-effective data management. By fine-tuning the storage and compute usage based on actual needs, Cisco has minimized unnecessary expenses, enhancing overall financial efficiency.

Challenges and Considerations in Snowflake’s Architecture

While Snowflake offers a robust data warehousing solution with numerous benefits, it’s not without its challenges and limitations. Companies considering adopting Snowflake should be aware of these potential issues and plan accordingly to mitigate them.

Challenges

- Complexity in Cost Management: Although Snowflake’s pay-as-you-go model is advantageous, it can also lead to unpredictability in costs. Without proper management, costs can escalate, especially if large volumes of data transfers occur or if there is inefficient use of virtual warehouses.

- Data Transfer Costs: While Snowflake provides significant advantages in data storage and access, data transfer costs can be a concern, especially when integrating with data sources across different clouds or regions. This might require additional planning and budgeting to manage effectively.

- Learning Curve: Despite its user-friendly interface, Snowflake has a unique architecture that can be complex to understand and implement effectively. Organizations might face challenges with initial setup and optimization unless they invest in training or hire experienced personnel.

Considerations for Adoption

- Evaluate Data Strategy: Before adopting Snowflake, companies should evaluate their current and future data needs. Understanding the type of data, volume, and analysis requirements can help in designing an architecture that leverages Snowflake’s strengths effectively.

- Budget Planning: Due to the potential variability in costs, it is crucial for companies to plan their budgets carefully. Implementing monitoring tools and setting up alerts for abnormal spikes in usage can help in managing costs effectively.

- Integration with Existing Systems: Companies need to consider how Snowflake will fit into their existing data ecosystem. This includes evaluating how it integrates with existing applications and whether additional tools or services are needed to bridge any gaps.

- Security and Compliance: While Snowflake provides robust security features, companies must ensure that these features align with their security policies and compliance requirements. It’s important to configure these settings correctly to protect sensitive data and meet regulatory standards.
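The budget-planning advice above, monitoring usage and alerting on abnormal spikes, can be prototyped with a simple statistical check. The Python sketch below is one possible approach rather than a Snowflake feature: it flags any day whose credit usage exceeds the trailing average by a chosen number of standard deviations. The window, threshold, minimum spread, and sample figures are all assumptions for illustration.

```python
from statistics import mean, pstdev


def find_spikes(daily_credits, window=7, threshold=3.0, min_spread=1.0):
    """Return indexes of days whose usage is far above the trailing window."""
    spikes = []
    for i in range(window, len(daily_credits)):
        history = daily_credits[i - window:i]
        baseline = mean(history)
        # Floor the spread so a perfectly flat history does not flag tiny changes.
        spread = max(pstdev(history), min_spread)
        if daily_credits[i] > baseline + threshold * spread:
            spikes.append(i)
    return spikes


# Hypothetical daily credit usage; day index 8 is an obvious outlier.
usage = [10, 11, 9, 10, 12, 10, 11, 10, 45, 11]
spike_days = find_spikes(usage)
```

In practice the daily figures would come from the platform’s usage reporting, and a flagged day would trigger a notification or a review of which warehouses were running.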

Kanerika: Your Reliable Partner for Efficient Snowflake Implementations

Kanerika, with its expertise in building efficient enterprises through automated and integrated solutions, plays a crucial role in enhancing the implementation and optimization of Snowflake architectures for global brands.

Strategic Integration and Customization

Kanerika leverages its proprietary digital consulting frameworks to design tailored Snowflake solutions that align with an organization’s specific data strategies and goals. By automating routine data operations and integrations, we can help organizations reduce operational overhead and accelerate data workflows in Snowflake. This includes automating data ingestion, transformation, and the management of virtual warehouses, enhancing the overall efficiency of the data platform.

Enhanced Decision-Making and Market Responsiveness

Leveraging advanced analytics capabilities, we transform Snowflake’s stored data into actionable insights, empowering decision-makers with accurate and timely information to respond swiftly to evolving market conditions. We can develop custom analytics and reporting solutions that integrate seamlessly with Snowflake.

Security and Compliance

We ensure that Snowflake implementations comply with the latest security standards and regulatory requirements. This includes configuring Snowflake’s security settings to match an organization’s specific compliance and governance frameworks.

Through strategic partnership with Kanerika, organizations using Snowflake can enhance their data warehousing capabilities significantly. Kanerika’s role in streamlining integration, optimizing costs, and enabling informed decision-making helps businesses maximize their investment in Snowflake, ensuring they are not only prepared to meet current data challenges but are also positioned to capitalize on future opportunities.

Frequently Asked Questions

What is Snowflake architecture?

Snowflake architecture is a cloud-native data platform design that separates compute, storage, and services into independent layers. This multi-cluster shared data architecture allows organizations to scale each component independently without impacting performance or availability. Unlike traditional data warehouses, Snowflake runs entirely on cloud infrastructure from AWS, Azure, or Google Cloud, eliminating hardware management. The architecture enables concurrent workloads, automatic scaling, and near-unlimited storage capacity. Kanerika helps enterprises leverage Snowflake architecture for modern analytics—connect with our team to design your cloud data platform.

What is the 3-tier architecture of Snowflake?

Snowflake’s 3-tier architecture consists of the database storage layer, query processing layer, and cloud services layer. The storage layer holds data in compressed, columnar format across cloud object storage. The query processing layer uses virtual warehouses—independent compute clusters that execute queries without resource contention. The cloud services layer manages authentication, metadata, query optimization, and infrastructure coordination. This separation allows each tier to scale independently, delivering consistent performance regardless of workload demands. Kanerika’s Snowflake specialists can architect your three-layer deployment for optimal cost and performance—schedule a consultation today.

What is the difference between traditional architecture and Snowflake architecture?

Traditional data warehouse architecture tightly couples compute and storage on fixed hardware, requiring capacity planning and manual scaling. Snowflake architecture decouples these resources entirely, enabling independent scaling of processing power and storage on demand. Traditional systems often suffer performance degradation during concurrent queries, while Snowflake’s multi-cluster compute can add clusters to serve many concurrent users with little contention. Maintenance windows, indexing strategies, and much of the manual tuning are replaced by Snowflake’s automated optimization. Cost models shift from upfront capital expenditure to consumption-based pricing. Kanerika migrates enterprises from legacy data warehouses to Snowflake with minimal disruption. Request your migration assessment now.

Is Snowflake an OLAP or OLTP?

Snowflake is an OLAP (Online Analytical Processing) platform designed for complex analytical queries across large datasets. Its columnar storage architecture and MPP (massively parallel processing) engine optimize read-heavy analytical workloads rather than transactional operations. While Snowflake supports standard SQL and can handle some transactional patterns, it lacks OLTP features like row-level locking for high-frequency inserts and updates. Organizations typically use Snowflake alongside OLTP databases, loading transactional data for analytics and reporting. Kanerika designs integrated data architectures connecting your OLTP systems with Snowflake analytics—let’s discuss your data strategy.

What's unique about Snowflake architecture?

Snowflake architecture uniquely combines shared-disk and shared-nothing designs into a hybrid model. Data resides in centralized cloud storage accessible to all compute nodes, while each virtual warehouse operates independently with dedicated resources. This eliminates data movement between clusters and resource contention during peak loads. Zero-copy cloning creates instant database copies without duplicating storage. Time Travel enables querying historical data states without backup complexity. Automatic micro-partitioning removes manual indexing requirements. These innovations distinguish Snowflake from legacy and competing cloud platforms. Kanerika unlocks these architectural advantages for your enterprise—explore how with a free discovery call.

Is Snowflake a PaaS or SaaS?

Snowflake operates as a SaaS (Software as a Service) data platform delivered entirely through cloud infrastructure. Users access Snowflake through web interfaces and SQL clients without installing, configuring, or maintaining any software or hardware. Snowflake handles all infrastructure management, updates, security patches, and optimization automatically. Unlike PaaS offerings requiring application deployment and management, Snowflake provides ready-to-use data warehousing, data lake, and data sharing capabilities. The consumption-based pricing model reinforces its SaaS delivery, charging only for compute and storage used. Kanerika accelerates your Snowflake SaaS adoption with proven implementation frameworks—reach out to begin.

Is Snowflake considered ETL or ELT?

Snowflake supports ELT (Extract, Load, Transform) workflows natively through its powerful compute architecture. Raw data loads directly into Snowflake, then transforms execute using Snowflake’s scalable virtual warehouses. This approach leverages Snowflake’s elastic compute capacity and columnar processing for transformation performance that traditional ETL tools cannot match. While Snowflake integrates with external ETL platforms, its architecture favors ELT by separating storage costs from transformation compute. Organizations increasingly adopt ELT patterns to simplify pipelines and reduce data movement latency. Kanerika builds optimized ELT pipelines on Snowflake architecture—talk to our data engineers about modernizing your workflows.
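The ELT pattern is easy to see in miniature. The Python sketch below uses the standard-library `sqlite3` module purely as a stand-in for a warehouse engine, so the SQL is SQLite’s rather than Snowflake’s, and the table names and sample rows are made up. Raw data is loaded untouched first, and the cleaning and aggregation then run as SQL inside the engine that already holds the data.

```python
import sqlite3

# Raw source records, deliberately messy: padded, mixed-case, amounts as text.
raw_events = [
    ("2024-01-05", " us ", "19.50"),
    ("2024-01-05", "DE", "5.00"),
    ("2024-01-06", "us", "0.50"),
]

conn = sqlite3.connect(":memory:")

# Extract + Load: land the data as-is, every column as text.
conn.execute("CREATE TABLE raw_orders (order_date TEXT, country TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_events)

# Transform: clean and aggregate with SQL, after loading, inside the engine.
conn.execute(
    """
    CREATE TABLE orders_by_country AS
    SELECT UPPER(TRIM(country)) AS country,
           SUM(CAST(amount AS REAL)) AS revenue,
           COUNT(*) AS orders
    FROM raw_orders
    GROUP BY UPPER(TRIM(country))
    """
)

summary = conn.execute(
    "SELECT country, revenue, orders FROM orders_by_country ORDER BY country"
).fetchall()
conn.close()
```

Keeping the untouched `raw_orders` table means the transformation can be rewritten and rerun later without extracting the data again, one of the practical reasons teams prefer ELT.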

Is Snowflake a database or data warehouse?

Snowflake is a cloud data warehouse built for analytical workloads, though it extends beyond traditional data warehouse capabilities. Its architecture supports structured and semi-structured data, data lake functionality, data sharing, and application development through Snowpark. While Snowflake uses SQL and stores relational data like a database, its columnar storage, MPP processing, and OLAP optimization distinguish it from transactional databases. Snowflake positions itself as a Data Cloud platform encompassing warehousing, lakes, and collaboration. The architecture serves analytics-first use cases requiring scale and concurrency. Kanerika implements Snowflake data warehouse solutions tailored to your analytical requirements—contact us for a platform evaluation.

Is Snowflake a warehouse or lakehouse?

Snowflake originated as a cloud data warehouse but has evolved toward lakehouse capabilities. Its architecture now supports external tables on data lake storage, native handling of semi-structured formats like JSON and Parquet, and Iceberg table integration. Snowflake’s data sharing and marketplace features extend beyond traditional warehousing. However, Snowflake differs from pure lakehouse platforms like Databricks by maintaining its managed storage layer rather than operating directly on open lake formats. Most organizations position Snowflake as a governed warehouse with lakehouse extensibility. Kanerika architects hybrid warehouse-lakehouse solutions on Snowflake—schedule a consultation to explore your options.

What is a key feature of Snowflake architecture?

Separation of compute and storage stands as the defining feature of Snowflake architecture. This decoupling allows organizations to scale processing power independently from data storage, paying only for resources consumed. Virtual warehouses spin up instantly, process queries, then suspend without affecting stored data or other workloads. Multiple compute clusters access the same data simultaneously without copying or moving it. This architecture eliminates resource contention, enables workload isolation, and delivers predictable performance at any scale. Traditional systems cannot match this elasticity or cost efficiency. Kanerika optimizes Snowflake compute-storage configurations for your workloads—get your architecture review today.

How is Snowflake different from SQL?

Snowflake is a cloud data platform, while SQL is a query language—they serve fundamentally different purposes. Snowflake uses ANSI SQL as its primary interface, supporting standard syntax plus Snowflake-specific extensions for semi-structured data and advanced functions. SQL Server, MySQL, and PostgreSQL are database management systems that also use SQL. Snowflake’s architecture differs through cloud-native design, automatic scaling, separated compute-storage layers, and zero administration requirements. You write SQL queries to interact with Snowflake, but Snowflake handles infrastructure that traditional SQL databases require you to manage. Kanerika helps teams migrate SQL workloads to Snowflake seamlessly—connect with our migration experts.

Is Snowflake better than Databricks?

Snowflake and Databricks excel in different scenarios rather than one being universally better. Snowflake architecture optimizes for SQL-based analytics, BI workloads, and data sharing with minimal administration. Databricks suits data science, machine learning, and streaming workloads using Spark-based processing on open lakehouse formats. Snowflake offers simpler governance and easier adoption for SQL-proficient teams. Databricks provides more flexibility for Python and Scala developers building ML pipelines. Many enterprises deploy both platforms for complementary use cases. The right choice depends on your workload mix and team skills. Kanerika evaluates both platforms against your requirements—request a comparative assessment.

What is the difference between Oracle and Snowflake architecture?

Oracle uses traditional shared-disk architecture with tightly coupled compute and storage requiring significant infrastructure management. Snowflake architecture separates these layers entirely on cloud infrastructure with automatic scaling and zero administration. Oracle requires capacity planning, manual tuning, indexing strategies, and DBA expertise. Snowflake eliminates these through automated optimization and elastic resources. Oracle licenses based on processor cores with complex enterprise agreements, while Snowflake charges consumption-based pricing. Oracle supports OLTP and OLAP workloads; Snowflake focuses on analytics. Migration involves rearchitecting schemas and queries for Snowflake’s columnar design. Kanerika executes Oracle to Snowflake migrations with validated frameworks—explore your modernization path with us.

Can Snowflake replace Oracle?

Snowflake can replace Oracle for analytical and data warehousing workloads but not for OLTP applications requiring high-frequency transactions. Snowflake’s architecture handles complex queries, reporting, and BI use cases that traditionally ran on Oracle data warehouses. However, Oracle databases serving transactional applications with row-level operations need purpose-built OLTP systems. Organizations often migrate Oracle analytics to Snowflake while maintaining Oracle or other databases for transactions, then integrate both through data pipelines. Query syntax differences and Oracle-specific features require conversion during migration. Kanerika assesses your Oracle workloads and architects appropriate Snowflake replacement strategies—start with our migration readiness evaluation.

What are the disadvantages of Snowflake?

Snowflake’s consumption-based pricing can escalate unexpectedly without proper governance—runaway queries and always-on warehouses drive costs quickly. The architecture depends entirely on cloud providers, creating vendor lock-in concerns for some organizations. Snowflake lacks native OLTP capabilities, requiring separate systems for transactional workloads. Data egress fees apply when moving data out of Snowflake. Real-time streaming requires additional tooling compared to native lakehouse platforms. Limited customization options exist compared to self-managed solutions. Performance tuning requires understanding virtual warehouse sizing rather than traditional database optimization. Kanerika implements Snowflake governance frameworks that control costs while maximizing value—let us optimize your deployment.

What is Snowflake used for?

Snowflake serves as a cloud data platform for analytics, business intelligence, data engineering, and data sharing use cases. Organizations use Snowflake architecture to consolidate data warehouses, build modern data pipelines, enable self-service analytics, and share data securely with partners. Common applications include financial reporting, customer analytics, operational dashboards, and machine learning feature stores. Snowflake handles structured data from transactional systems alongside semi-structured data like JSON logs and IoT streams. Its elastic scaling supports variable workloads from ad-hoc queries to enterprise-wide reporting. Kanerika implements Snowflake solutions across analytics use cases—discuss your data platform needs with our architects.

Is Snowflake columnar or row-based?

Snowflake uses columnar storage architecture, storing data by column rather than row. This design dramatically improves analytical query performance by reading only the columns needed for each query rather than scanning entire rows. Columnar storage also enables superior compression since similar data types cluster together, reducing storage costs and I/O operations. Snowflake automatically organizes data into micro-partitions using columnar format without requiring manual optimization. This architecture excels for aggregations, filtering, and analytical workloads while being less optimal for single-row transactional operations. The columnar approach underpins Snowflake’s OLAP performance advantages. Kanerika designs schemas optimized for Snowflake’s columnar architecture—talk to our data modeling experts.

Who is Snowflake's biggest competitor?

Databricks represents Snowflake’s most significant competitor in the cloud data platform market. Both target enterprise analytics workloads with scalable, cloud-native architectures but differ in approach—Snowflake emphasizing managed SQL analytics and Databricks focusing on unified lakehouse with ML capabilities. Amazon Redshift, Google BigQuery, and Microsoft Fabric also compete directly in cloud data warehousing. Each platform offers distinct architectural advantages: Redshift for AWS-native deployments, BigQuery for serverless simplicity, and Fabric for Microsoft ecosystem integration. Competitive positioning depends on existing cloud investments and workload requirements. Kanerika maintains expertise across these platforms and helps you select the right architecture—request a platform comparison consultation.