In today’s data-driven world, the importance of data analytics and business intelligence cannot be overstated. Organizations are constantly seeking tools that can help them make sense of the vast amounts of data they collect. Microsoft Azure, a leading cloud service provider, offers a range of solutions designed to meet these needs. Among them, Azure Databricks and Azure Data Factory stand out as powerful platforms for data analytics and integration, respectively.

The objective of this comparison is to delve into the features, capabilities, and use cases of these two platforms. Whether you are looking to perform complex data analytics, require machine learning capabilities, or need to integrate various data sources, this guide aims to provide clarity on Azure Databricks vs Data Factory.

What is Azure Databricks?

Azure Databricks is not just another data analytics platform; it’s a comprehensive solution designed to make big data analytics simple. Built on Apache Spark, the leading open-source, parallel-processing framework, Azure Databricks offers a range of functionalities that are critical for big data operations and machine learning workflows.

The platform is engineered to provide a unified data analytics solution that is both collaborative and deeply integrated with a plethora of data storage options like Azure Blob Storage, Azure Data Lake Storage, and relational databases. This makes it incredibly versatile and capable of handling various data formats and types, whether structured or unstructured.

One of the most compelling features of Azure Databricks is its machine-learning capabilities. It provides a collaborative environment where data scientists, data engineers, and business analysts can work together seamlessly. From data preparation to model training and deployment, Azure Databricks offers a streamlined workflow for machine learning projects. This makes it an ideal choice for organizations aiming to leverage machine learning to derive actionable insights from their data.


What is Azure Data Factory?

Azure Data Factory stands as a robust data integration service that enables organizations to move and transform data from various supported data stores to a centralized data repository, where it can be readily accessed and analyzed. Unlike Azure Databricks, the primary focus of Azure Data Factory is not analytics but rather the Extract, Transform, Load (ETL) processes that are crucial for data integration.

The platform allows you to create, schedule, and orchestrate data pipelines, which can move data from disparate sources and transform it into a format suitable for analytics. It supports a wide range of data stores, including Azure Blob Storage, Azure Synapse Analytics (formerly Azure SQL Data Warehouse), on-premises SQL Server, and many more. This makes it adaptable to a broad range of data integration needs.

Azure Data Factory can also transform data by handing the work to external compute services such as Azure HDInsight (Hadoop and Spark), Azure Databricks, and Azure Data Lake Analytics. This lets you run the necessary transformations and make your data analytics-ready.
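To make the hand-off to a compute service concrete, here is a minimal sketch of a Data Factory activity that runs an Azure Databricks notebook. It only builds the JSON definition as a Python dictionary. The activity name, linked service name, notebook path, and parameter are hypothetical placeholders, and the field layout follows the pipeline JSON format as we understand it, so check it against the current documentation before relying on it.

```python
import json


def notebook_activity(name, linked_service_name, notebook_path, parameters=None):
    """Build the JSON definition of an activity that runs a Databricks notebook."""
    return {
        "name": name,
        "type": "DatabricksNotebook",
        # Points at a linked service that holds the Databricks workspace connection.
        "linkedServiceName": {
            "referenceName": linked_service_name,
            "type": "LinkedServiceReference",
        },
        "typeProperties": {
            "notebookPath": notebook_path,
            # Values passed to the notebook as parameters.
            "baseParameters": parameters or {},
        },
    }


# All names below are hypothetical examples.
activity = notebook_activity(
    name="TransformSales",
    linked_service_name="DatabricksWorkspace",
    notebook_path="/Shared/transform_sales",
    parameters={"run_date": "2024-01-01"},
)
print(json.dumps(activity, indent=2))
```

In this arrangement Data Factory handles scheduling and orchestration, while the transformation logic itself lives in the notebook.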


Comparing Azure Data Factory Vs Databricks

In short, both platforms play important roles in the data ecosystem, but they serve different purposes. Azure Databricks is your go-to platform for big data analytics and machine learning, offering a unified and collaborative environment for complex analytics tasks. Azure Data Factory, on the other hand, excels in data integration and ETL processes, providing a robust set of tools to move and transform data from various sources.

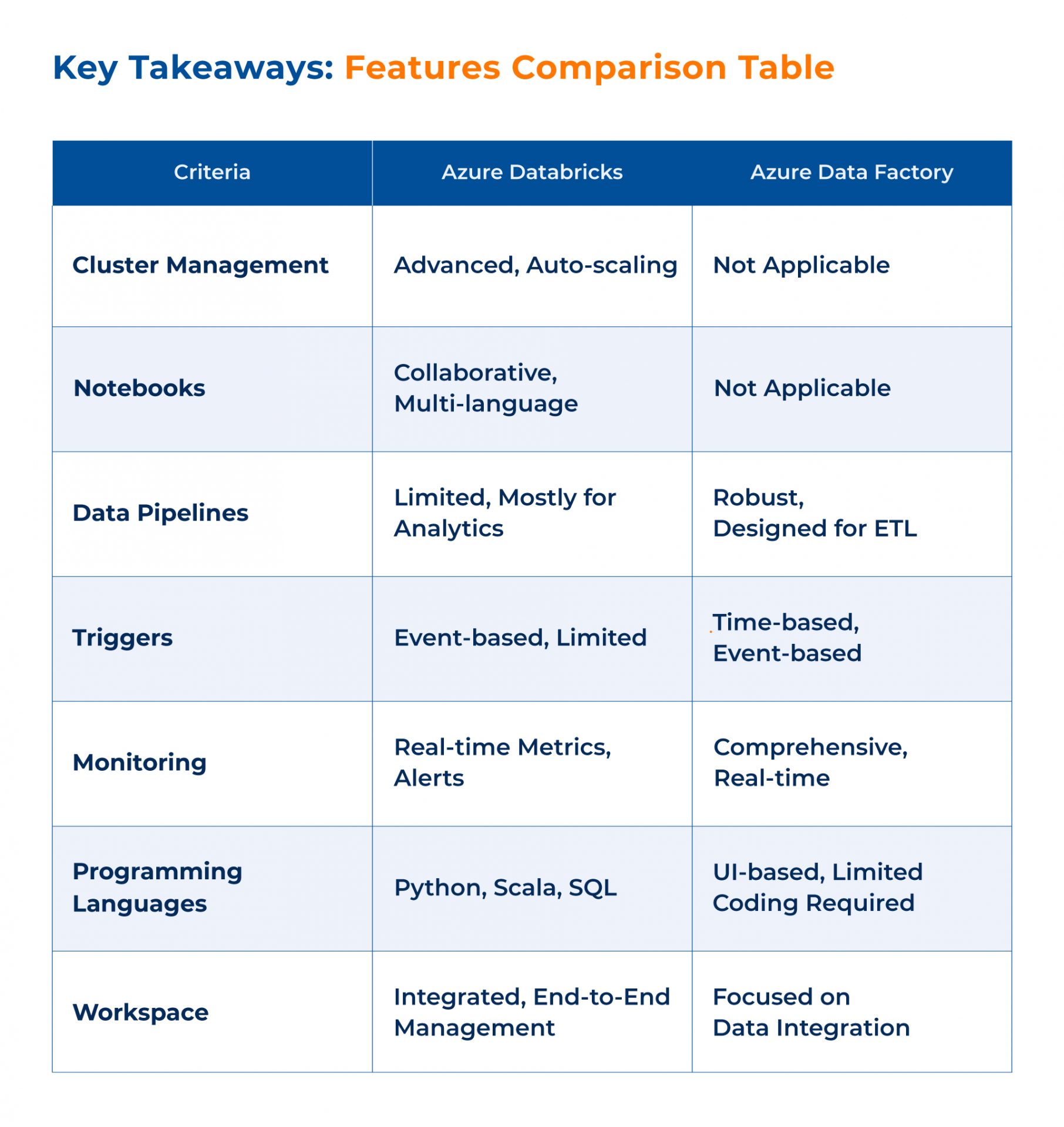

Azure Data Factory Vs Databricks Features

Azure Databricks: More Than Just Analytics

Databricks is a feature-rich platform designed to facilitate a wide range of data analytics tasks. One of its most notable features is cluster management. This allows organizations to automatically manage and scale clusters, optimizing resource usage and reducing costs. The platform’s auto-scaling capabilities ensure that you’re only using the resources you need when you need them.
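As a rough illustration of auto-scaling, the sketch below builds a cluster definition of the kind the Databricks Clusters API accepts, with a minimum and maximum worker count and an idle timeout. The cluster name and worker counts are hypothetical, and the runtime version and VM size are left as placeholders because valid values depend on your workspace.

```python
import json


def build_cluster_spec(name, min_workers, max_workers, idle_minutes=30):
    """Return a cluster definition with autoscaling and auto-termination enabled."""
    if not 1 <= min_workers <= max_workers:
        raise ValueError("expected 1 <= min_workers <= max_workers")
    return {
        "cluster_name": name,
        # Placeholders: choose a runtime and VM size available in your workspace.
        "spark_version": "<runtime-version>",
        "node_type_id": "<vm-size>",
        # Databricks adds or removes workers within this range based on load.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        # Shut the cluster down after this many idle minutes.
        "autotermination_minutes": idle_minutes,
    }


# Hypothetical example: scale between 2 and 8 workers.
spec = build_cluster_spec("nightly-analytics", min_workers=2, max_workers=8)
print(json.dumps(spec, indent=2))
```

The range caps spend at the upper bound while letting light workloads run on the lower one, and the idle timeout stops an unused cluster from accruing cost.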

Another significant feature is the collaborative notebooks. These notebooks serve as a unified workspace where data scientists, data engineers, and business analysts can collaborate in real time. This collaborative environment fosters innovation and accelerates the development of data analytics projects.

The integrated workspace in Azure Databricks is another feature that sets it apart. This workspace allows for end-to-end project management, from data ingestion and preparation to analytics and machine learning. It provides a centralized location for all your data analytics needs, making it easier to manage projects and collaborate with team members.

Azure Data Factory: The Backbone of Data Integration

Azure Data Factory is tailored for data integration, and its features reflect this focus. One of the core features is data pipelines, which allow you to create, schedule, and orchestrate data workflows. These pipelines can be complex, supporting a variety of data sources and transformations. They can be triggered based on various conditions such as schedules, data availability, or other dependencies, providing a high level of flexibility.
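The sketch below shows roughly what a pipeline and a schedule trigger look like as JSON definitions, built here as Python dictionaries. It assumes a single Copy activity that moves data from a blob dataset to a SQL dataset once a day. The pipeline, dataset, and trigger names are hypothetical, and the source and sink types are just one possible pairing.

```python
import json


def build_copy_pipeline(name, source_dataset, sink_dataset):
    """Pipeline definition with a single Copy activity between two datasets."""
    return {
        "name": name,
        "properties": {
            "activities": [
                {
                    "name": "CopyBlobToSql",
                    "type": "Copy",
                    "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
                    "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
                    "typeProperties": {
                        "source": {"type": "BlobSource"},
                        "sink": {"type": "SqlSink"},
                    },
                }
            ]
        },
    }


def build_daily_trigger(name, pipeline_name, start_time):
    """Schedule trigger definition that runs the named pipeline once a day."""
    return {
        "name": name,
        "properties": {
            "type": "ScheduleTrigger",
            "typeProperties": {
                "recurrence": {
                    "frequency": "Day",
                    "interval": 1,
                    "startTime": start_time,
                    "timeZone": "UTC",
                }
            },
            "pipelines": [
                {"pipelineReference": {"referenceName": pipeline_name, "type": "PipelineReference"}}
            ],
        },
    }


# All names below are hypothetical examples.
pipeline = build_copy_pipeline("DailySalesLoad", "SalesBlobDataset", "SalesSqlDataset")
trigger = build_daily_trigger("DailyAt2am", pipeline["name"], "2024-01-01T02:00:00Z")
print(json.dumps({"pipeline": pipeline, "trigger": trigger}, indent=2))
```

Keeping the trigger separate from the pipeline means the same pipeline can be run on several schedules, or on demand, without changing its definition.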

Another standout feature is mapping data flows, which let you design data transformations visually without writing code. Data Factory runs them on Spark clusters that it manages for you, so you can build ETL processes quickly without provisioning compute yourself.

Monitoring is another area where Azure Data Factory excels. The platform provides robust monitoring features, including real-time performance insights and failure alerts. This ensures that you can quickly identify and address any issues, minimizing downtime and ensuring the reliability of your data integration workflows.

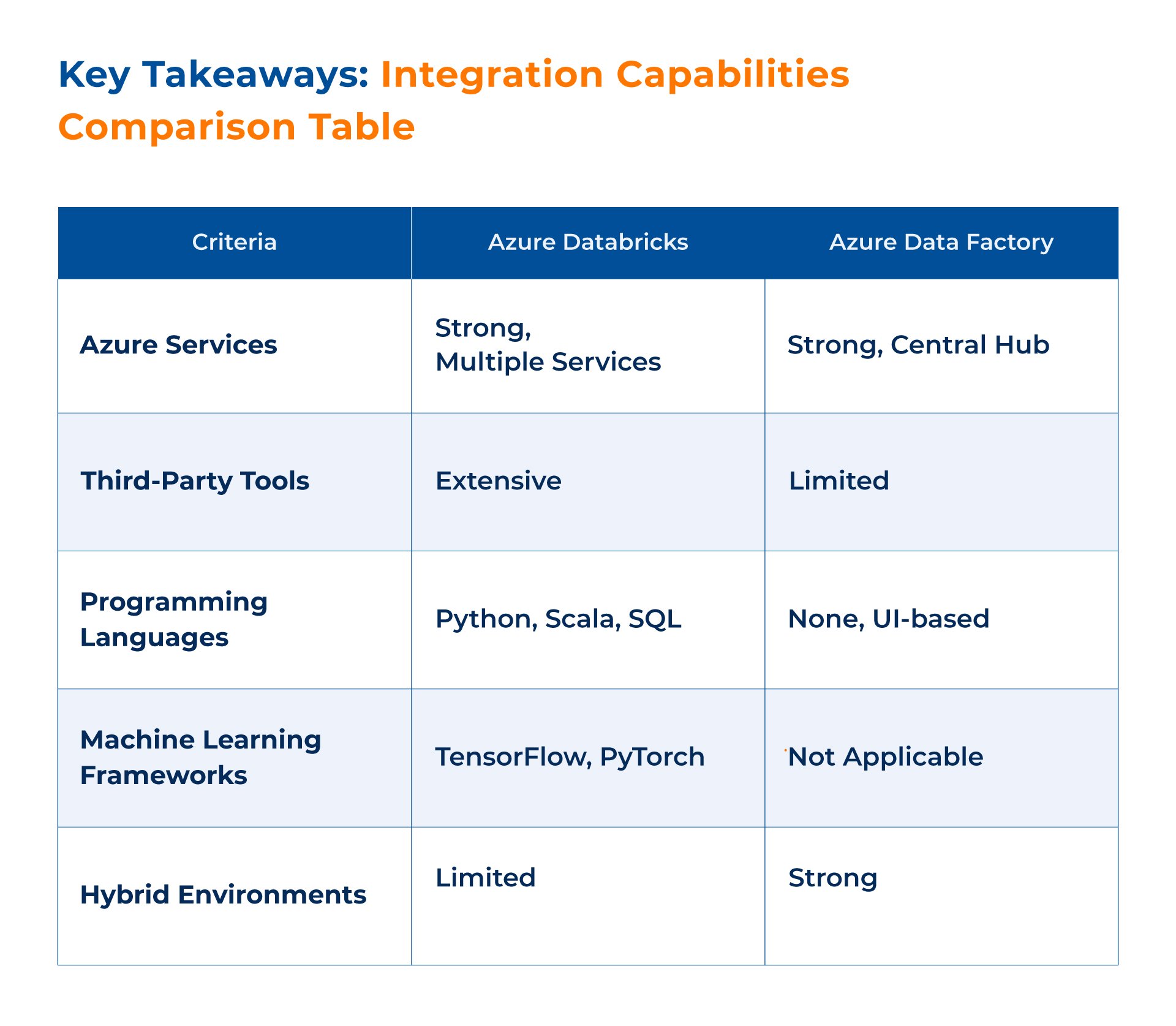

Azure Data Factory Vs Databricks: Integration Capabilities

Azure Databricks: A Seamless Integration Experience

Databricks offers robust integration capabilities, especially when it comes to Azure services and third-party tools. The platform is designed to work with Azure Blob Storage, Azure Data Lake Storage, and Azure Synapse Analytics (formerly Azure SQL Data Warehouse), among others, so you can read and write data across Azure services from a single workspace.

Moreover, Azure Databricks supports several programming languages, including Python, Scala, SQL, and R, making it highly versatile. It also offers libraries and APIs that allow for easy integration with machine learning frameworks like TensorFlow and PyTorch. This makes it a highly extensible platform that can fit into a wide variety of data analytics ecosystems.

Azure Data Factory: The Hub of Azure Data Services

Azure Data Factory shines when it comes to integration capabilities. It is designed to be the hub for all your Azure data services. Whether you’re using Azure Blob Storage, Azure Synapse Analytics (formerly Azure SQL Data Warehouse), or even on-premises SQL Server, Azure Data Factory has a built-in connector for it.

The platform offers connectors for a wide range of Azure services and for many non-Azure data stores, making it easier to build end-to-end data integration solutions. It also supports hybrid environments: a self-hosted integration runtime lets you move data from on-premises data sources to the cloud and vice versa. This makes it a flexible and powerful tool for any organization’s data strategy.

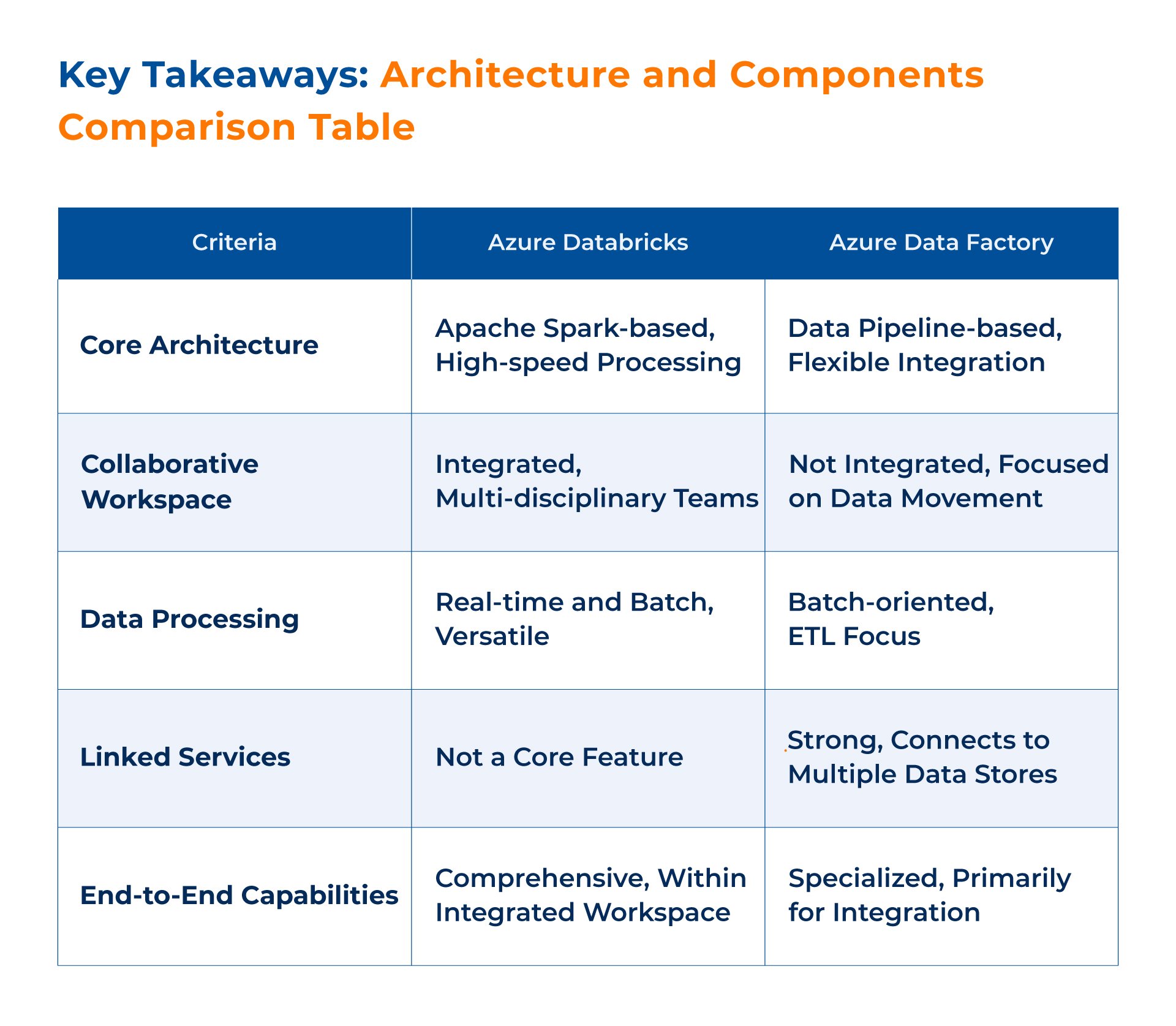

Azure Data Factory Vs Databricks: Architecture and Components

Azure Databricks: Fueled by Apache Spark

Databricks is built on the robust architecture of Apache Spark. This architecture not only allows for high-speed data processing but also provides a versatile platform for various data analytics tasks, including machine learning. The Spark-based architecture is designed to handle large volumes of data efficiently, making it a prime choice for organizations dealing with big data challenges.

The platform also incorporates a collaborative workspace, which serves as an integrated environment for end-to-end data analytics. Data ingestion, data preparation, analytics, and machine learning all happen in this one workspace, so data scientists, data engineers, and business analysts can collaborate and manage projects without switching tools.

Azure Data Factory: Orchestrating Data Pipelines

In contrast, Azure Data Factory employs a data pipeline architecture, specifically designed to facilitate data integration tasks. These pipelines serve as the conduits for moving and transforming data from a multitude of sources to a centralized data repository. The architecture is highly flexible, allowing you to create, schedule, and orchestrate data pipelines with ease.

One of the standout concepts in Azure Data Factory’s architecture is the linked service. Linked services work much like connection strings: each one holds the connection information Data Factory needs to reach a data store or compute service, whether it is in the cloud or on-premises. This adds a layer of flexibility and makes Azure Data Factory a highly adaptable tool for diverse data integration needs.
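For a sense of what a linked service looks like in practice, here is a minimal sketch that builds two definitions as Python dictionaries: one for a cloud store and one for an on-premises SQL Server reached through a self-hosted integration runtime. The names `BlobStore`, `OnPremSql`, and `SelfHostedIR` are hypothetical, and the connection strings are placeholders.

```python
import json


def linked_service(name, store_type, connection_string, integration_runtime=None):
    """Build a linked service definition for a data store."""
    definition = {
        "name": name,
        "properties": {
            "type": store_type,
            "typeProperties": {"connectionString": connection_string},
        },
    }
    if integration_runtime:
        # On-premises stores are reached through a named integration runtime.
        definition["properties"]["connectVia"] = {
            "referenceName": integration_runtime,
            "type": "IntegrationRuntimeReference",
        }
    return definition


# Hypothetical names; connection strings are placeholders, not real credentials.
cloud = linked_service(
    "BlobStore",
    "AzureBlobStorage",
    "DefaultEndpointsProtocol=https;AccountName=<account>;AccountKey=<key>",
)
on_prem = linked_service(
    "OnPremSql",
    "SqlServer",
    "Server=<server>;Database=<database>;User ID=<user>;Password=<password>",
    integration_runtime="SelfHostedIR",
)
print(json.dumps([cloud, on_prem], indent=2))
```

In a real deployment you would normally keep secrets out of the definition itself, for example by referencing them from Azure Key Vault.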

Azure Data Factory Vs Databricks: Beyond the Basics

Azure Databricks: Scaling with Your Needs

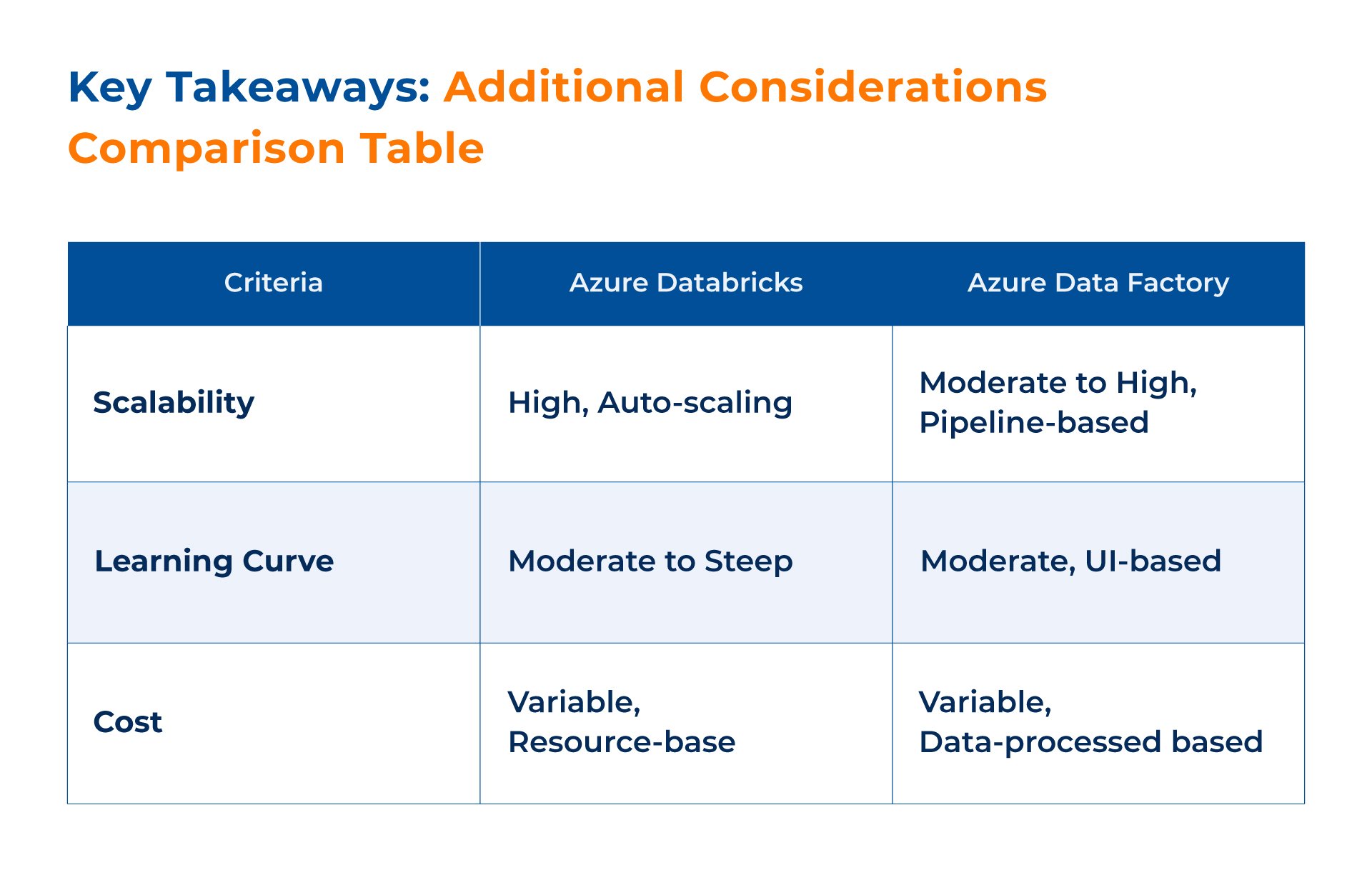

When it comes to scalability, Azure Databricks is designed to grow with your organization. Its Spark-based architecture allows for the handling of large data volumes, and its auto-scaling features ensure that resources are optimized. Whether you’re dealing with a small dataset or petabytes of information, Azure Databricks can scale to meet your needs.

Azure Data Factory: Adaptable and Cost-Effective

Azure Data Factory also offers scalability but in a different context. Its data pipeline architecture is designed to handle varying workloads and can scale to accommodate large data transfers. Moreover, its pricing is consumption-based: you are billed for the pipeline activity runs, data movement, and data flow compute you actually use, which makes it a cost-effective solution for many data integration scenarios.

Learning Curve: Ease of Adoption

Azure Databricks, with its rich feature set and capabilities, can have a moderate to steep learning curve, especially for those new to Spark or big data analytics. However, its collaborative notebooks and integrated workspace aim to simplify the user experience.

Azure Data Factory, on the other hand, is generally easier to adopt, especially for those familiar with data integration and ETL processes. Its user interface is designed to be intuitive, and it offers templates to speed up pipeline creation.

Which One is Right for You?

Scenarios Where Azure Databricks is More Beneficial

If your organization is heavily invested in big data analytics, machine learning, or real-time data processing, Azure Databricks is likely the better choice. Its Spark-based architecture and collaborative notebooks make it a powerful tool for complex analytics tasks. It’s particularly useful for organizations that require a unified platform for data analytics, where multiple teams need to collaborate.

Scenarios Where Azure Data Factory is More Beneficial

On the other hand, if your primary need is data integration, especially involving various data sources and ETL processes, Azure Data Factory is the way to go. Its robust data pipeline architecture and strong integration capabilities make it ideal for organizations that need to move and transform large volumes of data reliably.

Making the Informed Choice

Both Azure Databricks and Azure Data Factory are powerful platforms, each with its own set of features, capabilities, and advantages. Your choice between the two will ultimately depend on your specific needs, whether it’s complex data analytics and machine learning with Azure Databricks or robust data integration and ETL processes with Azure Data Factory.

Choosing the Right Consulting Partner: A Critical Decision

Selecting the right platform for your data analytics or integration needs is only half the battle. The other half involves choosing the right consulting partner to help you implement and manage these solutions effectively. A trusted consulting partner can offer invaluable expertise, from initial planning and strategy to implementation and ongoing support. Here are some key factors to consider when choosing a consulting partner:

- Expertise: Look for a partner with a proven track record in implementing Azure services, particularly Azure Databricks and Azure Data Factory.

- Certifications: Certifications in Azure technologies can be a good indicator of a consulting partner’s expertise and commitment to quality.

- Client Testimonials: Past client experiences can provide insights into a consulting partner’s capabilities and reliability.

- Custom Solutions: A good consulting partner should be able to offer customized solutions tailored to your organization’s specific needs.

- Ongoing Support: Post-implementation support is crucial for the long-term success of any project. Make sure your consulting partner offers this.

Kanerika: Your Trusted Partner in Azure Solutions

When it comes to implementing Azure Databricks and Azure Data Factory, Kanerika stands out as a trusted partner. With a strong focus on data analytics and integration solutions, Kanerika brings a wealth of experience and expertise to the table. Here’s why Kanerika could be the right choice for you:

- Deep Expertise: Kanerika has a proven track record in implementing Azure services, ensuring that you’re in capable hands.

- Certified Professionals: The team at Kanerika holds multiple Azure certifications, underscoring their commitment to delivering quality solutions.

- Client-Centric Approach: Kanerika prides itself on its ability to offer customized solutions, tailored to meet the unique needs of each client.

- End-to-End Support: From initial planning to ongoing support, Kanerika offers comprehensive services to ensure the success of your Azure projects.

Choosing Kanerika as your consulting partner means opting for quality, reliability, and a deep understanding of Azure services. With Kanerika, you’re not just getting a service provider; you’re gaining a partner committed to the success of your data projects.

Don’t leave your data strategy to chance. Leverage the expertise and experience that Kanerika offers by scheduling a free consultation today. In this session, you’ll have the opportunity to discuss your specific needs, challenges, and goals. Kanerika’s team of certified professionals will provide insights and recommendations tailored to your organization, helping you make an informed decision about your Azure implementation.

FAQs