Artificial intelligence is advancing rapidly, propelled by powerful language models and innovative techniques like Retrieval-Augmented Generation (RAG). Large Language Models (LLMs) are sophisticated AI systems trained on extensive text datasets to understand and generate human-like language. RAG enhances these models by integrating external knowledge sources, enabling real-time retrieval of relevant information to improve the accuracy and depth of their responses.

According to recent industry forecasts, the global AI market is expected to exceed $190 billion by 2025, with demand for technologies such as LLMs and RAG growing rapidly. “The future of AI isn’t about choosing between technologies, but understanding how they complement each other,” says Dr. Elena Rodriguez, AI Research Director at TechInnovate. This blog explores the key differences between RAG and LLMs to help businesses make informed decisions about which approach best aligns with their specific needs.

Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) is an AI architecture that enhances large language models (LLMs) by combining their generative capabilities with information retrieval systems. Unlike standard LLMs that rely solely on their pre-trained knowledge, RAG systems dynamically access and incorporate external knowledge sources during the generation process.

How it Works

RAG operates through a two-stage process. First, a retrieval component searches through a knowledge base (which can include documents, databases, or other structured information) to find content relevant to the user’s query. Then, a generation component (typically an LLM) uses both the query and the retrieved information to produce a comprehensive response. This approach grounds the model’s output in specific, relevant information rather than relying exclusively on its parametric knowledge.
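To make the two stages concrete, here is a minimal sketch in Python. It assumes a hypothetical three-document knowledge base, a toy word-overlap retriever in place of embedding search, and a placeholder `generate` function standing in for a real LLM call; none of these names come from a particular library.

```python
import re

# Hypothetical in-memory knowledge base; a real system would use a document store.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days of approval.",
    "Premium support is available 24/7 via live chat.",
    "Passwords must be at least 12 characters long.",
]

def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for real text processing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Stage 1 (retrieval): rank documents by word overlap with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Combine the retrieved passages and the user query into one prompt."""
    ctx = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{ctx}\n\nQuestion: {query}"

def generate(prompt: str) -> str:
    """Stage 2 (generation) placeholder: a real system would send `prompt` to an LLM."""
    return f"[LLM response grounded in]\n{prompt}"

def rag_answer(query: str) -> str:
    context = retrieve(query, KNOWLEDGE_BASE, k=1)
    return generate(build_prompt(query, context))
```

Calling `rag_answer("How many days until refunds are processed?")` selects the refund policy sentence and passes it to the generator together with the question, which is the grounding step described above.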

Key Benefits and Uses

RAG offers several significant advantages over standalone LLMs. It can substantially reduce hallucinations by anchoring responses to retrieved source material, making AI systems more reliable for critical applications. It also gives models access to information published after their training cutoff, mitigating the problem of knowledge obsolescence.

RAG also improves accuracy on domain-specific tasks by incorporating specialized knowledge bases. Additionally, it enhances transparency, as organizations can trace responses back to source documents, providing greater auditability and trust in AI-generated content.

Retrieval-Augmented Generation (RAG) System Components

1. Document Ingestion Layer

The document ingestion process prepares source documents for analysis by collecting materials from various formats. It involves parsing different file types, extracting meaningful content, cleaning text, and breaking down large documents into manageable chunks that can be effectively analyzed and retrieved.
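The chunking step can be sketched as follows. This assumes plain text that has already been extracted from its source file and splits on words with a fixed overlap; the `chunk_size` and `overlap` defaults are illustrative, and many real pipelines chunk by model tokens or by document structure instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words, so context that straddles a
    chunk boundary is not lost entirely.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()  # splitting on whitespace also collapses runs of spaces and newlines
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the document
    return chunks
```

For example, ten words with `chunk_size=4` and `overlap=1` yield three chunks, each starting on the last word of the previous one.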

2. Embedding Model

Embedding models transform textual information into dense numerical vector representations that capture semantic meaning. These models convert text chunks into high-dimensional vectors, enabling precise similarity comparisons and preserving the underlying contextual relationships.
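A learned embedding model cannot be reproduced in a few lines, but the input/output contract can. The sketch below is a toy stand-in, assuming feature hashing of words into a fixed number of buckets followed by L2 normalization; it captures word overlap only, not the semantic similarity a trained model provides.

```python
import hashlib
import math
import re

def embed(text: str, dim: int = 64) -> list[float]:
    """Toy embedding: hash each word into one of `dim` buckets, then L2-normalize.

    This is a stand-in for a learned embedding model, not a replacement for one.
    """
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # md5 gives the same bucket on every run (built-in hash() is salted per process)
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec
```

Normalizing to unit length means a plain dot product between two such vectors equals their cosine similarity, which simplifies the retrieval step.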

3. Vector Database

Vector databases are specialized storage systems designed to handle vector embeddings efficiently. They index and store vector representations, allowing rapid semantic search and nearest neighbor comparisons across large document collections.
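The core operations of a vector database (store vectors with IDs, return nearest neighbors) can be sketched with a hypothetical in-memory class. It performs an exact linear scan, whereas production vector databases typically use approximate nearest-neighbor indexes such as HNSW to stay fast over millions of vectors.

```python
import heapq
import math

class InMemoryVectorStore:
    """Hypothetical minimal vector store with exact cosine-similarity search."""

    def __init__(self) -> None:
        self._ids: list[str] = []
        self._vectors: list[list[float]] = []
        self._texts: list[str] = []

    @staticmethod
    def _normalize(vec: list[float]) -> list[float]:
        norm = math.sqrt(sum(v * v for v in vec))
        if norm == 0:
            raise ValueError("cannot index or query a zero vector")
        return [v / norm for v in vec]

    def add(self, doc_id: str, vector: list[float], text: str) -> None:
        """Store a unit-length copy of the vector alongside its ID and text."""
        self._ids.append(doc_id)
        self._vectors.append(self._normalize(vector))
        self._texts.append(text)

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float, str]]:
        """Return (id, score, text) for the k most similar stored vectors."""
        q = self._normalize(query)
        # On unit vectors the dot product equals cosine similarity.
        scored = ((sum(a * b for a, b in zip(q, v)), i) for i, v in enumerate(self._vectors))
        return [(self._ids[i], score, self._texts[i]) for score, i in heapq.nlargest(k, scored)]
```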

4. Retrieval Mechanism

The retrieval mechanism uses a similarity measure such as cosine similarity to find the most relevant document chunks. It compares the query's vector representation with the stored document vectors, then ranks and selects the most contextually appropriate segments.
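Cosine similarity is the dot product of two vectors divided by the product of their lengths, so it measures direction rather than magnitude. A short sketch of the measure and of top-k ranking follows; the function names are illustrative, not taken from any library.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(a, b) = (a . b) / (|a| |b|); 1.0 means same direction, 0.0 means orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as unrelated to everything
    return dot / (norm_a * norm_b)

def rank_chunks(query_vec: list[float], chunk_vecs: list[list[float]], k: int = 2) -> list[int]:
    """Return the indices of the k chunks most similar to the query, best first."""
    scores = [cosine_similarity(query_vec, v) for v in chunk_vecs]
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
```

For instance, `[3, 4]` and `[4, 3]` have similarity 24 / 25 = 0.96, while `[1, 2]` and `[2, 4]` score 1.0 because they point in the same direction despite different lengths.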

5. Prompt Engineering Module

Prompt engineering bridges retrieved information with language model response generation. This module constructs comprehensive prompts by integrating the original query, retrieved documents, and necessary metadata.
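A prompt-assembly step might look like the sketch below. The `RetrievedChunk` type, the instruction wording, and the numbered-citation format are assumptions chosen for illustration; real systems tune these to the model they call.

```python
from dataclasses import dataclass

@dataclass
class RetrievedChunk:
    text: str
    source: str   # hypothetical metadata: file name or URL of the originating document
    score: float  # retrieval similarity score

def build_rag_prompt(query: str, chunks: list[RetrievedChunk], max_chunks: int = 3) -> str:
    """Assemble instructions, numbered source passages, and the user question."""
    best = sorted(chunks, key=lambda c: c.score, reverse=True)[:max_chunks]
    context = "\n\n".join(
        f"[{n}] (source: {c.source})\n{c.text}" for n, c in enumerate(best, start=1)
    )
    return (
        "Answer the question using only the numbered sources below, cite them "
        "like [1], and say \"I don't know\" if they do not contain the answer.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )
```

Numbering the passages and keeping their source metadata in the prompt is what later lets the answer be traced back to specific documents.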

What is LLM?

LLMs, or large language models, are a type of artificial intelligence designed to process and generate human language. They are typically very deep neural networks trained on vast amounts of text; well-known examples include GPT-3 and GPT-4. LLMs can read and write language in a way that resembles human language comprehension, which enables a broad range of applications.

How It Works

LLMs learn from large datasets containing billions of words. During training, these models learn to recognize patterns in language, such as syntax, context, and meaning. Built with deep learning methods, they perform a long series of computations that let them predict the next token in a sequence, produce coherent sentences, and respond to requests in a relevant way.

Because they are trained to predict what comes next, LLMs generate text one token at a time, with each new token conditioned on the text that precedes it.
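The next-token loop can be illustrated with a deliberately tiny stand-in. The sketch below assumes a word-level bigram counter instead of a neural network (real LLMs use transformer networks over subword tokens), so it shows only the idea of greedy, autoregressive decoding, not how an LLM actually computes its predictions.

```python
from collections import Counter, defaultdict

def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = corpus.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate_greedy(model: dict[str, Counter], start: str, max_new_tokens: int = 5) -> str:
    """Greedy decoding: repeatedly append the most frequent next word."""
    out = [start.lower()]
    for _ in range(max_new_tokens):
        candidates = model.get(out[-1])
        if not candidates:
            break  # no continuation was seen during training
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)
```

Trained on "the cat sat on the mat the cat sat down", the model continues "on" as "on the cat", because "cat" follows "the" twice and "mat" only once.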

Key Benefits and Uses

LLMs excel in tasks involving natural language understanding and generation. They are commonly used in chatbots, content creation, and summarization. They can generate high-quality text, simulate conversations, and provide personalized recommendations. LLMs are also utilized in customer support, creative writing, coding assistance, and many other domains where human-like text generation is valuable. Their ability to process and predict language has made them one of the most powerful tools in AI development.

Top 5 LLMs Making Impact Across Industries

1. OpenAI’s GPT-4o

An advanced language model with improved context handling and reasoning capabilities. Offers more precise instruction-following and expanded knowledge base. Supports larger context windows and demonstrates enhanced performance across various applications.

2. Anthropic’s Claude 3.7 Sonnet

At the time of writing, Anthropic's newest Claude model, featuring superior analytical skills and nuanced understanding. Provides advanced multimodal capabilities with improved reasoning and efficiency. Represents a significant leap in conversational AI and complex task resolution.

3. Meta’s Llama 3.3

An openly available (open-weight) language model with enhanced multilingual support and improved reasoning abilities. Offers robust performance across research and practical domains. Provides increased safety features and more flexible implementation options.

4. Google’s Gemini

Google’s cutting-edge multimodal language model with advanced reasoning capabilities. Demonstrates strong performance in scientific reasoning, cross-linguistic understanding, and complex problem-solving. Represents a significant advancement in AI technology.

5. Mistral’s Mixtral 8x7B

A powerful open-weight mixture-of-experts model known for its efficiency and competitive performance. For each token, Mixtral 8x7B's router selects two of its eight expert feed-forward networks, so only a fraction of its parameters is active during inference, enabling high-quality results with reduced computational cost. It is gaining traction for its balance of performance, transparency, and open-access deployment across enterprise and research settings.
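The routing idea can be sketched for a single scalar input. This is a toy under stated assumptions: the router logits are passed in directly (in a real model they come from a learned linear layer applied to the token's hidden state), and each "expert" is a plain function rather than a feed-forward network. It keeps the top-2 logits, softmaxes over just those, and evaluates only the chosen experts.

```python
import math
from typing import Callable

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(
    x: float,
    router_logits: list[float],
    experts: list[Callable[[float], float]],
    top_k: int = 2,
) -> float:
    """Route one input to the top_k experts and mix their outputs.

    Unselected experts are never called, which is where the compute savings come from.
    """
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:top_k]
    weights = softmax([router_logits[i] for i in top])  # renormalize over the chosen experts only
    return sum(w * experts[i](x) for w, i in zip(weights, top))
```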

RAG vs LLM: Key Differences

| Aspect | RAG (Retrieval-Augmented Generation) | LLM (Large Language Models) |
|---|---|---|
| Definition | Combines generative models with external data retrieval to enhance response quality. | Trained on massive datasets to understand and generate human-like text. |
| Primary Function | Integrates real-time data retrieval into the generative process to provide specific and accurate answers. | Generates text based on patterns learned from data without external information retrieval. |
| Data Usage | Uses external databases, knowledge sources, or APIs to improve response accuracy. | Uses pre-existing data learned during training to generate responses. |
| Flexibility in Responses | Can respond based on up-to-date or specialized information retrieved during the query. | Responses are based on pre-trained data, without real-time information. |
| Accuracy | More accurate in niche or domain-specific queries as it retrieves information from external sources. | Accurate in general language tasks but may struggle with domain-specific information. |
| Performance with Long Contexts | Performs well in tasks requiring specific or detailed context due to the retrieval mechanism. | Can generate text fluently but may lose accuracy or context in complex, long conversations. |
| Task Specialization | Excels in tasks requiring knowledge outside of pre-trained models, such as detailed question answering. | Suitable for tasks like writing, summarizing, and general conversation but not as specific as RAG. |
| External Dependency | Dependent on access to external data sources for improved output. | Operates independently of external data sources after training. |
| Use Case | Best for applications like customer support, legal research, and medical queries, where accuracy is crucial. | Ideal for general NLP tasks, creative writing, and content generation. |
| Response Generation | Generates responses based on both pre-trained data and real-time data retrieval. | Generates responses only from pre-trained data, lacking real-time awareness. |

RAG vs. LLM: A Comprehensive Breakdown of Key Differences

1. Primary Function

- RAG:

Its core function is to improve the relevance and accuracy of generated responses by augmenting the generation process with retrieved content. This is especially valuable when the query pertains to recent events, specialized knowledge, or uncommon topics not covered in the model’s training data.

- LLM:

Primarily focused on generating human-like text based on what it has learned during training. It excels at general understanding and language tasks but cannot reference new or unseen data unless retrained or fine-tuned.

2. Data Usage

- RAG:

Actively uses external sources of information, such as search indexes, APIs, or document repositories. This allows it to deliver current information on demand, provided those sources are accurate and kept up to date.

- LLM:

Relies entirely on the static data it was trained on. If the training data doesn’t include certain information, the model won’t be able to produce accurate responses about it — particularly for recent events or niche domains.

3. Flexibility in Responses

- RAG:

Offers dynamic response generation because it retrieves relevant content at the time of the query. This enables it to adapt to changes in information or user needs, offering more flexibility in domains such as news, finance, and healthcare.

- LLM:

Has limited flexibility, as it can only generate responses based on what it already knows. While it’s impressive in constructing fluent and logical text, it can’t incorporate new knowledge unless retrained.

4. Accuracy

- RAG:

Generally more accurate in domain-specific or factual queries. Because it grounds each answer in retrieved references, it can reduce hallucinations and factual errors, provided the underlying sources are authoritative.

- LLM:

Performs well in general use cases but may hallucinate or provide outdated/incorrect information in areas where it lacks data coverage or contextual depth.

5. Performance with Long Contexts

- RAG:

Handles long and detailed queries better because it can retrieve context-relevant snippets to base its answers on. This is beneficial in tasks like legal document analysis or research support.

- LLM:

While it can generate long-form responses, maintaining accuracy, coherence, and relevance over long spans of text or conversations can be a challenge, especially without retrieval support.

6. Task Specialization

- RAG:

Ideal for tasks requiring up-to-date or specific information, such as answering questions about newly published research, legal documents, or medical guidelines. Its ability to tap into live data gives it an edge in these areas.

- LLM:

Best suited for general-purpose NLP tasks, such as summarization, paraphrasing, translation, story writing, or chat-based assistance, where real-time data is less critical.

7. External Dependency

- RAG:

Heavily reliant on access to external data sources, such as search engines, databases, or custom knowledge bases. Without access, its performance drops closer to that of a standalone LLM.

- LLM:

Self-contained after training. It doesn’t require any external data connection and can function independently, which is useful in privacy-sensitive or offline environments.

8. Use Case

RAG:

Suited for high-accuracy, domain-specific applications like:

- Customer support with tailored or technical knowledge

- Legal research where citation and detail matter

- Medical applications where up-to-date and reliable data is crucial

LLM:

Great for creative and general tasks like:

- Content creation (blogs, scripts, stories)

- Conversational AI

- General summarization or classification

9. Response Generation

- RAG:

Responses are generated using a fusion of retrieved content and generative modeling, making them more grounded in real-world data. It essentially expands the knowledge horizon of the base LLM.

- LLM:

Generates responses solely based on internalized training data, which can lead to creative but sometimes less factual outputs.

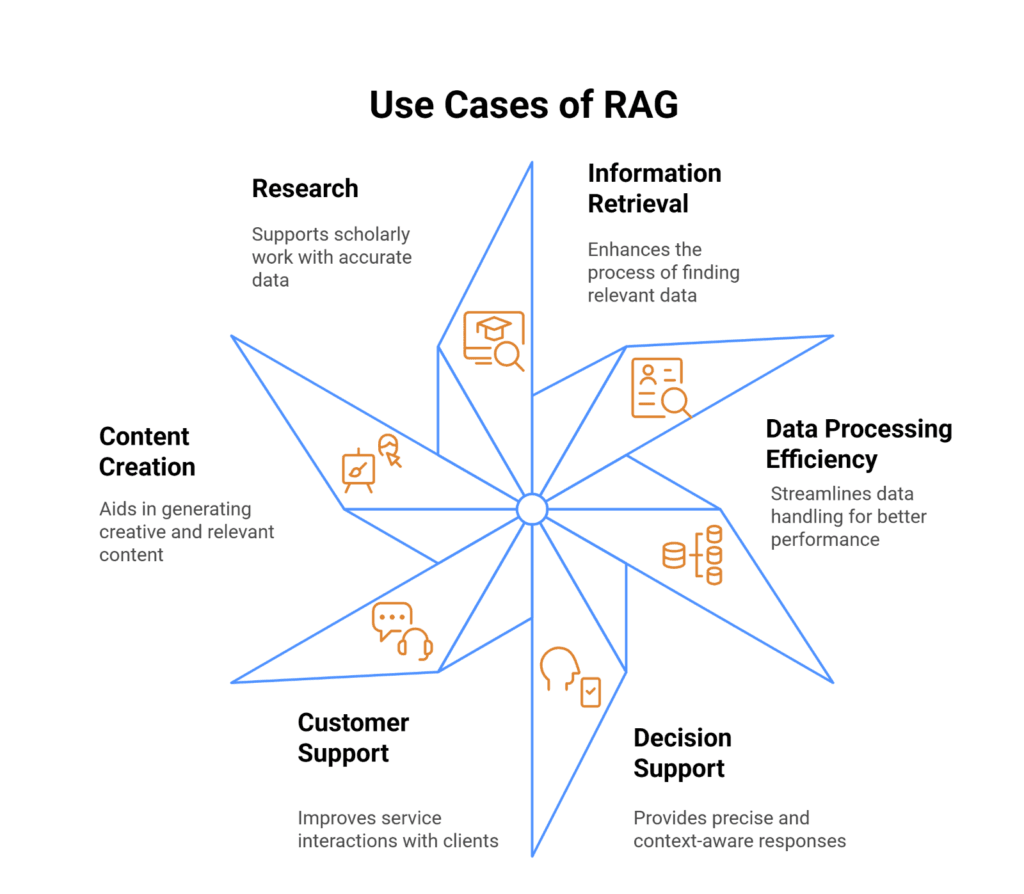

Use Cases for RAG

Retrieval-Augmented Generation (RAG) excels in scenarios demanding precise, context-specific information across various domains.

1. Enterprise Knowledge Management

Enables organizations to create intelligent knowledge bases that provide accurate, contextual responses using internal documentation. Unlike standalone LLMs, RAG systems can reference the latest company-specific documents, ensuring responses align with current organizational policies.

2. Customer Support

Benefits from RAG by retrieving specific product documentation, troubleshooting guides, and previous support interactions. This approach reduces resolution times while maintaining high accuracy across complex product ecosystems.

3. Legal and Compliance

These environments use RAG to navigate regulatory frameworks. By connecting generative models to databases of laws and case precedents, professionals receive nuanced guidance with proper citations and references.

4. Healthcare Applications

Utilizes RAG to maintain medical accuracy. Clinical decision support systems can retrieve information from medical literature and guidelines, assisting healthcare providers with diagnostic recommendations while ensuring traceability to authoritative sources.

5. Research and Development

These teams implement RAG to stay current with scientific literature, enabling researchers to query the latest findings with direct citations to relevant papers.

6. Educational Systems

Uses RAG to create adaptive learning experiences, drawing from textbooks and supplementary materials to provide students with accurate, tailored information.

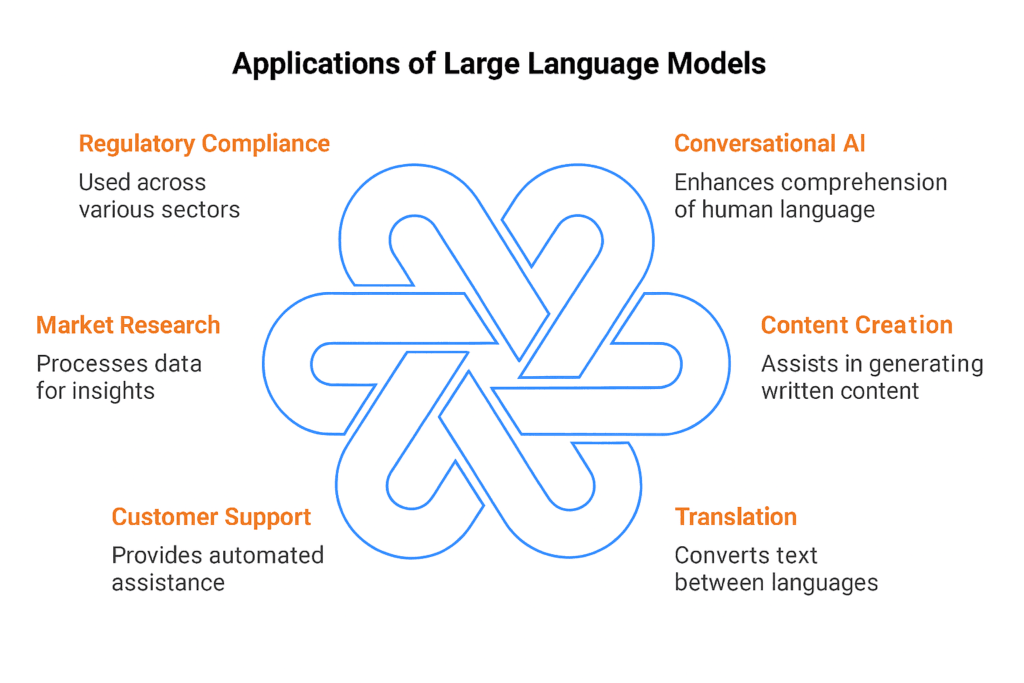

Use Cases for Large Language Models (LLM)

Large Language Models (LLMs) transfer well across domains, changing how organizations communicate and carry out analysis tasks.

1. Content Creation

Allows marketing teams to generate blog posts, social media content, product descriptions, and creative writing. LLMs quickly produce diverse content styles, scaling production while maintaining contextual relevance.

2. Code Generation

LLMs are changing how software is written. They suggest line and block completions as you type, generate boilerplate code, explain unfamiliar languages and code, draft documentation, and help fix bugs, all in one place. Tools such as GitHub Copilot highlight the promise of LLMs in improving the developer experience.

3. Customer Interaction

AI-powered chatbots and virtual assistants are transforming customer interaction. Because LLMs take conversational context into account, these assistants can hold more natural conversations, handle customer queries, and offer relevant suggestions.

4. Language Translation

LLMs can also aid in language translation, enabling more nuanced, context-sensitive translations that consider cultural and linguistic subtleties for many language pairs.

5. Educational Support

LLMs can also generate personalized learning materials, explain difficult concepts, offer interactive tutoring, and develop adaptive learning experiences.

6. Data Analysis

Teams use LLMs to turn complex data into informative narrative reports, extracting key insights and translating technical findings into language that various audiences can understand.

7. Creative Ideation

Enables professionals to leverage LLMs as brainstorming partners, generating original ideas across design, marketing, product development, and more.

RAG vs LLM: Choosing the Right Approach for Your Business

Selecting between Retrieval-Augmented Generation (RAG) and Large Language Models (LLM) requires a strategic assessment of your organization’s specific needs, technological infrastructure, and business objectives.

When to Choose RAG?

Retrieval-Augmented Generation becomes the preferred choice when your business prioritizes:

1. Accuracy and Credibility

RAG systems excel in environments where factual precision is critical. By retrieving information from specific, curated databases, RAG grounds responses in verified sources. This makes it well suited to industries like legal, healthcare, and financial services, where misinformation can have serious consequences.

2. Domain-Specific Knowledge

Organizations with extensive internal documentation or specialized knowledge bases benefit greatly from RAG. The system can draw directly from your organization’s own information, providing context-aware responses that reflect your specific operational nuances.

3. Compliance and Traceability

Regulated industries require not just accurate information but also the ability to trace where that information came from. RAG’s capability to cite sources makes it invaluable in compliance-driven environments where every recommendation must be substantiated.

4. Cost-Effective Customization

Instead of retraining large language models, RAG allows organizations to leverage existing knowledge repositories, making it a more economical approach to creating intelligent information systems.

When to Choose LLM?

Large Language Models become the go-to solution when your business needs:

1. Creative Content Generation

LLMs shine in scenarios requiring original, creative content. Marketing teams, content creators, and design professionals can leverage these models to generate diverse writing styles, brainstorm ideas, and produce engaging narratives quickly.

2. Broad Language Tasks

When you need versatile language processing across multiple domains without deep specialization, LLMs provide remarkable flexibility. They can handle translation, summarization, and communication tasks with impressive breadth.

3. Rapid Prototyping

Startups and innovation-driven organizations can use LLMs to quickly prototype conversational interfaces, generate initial product descriptions, or explore conceptual ideas without significant upfront investment.

4. General-Purpose Communication

Customer service chatbots, interactive assistants, and general communication tools benefit from LLMs’ ability to understand and generate human-like text across various contexts.

Hybrid Approach: Bridging the Gap

Many forward-thinking organizations are exploring hybrid solutions that combine RAG’s retrieval-grounded precision with the open-ended generative capabilities of LLMs. This approach allows businesses to:

- Maintain high accuracy through retrieval

- Leverage the creative potential of generative models

- Create more intelligent, context-aware systems

Decision Framework

Your choice should depend on:

- Specific use case requirements

- Accuracy needs

- Available data infrastructure

- Budget constraints

- Complexity of domain knowledge

The most successful implementation will align technological capabilities with your unique business strategy, operational needs, and long-term objectives.

RAG vs LLM: Implementation Considerations

1. Technical Infrastructure Requirements

RAG systems require more complex infrastructure compared to traditional LLMs. They need specialized vector databases, powerful embedding models, and robust retrieval mechanisms. Organizations must invest in high-performance computing resources capable of semantic search and efficient information retrieval.

2. Data Preparation and Management

RAG implementation involves extensive data preprocessing, including document cleaning, chunking, and embedding generation, in which each chunk is converted into a vector representation that captures its meaning. Standalone LLM deployments rely on pre-trained models and typically need less intensive ongoing data management.

3. Integration with Existing Systems

RAG introduces more complex integration challenges, requiring seamless connections between document repositories, embedding services, vector databases, and language models. Organizations need robust API frameworks and architectural design to ensure smooth data flow and minimal latency.

4. Evaluation Metrics and Performance Monitoring

RAG performance evaluation is more nuanced, measuring retrieval accuracy, chunk relevance, and response coherence. Metrics must capture vector similarity, retrieval precision, and generated response quality. LLM evaluation focuses more on general language understanding and task completion.
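Retrieval quality is commonly scored with standard information-retrieval metrics such as precision@k, recall@k, and reciprocal rank, computed against a labeled set of relevant chunks per query. A sketch follows; the chunk IDs in the usage note are hypothetical, and judging response quality itself usually needs separate human or model-based evaluation.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved chunk IDs that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc in retrieved[:k] if doc in relevant) / k

def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant chunk IDs that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant chunk, or 0.0 if none was retrieved."""
    for rank, doc in enumerate(retrieved, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0
```

If a query retrieves `["c3", "c7", "c1", "c9"]` and the relevant chunks are `{"c1", "c2"}`, precision@3 is 1/3, recall@3 is 1/2, and the reciprocal rank is 1/3. Averaging reciprocal rank over many queries gives mean reciprocal rank (MRR).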

Key Comparative Insights

- RAG provides more contextually grounded responses

- LLMs offer broader generative capabilities

- RAG requires more complex infrastructure

- Both approaches need continuous refinement

Future Trends in RAG and LLM Technologies

The future of artificial intelligence is converging toward more integrated systems in which Retrieval-Augmented Generation (RAG) and Large Language Models (LLMs) dynamically complement each other. Emerging technological advancements are driving sophisticated hybrid models capable of more accurate and contextually intelligent information processing.

Key Emerging Trends

1. Enhanced Contextual Intelligence

Future developments will focus on improving contextual reasoning capabilities. RAG systems will evolve to provide more nuanced, real-time information retrieval, while LLMs will develop advanced reasoning mechanisms to understand complex, multi-dimensional contexts more effectively.

2. Multimodal Capabilities

Researchers are expanding technologies beyond text-based interactions, developing RAG and LLM systems that can seamlessly integrate text, image, audio, and potentially video data. Moreover, this multimodal approach will enable more comprehensive and intuitive AI interactions across diverse domains.

3. Ethical and Transparent AI

Significant research is directed towards developing more transparent, accountable AI systems. Both RAG and LLM technologies will incorporate robust mechanisms for explaining reasoning, reducing bias, and ensuring more reliable AI-generated outputs.

4. Computational Efficiency

Future trends emphasize developing energy-efficient, computationally lightweight models through neural compression, optimized embedding methods, and advanced retrieval algorithms that maintain high-performance capabilities.

Transforming Businesses with Kanerika’s Data-Driven LLM Solutions

Kanerika leverages cutting-edge Large Language Models (LLMs) to tackle complex business challenges with remarkable accuracy. Our AI solutions revolutionize key areas such as demand forecasting, vendor evaluation, and cost optimization by delivering actionable insights and managing context-rich, intricate tasks. Designed to enhance operational efficiency, these models automate repetitive processes and empower businesses with intelligent, data-driven decision-making.

Built with scalability and reliability in mind, our LLM-powered solutions seamlessly adapt to evolving business needs. Whether it’s reducing costs, optimizing supply chains, or improving strategic decisions, Kanerika’s AI models provide impactful outcomes tailored to unique challenges. By enabling businesses to achieve sustainable growth while maintaining cost-effectiveness, we help unlock unparalleled levels of performance and efficiency.

Frequently Asked Questions

What is the difference between a RAG and an LLM?

An LLM is a pre-trained language model that generates responses from its static training data, while RAG (Retrieval-Augmented Generation) combines an LLM with real-time retrieval from external knowledge sources. LLMs rely solely on parameters learned during training, making them prone to outdated information. RAG systems dynamically fetch relevant documents before generating responses, improving accuracy and keeping answers current. This fundamental difference makes RAG ideal for enterprise applications requiring up-to-date, verifiable answers. Kanerika helps organizations implement RAG architectures that deliver precise, contextually relevant AI responses. Connect with our team to explore the right approach for your use case.

Why would you use RAG instead of just an LLM alone?

RAG provides access to current, domain-specific information that standalone LLMs cannot deliver from their frozen training data. When your application requires accurate answers from proprietary documents, regulatory content, or rapidly changing data, RAG retrieval ensures responses reflect the latest information. Standalone LLMs generate plausible-sounding but potentially outdated or fabricated content, creating compliance and trust risks. RAG also reduces hallucination by grounding responses in retrieved evidence, improving reliability for enterprise deployments. Kanerika designs RAG solutions tailored to your knowledge repositories—schedule a consultation to see how retrieval-augmented generation can transform your AI strategy.

Can LLM work without RAG?

Yes, LLMs function independently without RAG and power many applications successfully. Models like GPT-4 and Claude handle general knowledge tasks, creative writing, code generation, and conversational AI using only their pre-trained parameters. However, standalone LLMs cannot access real-time data, proprietary documents, or information beyond their training cutoff. For use cases requiring current facts, company-specific knowledge, or verifiable sourcing, adding RAG significantly improves output quality and reduces hallucination risk. Kanerika evaluates whether your enterprise needs standalone LLM deployment or RAG augmentation—reach out for a technical assessment of your AI requirements.

Can LLMs still hallucinate even with RAG?

Yes, LLMs can still hallucinate with RAG, though the frequency and severity decrease substantially. Hallucinations occur when the retrieval component fails to surface relevant documents, when retrieved content is ambiguous, or when the LLM misinterprets the context. Poor chunking strategies, inadequate embedding models, or low-quality source data also contribute to residual hallucination. Effective RAG implementations require robust retrieval pipelines, citation mechanisms, and confidence scoring to minimize these risks. Kanerika builds production-grade RAG systems with hallucination detection safeguards—contact us to learn how we reduce error rates in enterprise AI deployments.
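One simple safeguard is to abstain when retrieval finds nothing sufficiently similar, rather than letting the model answer from memory. The sketch below assumes retrieval returns `(score, text)` pairs and takes the generator as a parameter; the 0.75 threshold and the fallback message are arbitrary illustrations that would need tuning per embedding model and corpus.

```python
from typing import Callable

FALLBACK = "I couldn't find this in the knowledge base."

def answer_or_abstain(
    query: str,
    hits: list[tuple[float, str]],
    generate: Callable[[str, list[str]], str],
    min_score: float = 0.75,  # illustrative threshold, not a recommended value
) -> str:
    """Call the generator only with passages whose retrieval score clears the threshold."""
    supported = [text for score, text in hits if score >= min_score]
    if not supported:
        return FALLBACK  # abstain instead of letting the model guess
    return generate(query, supported)
```

Filtering weak hits also keeps marginally related passages out of the prompt, one of the sources of residual hallucination mentioned above.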

Why is RAG used in LLM?

RAG is used with LLMs to overcome their knowledge limitations and improve response accuracy. LLMs store information in model weights during training but cannot update this knowledge without costly retraining. RAG solves this by retrieving current, relevant documents at inference time, allowing the LLM to generate answers grounded in external evidence. This approach enables domain-specific expertise, reduces hallucinations, and provides traceable sources for compliance-sensitive industries. RAG also lowers costs compared to fine-tuning for every knowledge update. Kanerika integrates RAG with leading LLM platforms to deliver accurate, auditable AI solutions—let us architect your retrieval pipeline.

Can I use RAG and LLM together?

Absolutely—RAG and LLM are designed to work together as complementary components. The standard RAG architecture pairs a retrieval system with an LLM, where the retriever fetches relevant documents and the LLM generates coherent responses using that context. This combination delivers the fluency of large language models with the accuracy of knowledge retrieval. Most enterprise AI implementations use this hybrid approach, connecting vector databases to models like GPT-4, Claude, or open-source alternatives. Kanerika specializes in building integrated RAG-LLM systems optimized for your data infrastructure—book a discovery call to explore implementation options.

Does LLM learn from RAG?

No, the LLM does not permanently learn or update its weights from RAG-retrieved content. RAG provides contextual information at inference time through the prompt, but this knowledge disappears after each query. The LLM’s parameters remain unchanged—it simply uses retrieved documents as temporary context to inform its response. This distinction separates RAG from fine-tuning, which does modify model weights through additional training. RAG’s advantage is enabling knowledge updates without retraining, while fine-tuning creates persistent behavioral changes. Kanerika helps enterprises choose between RAG, fine-tuning, or hybrid approaches based on your specific learning requirements—connect with our AI team for guidance.

When should I use RAG instead of LLM?

Use RAG instead of a standalone LLM when your application requires current information, proprietary knowledge, or source attribution. RAG excels for enterprise knowledge bases, customer support with product documentation, legal research, healthcare applications, and any scenario where accuracy and verifiability matter more than creative generation. If your data changes frequently or includes confidential documents that cannot be shared with model providers for fine-tuning, RAG keeps that knowledge secure within your infrastructure. Standalone LLMs suffice for general conversation, creative tasks, and code assistance. Kanerika assesses your specific requirements to recommend the optimal architecture—request a free technical evaluation today.

Is LLM better than RAG?

Neither is universally better—LLMs and RAG serve different purposes and often work best together. LLMs excel at general knowledge, reasoning, creative tasks, and code generation where broad training data suffices. RAG outperforms standalone LLMs for domain-specific accuracy, real-time information, proprietary data access, and applications requiring source citations. Comparing them directly misses the point: RAG enhances LLM capabilities rather than replacing them. The right choice depends on your accuracy requirements, data freshness needs, and compliance constraints. Kanerika evaluates your enterprise context to determine whether LLM, RAG, or a combined approach delivers optimal results—schedule a strategy session with our AI architects.

Can LLMs integrate external knowledge like RAG?

LLMs can integrate external knowledge through several methods beyond RAG, including fine-tuning, function calling, and extended context windows. Fine-tuning embeds knowledge into model weights through additional training. Function calling enables LLMs to query APIs and databases dynamically. Long-context models like Claude and Gemini can process extensive documents within single prompts. However, RAG remains the most flexible and cost-effective approach for dynamic knowledge integration, avoiding retraining costs while supporting real-time updates. Each method suits different scenarios based on knowledge volatility and accuracy requirements. Kanerika implements the optimal knowledge integration strategy for your enterprise AI—reach out to discuss your specific architecture needs.

What is the difference between RAG and CAG in LLM?

RAG retrieves external documents at query time, while CAG (Cache-Augmented Generation) preloads relevant knowledge into the model’s extended context before inference and typically precomputes the model’s key-value (KV) cache for that context so it can be reused. RAG performs retrieval for each query, making it suitable for large, frequently updated knowledge bases. CAG loads documents into context once, reducing latency for subsequent queries within the same session but limiting knowledge scope to what fits in the context window. RAG handles broader knowledge domains; CAG offers faster responses for bounded document sets. Both approaches can reduce hallucination compared to a standalone LLM. Kanerika implements both RAG and CAG architectures depending on your latency and knowledge scope requirements. Contact us for architectural guidance.
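The difference in control flow can be sketched in a few lines of Python. This is a toy under stated assumptions: the knowledge base is a hypothetical in-memory list, `retrieve` ranks documents by simple word overlap rather than embeddings, and the KV-cache reuse that gives CAG its speed is left to the model-serving stack, so only the prompt structure is shown.

```python
import re

# Hypothetical, bounded knowledge base.
KNOWLEDGE_BASE = [
    "Refunds are issued within 14 days of receiving a returned item.",
    "Standard shipping takes 3 to 5 business days.",
    "Premium members get free express shipping.",
    "Support is available by chat from 9am to 5pm on weekdays.",
]

def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """RAG-style: score every document against this query (word overlap) and keep the top k."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def rag_prompt(query: str) -> str:
    # Retrieval runs on every query, so the prompt carries only the selected snippets.
    context = "\n".join(retrieve(query, KNOWLEDGE_BASE))
    return f"Context:\n{context}\n\nQuestion: {query}"

# CAG-style: the whole document set is loaded once as a fixed, reusable prefix.
CAG_PREFIX = "Context:\n" + "\n".join(KNOWLEDGE_BASE)

def cag_prompt(query: str) -> str:
    # No retrieval step; every query reuses the same preloaded context.
    return f"{CAG_PREFIX}\n\nQuestion: {query}"

print(rag_prompt("How long does shipping take?"))
```

`rag_prompt` changes with every question, while `cag_prompt` always begins with the same prefix, which is what lets a serving stack reuse cached computation across queries, as long as the whole document set fits in the context window.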

What's the best LLM for RAG?

The best LLM for RAG depends on your accuracy requirements, budget, and deployment constraints. GPT-4 and Claude 3.5 Sonnet deliver top-tier performance for complex reasoning over retrieved content. For cost efficiency, GPT-4o mini and Claude 3 Haiku handle straightforward RAG tasks effectively. Open-weight options like Llama 3, Mistral, and Mixtral enable on-premises deployment with strong RAG performance. Key factors include context window size, instruction-following capability, and latency tolerance. A larger context window leaves room for more retrieved passages per query, although models do not always use very long contexts equally well. Kanerika benchmarks LLM options against your specific RAG use case to identify the optimal model. Request a comparative analysis for your project.
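How much retrieved content actually reaches the model is bounded by its context window. A minimal sketch of packing relevance-ranked chunks into a fixed token budget follows; the word-count token estimate is a deliberate simplification (real tokenizers count differently), and greedy packing is one common strategy, not the only one.

```python
def approx_tokens(text: str) -> int:
    # Simplifying assumption: one token per whitespace-separated word.
    # Real tokenizers often produce more tokens than words.
    return len(text.split())

def pack_context(ranked_chunks: list[str], budget_tokens: int) -> list[str]:
    """Keep chunks in rank order while they fit in the token budget reserved for context."""
    selected: list[str] = []
    used = 0
    for chunk in ranked_chunks:
        cost = approx_tokens(chunk)
        if used + cost > budget_tokens:
            continue  # too big for the remaining space; a shorter, lower-ranked chunk may still fit
        selected.append(chunk)
        used += cost
    return selected

ranked = ["a b c d", "e f g h i j", "k l"]  # toy chunks, already sorted by relevance
print(pack_context(ranked, budget_tokens=7))   # ['a b c d', 'k l']
print(pack_context(ranked, budget_tokens=12))  # ['a b c d', 'e f g h i j', 'k l']
```

Raising `budget_tokens`, which is what a larger context window allows, lets more of the ranked list through; in practice the budget must also leave room for instructions, the question, and the generated answer.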

Are RAG models more computationally expensive than LLMs?

RAG systems add computational overhead beyond standalone LLM inference: generating embeddings for documents and queries, searching the vector index, and fetching documents, plus the extra prompt tokens that retrieved passages add to every request. However, this additional cost is typically modest compared to alternatives like fine-tuning or using larger models with extended context windows. RAG can even reduce total costs by enabling smaller, cheaper LLMs to match the accuracy of larger models on knowledge-intensive tasks. The retrieval pipeline requires vector database infrastructure and embedding-model compute, but approximate nearest-neighbor indexes keep search fast as the document collection grows. Overall total cost of ownership (TCO) depends on query volume, retrieval complexity, and your existing infrastructure. Kanerika optimizes RAG architectures for cost efficiency without sacrificing performance. Let us analyze your computational requirements.
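The vector-search step behind this overhead can be illustrated with an exact, brute-force search in plain Python. The three-dimensional vectors and document IDs are made up for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and vector databases typically replace this linear scan with approximate nearest-neighbor indexes.

```python
import math

# Hypothetical toy index: document ID -> embedding vector.
INDEX: dict[str, list[float]] = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "store_hours": [0.0, 0.2, 0.9],
}

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Exact search: scores every stored vector, so cost grows linearly with index size."""
    scored = sorted(
        ((cosine(query_vec, vec), doc_id) for doc_id, vec in index.items()),
        reverse=True,
    )
    return [doc_id for _, doc_id in scored[:k]]

# In a real pipeline the query vector would come from the same embedding model as the index.
print(top_k([0.8, 0.3, 0.0], INDEX))  # ['refund_policy', 'shipping_times']
```

Each query here costs one similarity computation per stored vector (roughly N × d multiplications for N vectors of dimension d), which is why large deployments rely on approximate indexes that trade a little recall for much less work per query.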

What industries can benefit the most from RAG and LLM?

Healthcare, financial services, legal, insurance, and manufacturing derive exceptional value from RAG-enhanced LLMs. Healthcare benefits from accurate clinical decision support grounded in medical literature and patient records. Financial services firms use RAG for regulatory compliance, research analysis, and customer advisory applications. Law firms use RAG to search case law and contracts with verifiable citations. Insurers accelerate claims processing and underwriting with policy-specific knowledge retrieval. Manufacturers apply RAG to technical documentation, maintenance guides, and quality standards. Any industry with extensive proprietary documentation can gain a competitive advantage from RAG implementations. Kanerika delivers industry-specific RAG solutions across these sectors. Explore how we can transform your knowledge workflows.

Is ChatGPT a RAG LLM?

ChatGPT is primarily an LLM, not a native RAG system, though it incorporates RAG-like features through web browsing and file upload capabilities. The base ChatGPT model generates responses from pre-trained knowledge without external retrieval. When you enable web search or upload documents, ChatGPT performs retrieval to augment its responses—functionally similar to RAG. However, enterprise RAG implementations typically use dedicated vector databases and custom retrieval pipelines rather than ChatGPT’s built-in features, offering greater control over knowledge sources and retrieval quality. Kanerika builds custom RAG systems that integrate with ChatGPT’s API or alternative LLMs for production-grade enterprise applications—discuss your requirements with our team.

Is RAG dead?

RAG is far from dead. It remains essential for enterprise AI despite advances in LLM context windows and reasoning capabilities. While larger context windows reduce the need for RAG in some use cases, they cannot replace retrieval for knowledge bases that exceed context limits, real-time data requirements, or cost-sensitive deployments. Filling a long context window on every request is expensive at scale, whereas RAG can search very large document collections and send only the relevant passages to the model. RAG also provides source attribution and audit trails that are difficult to achieve with a standalone LLM. The technology continues to evolve with advanced techniques like iterative retrieval and agentic RAG. Kanerika implements cutting-edge RAG architectures that leverage the latest retrieval innovations. Connect with us to future-proof your AI strategy.
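Retrieval scales past any context window because the corpus is split into chunks and indexed ahead of time, and only the top-ranked chunks are sent to the model for each query. Below is a minimal sketch of one common preprocessing step, fixed-size chunking with overlap. Counting words rather than tokens, and the default `size` and `overlap` values, are illustrative assumptions; many pipelines split on tokens or on document structure (headings, paragraphs) instead.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks

# Toy document of ten "words" split into 4-word chunks with 1 word of overlap.
doc = " ".join(str(i) for i in range(10))
print(chunk_words(doc, size=4, overlap=1))  # ['0 1 2 3', '3 4 5 6', '6 7 8 9']
```

The overlap means a sentence that straddles a chunk boundary can still be retrieved intact from at least one chunk; each chunk would then be embedded and stored in the vector index.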

Which model is better for customer service applications?

RAG-enhanced LLMs generally outperform standalone LLMs for customer service applications requiring accurate, company-specific responses. Customer support demands precise answers about products, policies, and procedures that general LLMs cannot reliably provide. RAG retrieves current documentation, FAQs, and knowledge base articles to ground responses in accurate information, reducing escalations caused by incorrect AI answers. The approach also enables citation of source materials, building customer trust. Standalone LLMs can handle general conversation but risk hallucinating product details or citing outdated policies. Kanerika deploys RAG-powered customer service solutions that integrate with your existing knowledge systems. Let us demonstrate how retrieval improves support quality.
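Source citation in a support bot depends largely on how the prompt is assembled. A minimal sketch, assuming retrieval has already returned passages tagged with a source ID: passages are numbered in the prompt, and `[n]` markers in the model's answer are mapped back to their sources. The `Passage` class, the prompt wording, and the `kb/...` IDs and texts are hypothetical, and the actual model call is omitted.

```python
import re
from dataclasses import dataclass

@dataclass
class Passage:
    source: str  # hypothetical knowledge-base article ID or URL
    text: str

def build_grounded_prompt(question: str, passages: list[Passage]) -> str:
    """Number each retrieved passage so the model can cite it as [1], [2], ..."""
    lines = [
        "Answer using only the numbered sources below and cite them like [1].",
        "If the sources do not contain the answer, say that you don't know.",
        "",
    ]
    for i, p in enumerate(passages, start=1):
        lines.append(f"[{i}] ({p.source}) {p.text}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)

def cited_sources(answer: str, passages: list[Passage]) -> list[str]:
    """Map [n] markers in a model answer back to source IDs, ignoring out-of-range numbers."""
    numbers = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    return [passages[n - 1].source for n in numbers if 1 <= n <= len(passages)]

# Hypothetical retrieved passages.
passages = [
    Passage("kb/returns-policy", "Items can be returned within 30 days of delivery."),
    Passage("kb/shipping", "Standard shipping takes 3 to 5 business days."),
]
prompt = build_grounded_prompt("Can I still return my order?", passages)
print(prompt)
```

Mapping citations back to source IDs lets the support interface link each answer to the articles it relied on, and ignoring out-of-range numbers guards against the model citing a source that was never provided.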

Does ChatGPT use RAG or CAG?

ChatGPT uses RAG-like retrieval when web search is enabled, fetching and processing web content to augment responses with current information. The file upload feature often behaves more like CAG, placing document content in context for the duration of the conversation, although for large files ChatGPT may search for relevant excerpts instead. Base ChatGPT without these features operates as a pure LLM, generating responses solely from pre-trained knowledge. OpenAI has not disclosed detailed architectural specifics, but observable behavior indicates retrieval-augmented approaches for search-enabled queries. Custom GPTs with knowledge files show similar mixed behavior, combining CAG-style context loading with retrieval. Kanerika helps enterprises move beyond consumer ChatGPT features to production RAG systems with full control over retrieval. Explore enterprise-grade implementations with our architects.