Companies that embrace Big Data and Predictive Analytics grow their profits eight times faster than those that don’t. Yet fewer than 30% of organizations effectively use their data to gain a competitive edge. In a world where businesses generate 2.5 quintillion bytes of data daily, failing to harness it means leaving money on the table.

Take Delta Air Lines, for example. By analyzing real-time aircraft sensor data, Delta’s predictive maintenance program reduced maintenance-related cancellations from over 5,600 in 2010 to just 55 in 2018, saving millions in operational costs and significantly improving customer satisfaction.

In this blog, we’ll explore how Big Data and Predictive Analytics are reshaping industries, improving decision-making, and driving business growth. Whether you’re in retail, finance, healthcare, or manufacturing, understanding these tools can give your business a data-driven edge in an increasingly competitive market.

Foundations of Big Data

Big Data forms the backbone of modern analytical systems, offering unprecedented insights by analyzing vast, complex datasets.

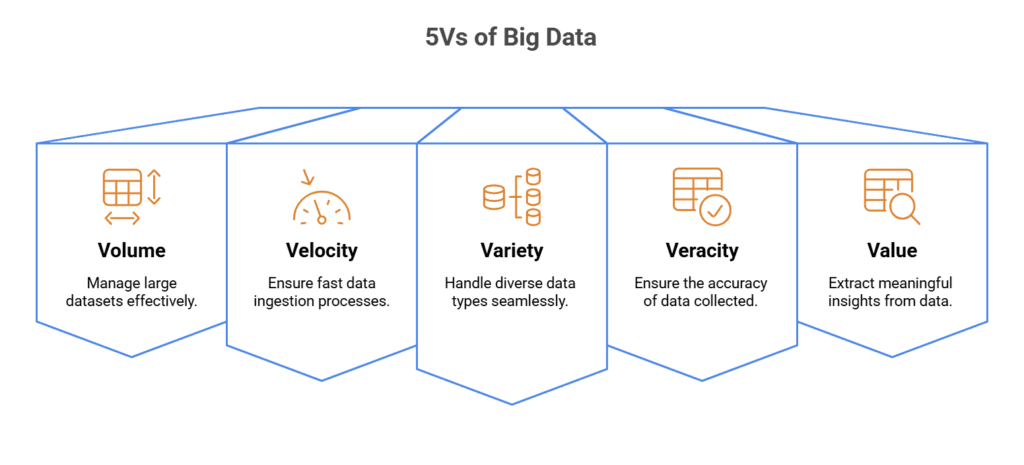

- Volume: The immense amount of data generated every second. We’re talking about terabytes and petabytes of data from myriad sources.

- Velocity: The speed at which new data is generated and moved. Data is created so rapidly that it often has to be processed in real time.

- Variety: You’ll see data coming in all formats – structured data like databases, unstructured data like text, and semi-structured data like XML files.

- Veracity: The quality and accuracy of your data are crucial. It must be clean, precise, and reliable.

- Value: It’s not just about collecting data but extracting meaningful insights with tangible benefits.

What are Data Sources and Types?

Types of Data

- Structured: Traditional database systems are your go-to here, with data neatly stored in tables and rows.

- Unstructured: This includes text, images, videos, and anything that doesn’t fit neatly into a database.

- Semi-structured: Think of emails or XML files, which have some organizational properties but don’t follow a strict database structure.

Sources of Data

- Transactional data: Sales records, invoices, payments, etc.

- Social media data: Tweets, statuses, likes, shares.

- Machine-to-Machine data: Sensors, smart meters, Internet-of-Things devices.

- Biometric data: Fingerprints, genetics, facial recognition.

Big Data Technologies

- Storage: Hadoop Distributed File System (HDFS) and NoSQL databases facilitate the storage of large volumes of data in a distributed manner.

- Processing: Apache Hadoop and Spark enable the processing of Big Data using clusters of computers to handle the immense computing power required.

- Analysis: Tools such as Google BigQuery and Apache Hive allow for the querying and analysis of Big Data to derive insights.

- Visualization: Technologies like Tableau and PowerBI help present Big Data findings in a visually understandable format for better decision-making.

What is Predictive Analytics?

Predictive analytics encompasses a range of statistical techniques and models that analyze current and historical facts to make predictions about future events. Your business can leverage these insights to identify risks and opportunities.

Key aspects of statistics in predictive analytics:

- Hypothesis Testing: Validates whether the patterns you observe in the data are statistically significant.

- Regression Analysis: Quantifies the relationships between variables and how they contribute to your predicted outcome.

Machine Learning Principles

Machine learning, a subset of artificial intelligence, underpins modern predictive analytics. By feeding your systems large datasets, they learn patterns and make decisions with minimal human intervention.

- Training: Your machine learning model learns from historical data.

- Validation: Testing the model’s performance on separate data is crucial.

- Deployment: You deploy your model for real-world predictions after training and validation.

Data Collection and Processing

Practical strategies for collecting and processing data are critical in big data and predictive analytics. These processes lay the groundwork for accurate and reliable predictive models.

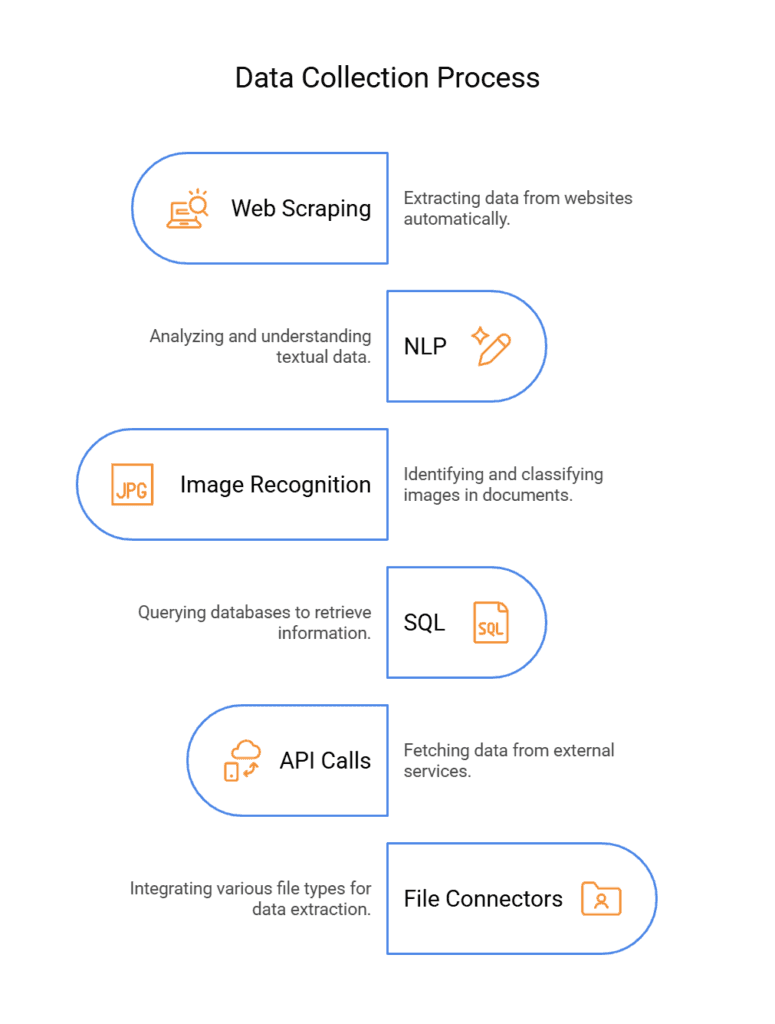

1. Web Scraping From Websites

Extracts structured and unstructured data from websites using automation tools like BeautifulSoup or Scrapy. This helps businesses gather insights from competitors, customer reviews, and market trends.
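As a rough illustration of the extraction step, the sketch below pulls review text out of an HTML fragment. It deliberately uses Python’s built-in `html.parser` instead of BeautifulSoup or Scrapy so that it runs without extra installs; the sample HTML and the `review` class name are assumptions, and a real scraper would first download the page and respect the site’s terms of use.

```python
from html.parser import HTMLParser


class ReviewExtractor(HTMLParser):
    """Collects the text of every <p class="review"> element."""

    def __init__(self):
        super().__init__()
        self.reviews = []
        self._in_review = False

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "p" and ("class", "review") in attrs:
            self._in_review = True
            self.reviews.append("")

    def handle_endtag(self, tag):
        if tag == "p":
            self._in_review = False

    def handle_data(self, data):
        if self._in_review:
            self.reviews[-1] += data


# Hypothetical page fragment used only for this example.
SAMPLE_HTML = """
<div>
  <p class="review">Fast delivery, great price.</p>
  <p class="note">Sponsored</p>
  <p class="review">Battery life could be better.</p>
</div>
"""

parser = ReviewExtractor()
parser.feed(SAMPLE_HTML)
print(parser.reviews)
```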

2. NLP for Textual Data

Uses Natural Language Processing (NLP) to analyze, understand, and process textual data from various sources such as customer reviews, social media, and business documents for sentiment analysis and trend prediction.

3. Image Recognition for Documents

Employs machine learning and AI-based models to extract and interpret text from images and scanned documents. Technologies like OCR (Optical Character Recognition) enable automated document processing and data extraction.

4. SQL for Database Retrieval

Retrieves and manages structured data from relational databases using SQL queries. This enables efficient data processing, reporting, and business intelligence applications.
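Here is a small sketch of SQL-based retrieval using Python’s built-in `sqlite3` module with an in-memory database. The `sales` table and its rows are invented for the example; in practice you would connect to your own database and schema.

```python
import sqlite3

# In-memory database so the example leaves nothing on disk.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales (region, amount) VALUES (?, ?)",
    [("North", 120.0), ("South", 80.0), ("North", 60.0), ("East", 200.0)],
)

# Aggregate revenue per region, highest first.
rows = conn.execute(
    "SELECT region, SUM(amount) AS total "
    "FROM sales GROUP BY region ORDER BY total DESC"
).fetchall()
conn.close()

print(rows)
```

Using `?` placeholders rather than string formatting keeps the inserts safe from SQL injection, which matters as soon as values come from users.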

5. API Calls for Data Fetching

Fetches real-time data from external services, applications, or databases through APIs (Application Programming Interfaces). This allows seamless data integration and automation of workflows.

6. File Connectors for Data Extraction

Facilitates seamless data extraction from different file formats such as CSV, Excel, JSON, and XML. This helps integrate various data sources into analytics and decision-making processes.
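The idea behind file connectors can be sketched in a few lines: read each format with its own loader, then normalize everything into one common shape. The field names (`order_id`, `amount`) and the inline sample data are assumptions made for this example.

```python
import csv
import io
import json

# Inline samples stand in for real files on disk.
CSV_TEXT = "order_id,amount\n1001,25.50\n1002,14.00\n"
JSON_TEXT = '[{"order_id": 1003, "amount": 9.99}]'


def load_csv(text):
    """Parse CSV text into dicts with typed fields."""
    return [
        {"order_id": int(row["order_id"]), "amount": float(row["amount"])}
        for row in csv.DictReader(io.StringIO(text))
    ]


def load_json(text):
    """Parse a JSON array into the same dict shape as load_csv."""
    return [
        {"order_id": int(item["order_id"]), "amount": float(item["amount"])}
        for item in json.loads(text)
    ]


# Both sources now share one schema and can be analyzed together.
orders = load_csv(CSV_TEXT) + load_json(JSON_TEXT)
print(orders)
```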

Data Mining Techniques

You will navigate a variety of data mining techniques to extract information from big data. Some standard methods include:

- Clustering: Grouping a set of objects in such a way that objects in the same cluster are more similar to each other than to those in different clusters.

- Classification: Assigning items to a predefined set of categories.

- Regression: Determining the relationship among variables.

- Association Rule Learning: Discovering interesting relations between variables in large databases.
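To give a feel for clustering, here is a bare-bones k-means sketch in plain Python. The points are made up, and the initialization (simply taking the first `k` points) is a simplifying assumption; library implementations use smarter seeding and stopping rules.

```python
import math


def kmeans(points, k, iterations=20):
    """Group points into k clusters by repeatedly reassigning to the nearest centroid."""
    centroids = [points[i] for i in range(k)]  # naive start: the first k points
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [
            tuple(sum(c) / len(cluster) for c in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical 2-D points forming two visible groups.
points = [(1.0, 1.0), (8.0, 8.0), (1.5, 2.0), (9.0, 9.5), (1.0, 0.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, 2)
print(centroids)
```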

How to Process Large Data Sets

Processing large data sets necessitates robust computing resources and efficient algorithms. You should be:

- Familiar with distributed computing frameworks such as Hadoop and Spark.

- Proficient in parallel processing and in-memory computation to shorten data processing time.
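The split-process-combine pattern behind these frameworks can be shown in miniature. The sketch below computes a mean by summing chunks in a thread pool and merging the partial results; the function names and chunk size are illustrative assumptions. Threads keep the example portable, but for CPU-heavy work in CPython you would typically reach for processes or a cluster framework such as Spark.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(values, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(values), size):
        yield values[start:start + size]


def partial_sum(chunk):
    """Map step: reduce one chunk to a (sum, count) pair."""
    return sum(chunk), len(chunk)


def parallel_mean(values, chunk_size=1000, workers=4):
    """Reduce step: combine the per-chunk results into one overall mean."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(partial_sum, chunked(values, chunk_size)))
    total = sum(s for s, _ in partials)
    count = sum(n for _, n in partials)
    return total / count


# Hypothetical sensor readings.
readings = list(range(1, 10001))
print(parallel_mean(readings))
```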

Data Cleansing and Quality

Maintaining data quality is crucial for accurate analytics. Engage in data cleansing by:

- Identifying and correcting errors and inconsistencies to increase data integrity and quality.

- Applying duplicate detection, data validation, and statistical methods to clean the data set.
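A minimal cleansing pass might look like the sketch below, which normalizes, validates, and de-duplicates customer records. The record layout and the validation rule (a non-empty name and an `@` in the email) are assumptions for illustration; real pipelines usually apply much stricter checks.

```python
def clean_customers(records):
    """Normalize, validate, and de-duplicate customer records by email."""
    seen = set()
    cleaned = []
    for record in records:
        # Normalize: trim whitespace and lowercase the email.
        email = (record.get("email") or "").strip().lower()
        name = (record.get("name") or "").strip()
        # Validate (assumed rule): require a non-empty name and an "@" in the email.
        if not name or "@" not in email:
            continue
        # De-duplicate: keep only the first record seen for each email.
        if email in seen:
            continue
        seen.add(email)
        cleaned.append({"name": name, "email": email})
    return cleaned


# Hypothetical raw input with a duplicate and two invalid rows.
raw = [
    {"name": "Ana Ruiz", "email": "ana@example.com"},
    {"name": "ana ruiz ", "email": " ANA@example.com"},
    {"name": "Bo Chen", "email": "bo.chen.example.com"},
    {"name": "", "email": "ghost@example.com"},
    {"name": "Dee Park", "email": "dee@example.com"},
]
print(clean_customers(raw))
```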

What is Predictive Modeling?

Predictive modeling is a statistical and machine learning technique used to analyze historical data and make future predictions. It involves creating mathematical models that identify patterns and relationships within data to forecast outcomes, such as customer behavior, market trends, or risk assessments. Businesses use predictive modeling in various fields, including finance for fraud detection, healthcare for disease prediction, and marketing for customer segmentation.

Building Predictive Models

To build a predictive model, you first define the problem and then prepare your data. Data collection comes first: you need relevant, high-quality data. The next step is data preprocessing, which means cleaning and transforming the raw data by handling missing values, encoding categorical features, and normalizing or scaling features.

- Data Collection: Gather historical data relevant to the outcome you want to predict.

- Data Preprocessing: Prepare your data for modeling by cleaning and formatting.
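The three preprocessing steps mentioned above (missing values, categorical encoding, scaling) can be sketched in plain Python. The `age` and `plan` columns are hypothetical, and mean imputation with min-max scaling is only one of several reasonable choices.

```python
from statistics import mean

# Hypothetical customer rows; one age is missing.
rows = [
    {"age": 34, "plan": "basic"},
    {"age": None, "plan": "premium"},
    {"age": 52, "plan": "basic"},
    {"age": 28, "plan": "trial"},
]


def preprocess(rows):
    """Return numeric feature vectors plus the category order used for encoding."""
    known_ages = [r["age"] for r in rows if r["age"] is not None]
    fill = mean(known_ages)                  # impute missing ages with the mean
    lo, hi = min(known_ages), max(known_ages)
    plans = sorted({r["plan"] for r in rows})
    features = []
    for r in rows:
        age = fill if r["age"] is None else r["age"]
        scaled = (age - lo) / (hi - lo) if hi > lo else 0.0      # min-max scale to [0, 1]
        one_hot = [1 if r["plan"] == p else 0 for p in plans]   # one-hot encode the plan
        features.append([scaled] + one_hot)
    return features, plans


features, plans = preprocess(rows)
print(plans)
print(features)
```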

Algorithm Selection

Selecting a suitable algorithm is pivotal in predictive modeling. You choose based on the problem type—classification or regression—and consider the data’s characteristics. Simplicity and accuracy are often opposing forces you need to balance. For instance, unlike neural networks, decision trees are easy to interpret but might not work well with complex patterns.

- Classification: Algorithms like Logistic Regression, Support Vector Machine, and Random Forest.

- Regression: Algorithms such as Linear Regression, Ridge Regression, and LASSO.

Model Training and Validation

Once the algorithm is selected, you train your model using a dataset split into training and testing subsets. The training phase involves adjusting the model parameters. Validation follows, requiring you to gauge the model’s performance on unseen data to ensure reliability.

- Training Phase: Fit your model to the training data.

- Validation: Assess model performance using metrics like RMSE for regression and accuracy for classification.

| Phase | Description |
| --- | --- |
| Training | Adjust the model to fit the training data. |
| Validation | Evaluate the model’s performance on testing data. |
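The split and the two metrics named above are short enough to write by hand. This is a sketch with assumed function names and a fixed random seed for repeatability; libraries such as scikit-learn provide production-grade equivalents.

```python
import math
import random


def train_test_split(rows, test_fraction=0.25, seed=42):
    """Shuffle a copy of the rows and split it into training and testing subsets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def rmse(actual, predicted):
    """Root mean squared error, for regression models."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def accuracy(actual, predicted):
    """Share of exactly correct predictions, for classification models."""
    return sum(a == p for a, p in zip(actual, predicted)) / len(actual)


train, test = train_test_split(list(range(20)))
print(len(train), len(test))
```

Lower RMSE is better, while higher accuracy is better, and both should be computed on the testing subset rather than on the data the model was trained on.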

Implementation Strategies

When implementing big data and predictive analytics, it’s imperative to address the seamless integration with existing systems, scalability, and strict data governance to ensure efficiency and compliance.

Integration with Existing Systems

Your current IT infrastructure will require careful consideration to integrate new analytics capabilities. Review existing data formats and ensure compatibility with the latest analytics solutions. Integration should be:

- Seamless: Causes minimal disruption to existing workflows.

- Efficient: Handles increased data processing loads without slowing down.

Scalability Considerations

The system must handle growing amounts of data and concurrent users as demand increases. To achieve this, you must:

- Plan for horizontal scalability (adding more machines) or vertical scalability (adding more power to existing hardware).

- Monitor performance metrics to inform necessary scalability adjustments.

Data Governance

Ensuring data quality, security, and regulatory compliance is non-negotiable. Implement stringent data governance policies:

- Data access controls: Determine who can access what data and under what circumstances.

- Compliance adherence: Regularly update your data practices to align with current laws and regulations.

| Component of Data Governance Framework | Description |
| --- | --- |
| Policies | Rules and guidelines for data management and usage. |
| Dictionaries | Reference guide for data elements, promoting common understanding. |
| Lineage | Captures data journey, ensuring traceability and compliance. |
| Catalog | A comprehensive inventory of data assets, enabling data democratization. |
| Processes | Defines operational aspects, such as stewardship and data quality management. |

Applications of Predictive Analytics

Predictive analytics is increasingly integral across various sectors, directly impacting your decisions and strategies.

1. Financial Services

In financial services, predictive analytics supports credit scoring, a critical tool for evaluating your creditworthiness. Algorithms analyze your past credit history, loan application details, and other financial behaviors to predict future credit risks. It also helps detect potentially fraudulent activity by sifting through transaction data to flag unusual patterns, protecting your accounts from unauthorized access.
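One very simple way to flag unusual transactions is a z-score check against a customer’s past spending, sketched below. The amounts and the threshold of 3 standard deviations are assumptions for illustration; real fraud systems combine many more signals and models.

```python
from statistics import mean, stdev


def flag_unusual(history, new_amounts, threshold=3.0):
    """Return new amounts that sit more than `threshold` standard deviations from the historical mean."""
    mu, sigma = mean(history), stdev(history)
    return [amt for amt in new_amounts if abs(amt - mu) / sigma > threshold]


# Hypothetical card history and incoming transactions.
history = [42.0, 38.5, 51.0, 45.2, 40.1, 47.8, 39.9, 44.3]
new_amounts = [43.0, 980.0, 49.5]

print(flag_unusual(history, new_amounts))
```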

2. Healthcare

Your healthcare providers utilize predictive analytics for patient care improvement by forecasting the likelihood of disease, readmission rates, and potential outcomes of treatments. These predictions support clinical decisions and can lead to personalized care plans. Electronic health records (EHRs) are mined to identify patients at risk of chronic conditions, allowing early intervention, which can save lives and reduce healthcare costs.

3. Retail and E-Commerce

Predictive analytics in the retail and e-commerce sector enables personalized shopping experiences. It can predict purchasing patterns based on your past shopping behavior, demographics, and preferences. This data then informs inventory management, ensuring popular items are well-stocked, and helps tailor marketing efforts, delivering targeted adverts and promotions likely to resonate with your interests.

Predictive Analytics Use Case

Netflix, a leading streaming service, has transformed content recommendation and user experience through the strategic use of predictive analytics. By harnessing the power of user data, Netflix has created a personalized viewing experience, setting a new standard in the entertainment industry.

How?

Netflix collects a wide array of data from its users, including what they watch, search for, and rate, as well as when and on what device they watch. This data is analyzed using sophisticated predictive analytics algorithms. These algorithms identify patterns and preferences in user behavior, allowing Netflix to predict and recommend content that each user is likely to enjoy. The system continuously learns and evolves, refining its recommendations based on new user interactions.
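Netflix’s actual algorithms are proprietary and far more sophisticated, but the general idea of recommending from similar users’ behavior can be illustrated with a toy example. The sketch below uses cosine similarity over made-up ratings; the user names, titles, and scoring rule are all assumptions.

```python
import math

# Hypothetical ratings on a 1-5 scale.
ratings = {
    "ana": {"Drama A": 5, "Thriller B": 4, "Comedy C": 1},
    "bo": {"Drama A": 4, "Thriller B": 5, "Documentary D": 5},
    "cy": {"Comedy C": 5, "Sitcom E": 4},
}


def cosine(u, v):
    """Cosine similarity between two users' rating dictionaries."""
    shared = set(u) & set(v)
    dot = sum(u[t] * v[t] for t in shared)
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0


def recommend(user, ratings):
    """Rank unseen titles by similarity-weighted ratings from other users."""
    scores = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        for title, rating in their.items():
            if title not in ratings[user]:
                scores[title] = scores.get(title, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)


print(recommend("ana", ratings))
```

Because "ana" rates titles much like "bo" does, the title only "bo" has seen ranks ahead of the one only "cy" has seen.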

Impact:

The impact of this approach is significant. Users enjoy a highly personalized experience, often discovering new content tailored to their tastes. This increases user engagement and satisfaction, leading to higher retention rates. For Netflix, it translates into better customer loyalty, reduced churn, and valuable insights for content creation and acquisition strategies. Predictive analytics has thus not only enhanced the user experience but also given Netflix a competitive edge in the crowded streaming market.

What Are the Key Challenges and Factors in Big Data & Predictive Analytics?

In harnessing big data and predictive analytics, you face several key challenges and considerations that can impact the success and integrity of your initiatives.

1. Data Privacy and Security

Your data is vulnerable to unauthorized access and breaches. Implementing robust security measures is crucial. For example:

- Encryption: Protect data at rest and in transit.

- Access management: Ensure only authorized personnel can view or manipulate data.

2. Data Quality & Consistency

Inaccurate, incomplete, or inconsistent data can lead to faulty predictions and unreliable insights. Maintaining high data quality is essential. For example:

- Data cleansing: Regularly identify and remove errors, duplicates, or inconsistencies.

- Standardization: Establish uniform formats, naming conventions, and validation rules across datasets.

3. Ethical Implications

You must use predictive analytics ethically to avoid bias or discrimination. Consider the following:

- Transparency: Be transparent about how data is collected and used.

- Fairness: Regularly test algorithms for bias.
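A basic bias test compares how often a model approves people in different groups. The sketch below computes per-group approval rates and the gap between them; the group labels and decisions are invented, and this single measure (often called demographic parity) is only one of several fairness checks you might run.

```python
def selection_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions for two groups.
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(selection_rates(decisions), parity_gap(decisions))
```

A large gap does not prove discrimination on its own, but it is a signal that the model and its training data deserve a closer look.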

4. Regulatory Compliance

Adhering to laws and regulations like GDPR or CCPA is essential. Ensure:

- Data Protection: Implement procedures for data handling in compliance with regulations.

- Consent Management: Explicitly obtain and manage user consent for data collection and use.

5. Scalability and Infrastructure

Handling vast amounts of data efficiently requires robust infrastructure that can scale with growing needs. Without proper scaling, performance issues can arise. For example:

- Cloud computing: Utilize cloud-based solutions to expand storage and processing power on demand.

- Distributed computing: Implement frameworks like Hadoop or Spark to process large datasets in parallel.

Elevate Your Business with Kanerika’s Advanced Data Analytics Solutions

As a trusted Microsoft partner, Kanerika is committed to transforming your organization’s data strategy with cutting-edge analytics solutions powered by tools like Power BI and Tableau. From real-time data processing to predictive modeling and trend forecasting, our solutions empower industries like finance, healthcare, manufacturing, and retail to optimize performance and reduce risks. We design and implement custom analytics frameworks, scalable data pipelines, and AI-driven forecasting models tailored to each business’s unique needs.

Our team of data scientists and AI experts ensures that companies can make data-driven decisions with confidence by improving data quality, increasing analytical accuracy, and automating key business processes. Whether you need sales forecasting, fraud detection, customer behavior analysis, or supply chain optimization, Kanerika’s solutions provide the insights you need to stay ahead.

Optimize Your Data Strategy with Intelligent Analytics Solutions!

Partner with Kanerika Today.

FAQs

What is predictive analytics in big data?

Predictive analytics in big data uses statistical algorithms, machine learning models, and historical data patterns to forecast future outcomes. By processing massive datasets, organizations can identify trends, anticipate customer behavior, and predict market shifts with higher accuracy than traditional methods. This approach combines data mining techniques with advanced modeling to generate actionable predictions across sales, operations, and risk management. Enterprises leveraging predictive analytics gain competitive advantages through proactive decision-making rather than reactive responses. Kanerika’s AI and ML specialists help organizations build predictive models that transform raw big data into reliable forecasts—schedule a consultation to explore your use case.

What exactly is big data analytics?

Big data analytics is the process of examining large, complex datasets to uncover hidden patterns, correlations, and actionable insights that traditional data processing tools cannot handle. It encompasses techniques like data mining, statistical analysis, and machine learning applied to structured and unstructured data sources. Organizations use big data analytics to optimize operations, personalize customer experiences, detect fraud, and drive strategic decisions. The discipline requires scalable infrastructure, advanced algorithms, and skilled interpretation to deliver meaningful business value from petabytes of information. Kanerika’s data analytics services help enterprises extract intelligence from complex datasets—connect with our team to accelerate your analytics journey.

What is the role of big data analytics and predictive modeling in decision-making and business strategy?

Big data analytics and predictive modeling transform decision-making by replacing intuition with evidence-based insights. Executives can simulate scenarios, forecast demand, and quantify risks before committing resources. Predictive models analyze historical performance alongside real-time data streams to recommend optimal strategies for pricing, inventory, and market expansion. This data-driven approach reduces costly missteps and accelerates response times to market changes. Companies integrating analytics into strategic planning consistently outperform competitors relying on traditional methods. Kanerika partners with enterprises to embed predictive modeling into core business processes—reach out to align your analytics strategy with measurable outcomes.

What is an example of big data analytics?

A practical big data analytics example is retail demand forecasting, where companies analyze millions of transactions, weather patterns, social media sentiment, and economic indicators to predict inventory needs. Walmart processes over 2.5 petabytes daily to optimize stock levels across thousands of stores. Healthcare organizations use similar approaches to predict patient readmission risks by analyzing electronic health records, demographics, and treatment histories. Financial institutions detect fraudulent transactions in real-time by processing billions of data points against behavioral models. Kanerika delivers big data analytics solutions across industries—contact us to see how similar approaches can solve your specific business challenges.

How will big data and predictive analytics change forecasting?

Big data and predictive analytics are revolutionizing forecasting by incorporating diverse data sources, enabling real-time adjustments, and substantially improving accuracy. Traditional forecasting relied on limited historical data and static models, while modern approaches integrate IoT sensor feeds, social signals, and market data continuously. Machine learning algorithms identify non-linear patterns humans miss, reducing forecast errors by 20-50% in many applications. Dynamic models adapt as conditions change, moving forecasting from periodic batch processes to continuous intelligence streams. Kanerika helps enterprises modernize their forecasting capabilities with advanced predictive analytics platforms. Book a discovery session to transform your planning processes.

How is big data related to predictions?

Big data provides the raw material that fuels accurate predictions by supplying sufficient volume, variety, and velocity of information for pattern recognition. Machine learning models require massive training datasets to identify subtle correlations that drive reliable forecasts. Without big data, predictive algorithms lack the statistical significance needed to distinguish genuine signals from noise. The relationship is symbiotic: big data enables predictions, while predictive analytics gives big data its business value. Organizations combining both achieve superior outcomes in customer behavior forecasting, operational optimization, and risk assessment. Kanerika integrates big data infrastructure with predictive capabilities—let us show you how this combination drives measurable results.

What are the four types of big data analytics?

The four types of big data analytics are descriptive, diagnostic, predictive, and prescriptive. Descriptive analytics summarizes historical data to show what happened. Diagnostic analytics examines data to explain why events occurred. Predictive analytics uses statistical models and machine learning to forecast what will happen next. Prescriptive analytics recommends specific actions by simulating outcomes of different decisions. Each type builds upon the previous, creating an analytics maturity continuum from basic reporting to autonomous decision support. Most enterprises benefit from implementing all four types strategically. Kanerika guides organizations through the full analytics spectrum—contact us to assess where your capabilities stand today.

What is prescriptive analytics in big data?

Prescriptive analytics in big data goes beyond prediction to recommend optimal actions based on forecasted outcomes. It combines predictive models with optimization algorithms, business rules, and simulation techniques to suggest specific decisions. For example, prescriptive systems can recommend pricing adjustments, resource allocation, or supply chain modifications that maximize defined objectives while respecting constraints. This represents the most advanced analytics capability, enabling organizations to automate complex decisions at scale. Implementation requires robust data infrastructure, accurate predictive models, and clear business logic to drive meaningful recommendations. Kanerika builds prescriptive analytics solutions that turn predictions into automated actions—explore how this can accelerate your operations.

What are the three types of predictive analysis?

The three primary types of predictive analysis are regression models, classification models, and time series analysis. Regression models predict continuous numerical outcomes like revenue or demand quantities using historical variable relationships. Classification models categorize data into discrete groups, predicting outcomes like customer churn probability or fraud likelihood. Time series analysis forecasts values based on temporal patterns, accounting for seasonality, trends, and cyclical behaviors in sequential data. Each type addresses different prediction challenges, and sophisticated analytics strategies often combine multiple approaches for comprehensive forecasting capabilities. Kanerika’s data science team selects and tunes the right predictive models for your specific business questions—schedule a technical consultation today.

What are the big 3 of big data?

The big 3 of big data refers to the original three V’s: Volume, Velocity, and Variety. Volume describes the massive scale of data generated, often measured in petabytes or exabytes. Velocity captures the speed at which data streams in and requires processing, from batch to real-time. Variety encompasses the diverse data types including structured databases, unstructured text, images, and sensor feeds. These three characteristics distinguish big data from traditional data management and require specialized technologies like distributed computing and scalable storage architectures. Kanerika designs data platforms that handle all three V’s effectively—reach out to modernize your data infrastructure.

What are the 4 V's of big data?

The 4 V’s of big data are Volume, Velocity, Variety, and Veracity. Volume refers to the enormous quantities of data generated daily across digital touchpoints. Velocity describes how rapidly data arrives and must be processed for timely insights. Variety covers the range of data formats from structured databases to unstructured social media and IoT streams. Veracity addresses data quality, accuracy, and trustworthiness—critical for reliable analytics outcomes. Understanding these dimensions helps organizations design appropriate infrastructure and governance frameworks for their big data initiatives. Kanerika ensures your data platform addresses all four V’s with built-in quality and governance—request an architecture assessment.

What is predictive analytics?

Predictive analytics is a branch of advanced analytics that uses historical data, statistical algorithms, and machine learning techniques to forecast future outcomes. It transforms raw data into probability scores and predictions about customer behavior, operational performance, and market trends. Common applications include demand forecasting, risk scoring, predictive maintenance, and customer lifetime value modeling. Unlike descriptive analytics that explains the past, predictive analytics enables proactive decision-making by anticipating what comes next. Organizations across industries use these capabilities to optimize resources, reduce risks, and capture opportunities before competitors. Kanerika builds predictive analytics solutions tailored to enterprise needs—connect with our team to discuss your forecasting goals.

How is analytics related to big data?

Analytics transforms big data from raw information into actionable business intelligence. Without analytics, massive datasets remain inert storage costs rather than strategic assets. The relationship works both ways: big data provides the scale and diversity needed for statistically significant insights, while analytics techniques extract patterns and predictions from that complexity. Modern analytics platforms evolved specifically to handle big data characteristics—distributed processing for volume, stream processing for velocity, and flexible schemas for variety. Together, they enable capabilities impossible with traditional business intelligence approaches. Kanerika integrates analytics capabilities with scalable data platforms—let us help you unlock the value hidden in your data.

Why is big data growing?

Big data is growing exponentially due to digital transformation, IoT proliferation, and expanding digital interactions across every industry. Connected devices now number in the tens of billions, each generating continuous data streams. Social media, e-commerce, and digital services create massive transaction and behavioral datasets. Enterprises increasingly capture granular operational data for optimization purposes. Cloud infrastructure reduced storage costs dramatically, removing barriers to retaining historical data. Additionally, proven ROI from data-driven decision-making motivates organizations to collect more information for competitive advantage. This growth shows no signs of slowing as AI applications demand even larger training datasets. Kanerika helps enterprises scale their data infrastructure alongside this growth—discuss your expansion strategy with our architects.

What is the current state of big data?

The current state of big data reflects maturation from experimental projects to mission-critical enterprise infrastructure. Organizations have moved beyond asking whether to invest in big data toward optimizing existing implementations. Cloud-native data platforms dominate new deployments, with unified architectures replacing fragmented tool stacks. Real-time analytics capabilities are now standard expectations rather than premium features. Data governance and privacy compliance have become central concerns following regulatory developments. AI and machine learning integration represents the primary growth vector, with predictive and prescriptive analytics driving measurable business outcomes across industries. Kanerika stays current with evolving big data technologies and best practices—partner with us to keep your data strategy ahead of the curve.

What is the future of big data?

The future of big data centers on AI integration, edge computing, and automated intelligence at scale. Predictive analytics will become embedded in operational systems rather than separate reporting tools. Edge processing will enable real-time analytics closer to data sources, reducing latency for time-sensitive applications. Generative AI will transform how users interact with data through natural language queries and automated insight generation. Data fabric architectures will unify distributed sources while maintaining governance. Privacy-preserving analytics techniques will enable insights without exposing sensitive information. Organizations investing now in modern data platforms position themselves to leverage these advancements. Kanerika builds future-ready data architectures designed for emerging capabilities—explore how we can prepare your organization for what comes next.

What are the 4 pillars of big data?

The 4 pillars of big data are data collection, data storage, data processing, and data analysis. Collection encompasses capturing information from diverse sources including databases, sensors, applications, and external feeds. Storage involves scalable infrastructure capable of handling petabyte-scale datasets economically. Processing transforms raw data through cleansing, integration, and preparation for analytical consumption. Analysis applies statistical, machine learning, and visualization techniques to generate insights and predictions. Each pillar requires specialized technologies and expertise, and weakness in any area undermines the entire analytics capability. Strong governance spans all four pillars to ensure quality and compliance. Kanerika strengthens all four pillars with integrated data platform solutions—request an assessment of your current capabilities.

What is the prediction error in big data?

Prediction error in big data measures the difference between forecasted values and actual outcomes, quantifying model accuracy. Common metrics include mean absolute error, root mean squared error, and mean absolute percentage error depending on the application. Prediction errors arise from multiple sources: insufficient training data, overfitting to historical patterns, missing variables, data quality issues, and fundamental unpredictability in complex systems. Minimizing prediction error requires careful feature engineering, appropriate algorithm selection, cross-validation techniques, and continuous model monitoring against actual results. Understanding error sources helps organizations set realistic expectations and improve models iteratively. Kanerika implements robust model validation frameworks to minimize prediction error in production analytics—talk to our data science team about improving your forecast accuracy.