Data extraction is crucial for businesses that need to gather valuable information from numerous, often unstructured, sources such as websites, documents, or customer databases. Data extraction tools can retrieve essential data efficiently, saving time and resources. The primary benefits include improved decision-making, increased revenue, and reduced costs, along with a better ability to meet high customer expectations.

Implementing data extraction services in your organization allows for better business decisions, enhanced productivity, and valuable industry insights. Data extraction is crucial when dealing with substantial volumes of diverse data stored in various locations. With this information, you can analyze customer behavior, develop buyer personas, and refine your products and customer service, ultimately promoting growth within your organization.

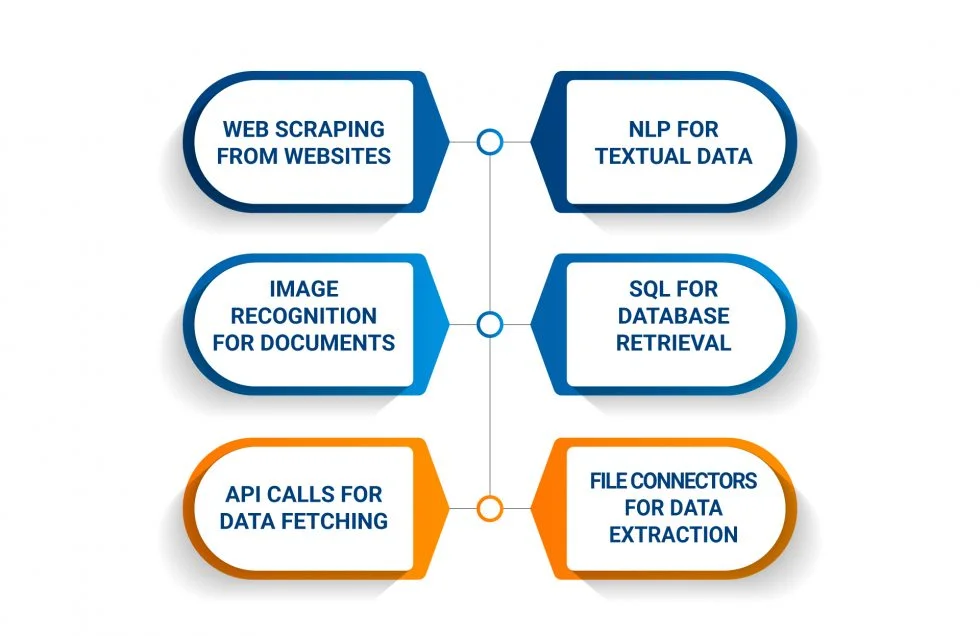

Types of Data Extraction

When it comes to data extraction, various techniques can be used depending on the type of data being extracted. Here are some of the most common types of data extraction:

- Web Scraping: This involves extracting data from websites by scraping the HTML code. Web scraping can be done manually or with the help of automated tools.

- Text Extraction: This involves extracting data from unstructured text sources such as emails, social media posts, and news articles. Text extraction can be done using natural language processing (NLP) techniques.

- Image Extraction: This involves extracting data from images using image recognition technology. Image extraction can be used to extract data from scanned documents, receipts, and invoices. You can also use that technology to convert images to text.

- Database Extraction: This involves extracting data from SQL and NoSQL databases. Database extraction can be done using SQL queries or with the help of database connectors.

- API Extraction: This involves extracting data from application programming interfaces (APIs). API extraction can be done using API calls or with the help of API connectors.

- File Extraction: This involves extracting data from various files such as PDFs, Excel spreadsheets, and CSV files. File extraction can be done using specialized software or with the help of file connectors. For instance, tools that convert PDF to Google Sheets allow users to extract tabular data from a PDF directly into a spreadsheet without any manual entry.

Each type of data extraction has its advantages and disadvantages. The choice of technique depends on the type of data being extracted and the project’s specific requirements.
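
As a rough illustration of the web scraping technique listed above, the sketch below parses product names and prices out of an HTML snippet using only Python's standard library. The HTML, the `name` and `price` class names, and the `ProductParser` class are assumptions made up for this example; a real page would need its own selectors and a step to download the HTML first.

```python
from html.parser import HTMLParser

# Hypothetical HTML snippet standing in for a downloaded product page.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Desk Lamp</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">Office Chair</span><span class="price">$129.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> elements."""

    def __init__(self):
        super().__init__()
        self.products = []   # finished {"name": ..., "price": ...} records
        self._field = None   # field currently being read, if any
        self._current = {}   # record being assembled

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that sits inside one of the spans we care about.
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span" and self._field:
            self._field = None
            # A record is complete once both fields have been seen.
            if "name" in self._current and "price" in self._current:
                self._current["price"] = float(self._current["price"].lstrip("$"))
                self.products.append(self._current)
                self._current = {}


parser = ProductParser()
parser.feed(SAMPLE_HTML)
products = parser.products
print(products)
```

The same structure (find the element, read its text, convert it to a typed value) carries over to dedicated scraping libraries, which mainly add more convenient selectors.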

The Data Extraction Process

Data extraction involves collecting or retrieving disparate types of data from various sources, many of which may be poorly organized or unstructured. This process enables the consolidation, processing, and refining of data for storage in a centralized location, preparing it for transformation.

The process of data extraction involves the following steps:

- Identifying the data sources: The first step in the data extraction process is to identify the data sources. These sources can be internal or external to the organization. Examples of internal sources include databases, spreadsheets, and files, while external sources may include social media platforms, websites, and online directories.

- Extracting the data: Once the sources are identified, use the tools and techniques suited to the type and source of the data. Methods range from AI web scraping tools and APIs to specialized data extraction software.

- Cleaning the data: Remove duplicates, correct errors, and standardize the data post-extraction to facilitate easy analysis.

- Transforming the data: Convert the cleaned data into an easily analyzable format, such as a spreadsheet or database, in preparation for analysis.

- Loading the data: The final step in the data extraction process is to load the data into a centralized location, such as a data warehouse or a data lake. This makes it easy to access and analyze the data.
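
The steps above can be sketched as a small pipeline. This is a minimal sketch, not a production design: the CSV content, the `customers` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the data warehouse or data lake.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a file, API, or scraper.
RAW_CSV = """email,name,signup_date
ANA@EXAMPLE.COM , Ana Silva ,2024-01-05
ana@example.com,Ana Silva,2024-01-05
bo@example.com,Bo Chen,2024-02-11
,Missing Email,2024-03-01
"""


def extract(raw_text):
    """Extract: read rows from the CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def clean(rows):
    """Clean: trim whitespace, standardize email case, drop blanks and duplicates."""
    seen, cleaned = set(), []
    for row in rows:
        email = row["email"].strip().lower()
        if not email or email in seen:
            continue
        seen.add(email)
        cleaned.append({
            "email": email,
            "name": row["name"].strip(),
            "signup_date": row["signup_date"].strip(),
        })
    return cleaned


def transform(rows):
    """Transform: reshape records into tuples matching the target table."""
    return [(r["email"], r["name"], r["signup_date"]) for r in rows]


def load(records, connection):
    """Load: write the records into a central table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(email TEXT PRIMARY KEY, name TEXT, signup_date TEXT)"
    )
    connection.executemany("INSERT OR REPLACE INTO customers VALUES (?, ?, ?)", records)
    connection.commit()


connection = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
load(transform(clean(extract(RAW_CSV))), connection)
loaded = connection.execute("SELECT email, name FROM customers ORDER BY email").fetchall()
print(loaded)
```

Keeping each step as its own function makes it easier to swap the source (for example, an API instead of a CSV export) without touching the cleaning or loading logic.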

Overall, the data extraction process is an essential part of any data-driven organization. By collecting and consolidating data from various sources, organizations can gain valuable insights, make informed decisions, and drive efficiency within all workflows.

Data Extraction Examples and Use Cases

Data extraction is a powerful tool that can help businesses gather valuable information from various sources. Here are some examples of how data extraction can be used:

- Lead Generation: Many businesses use data extraction to gather contact information from potential customers. For example, a real estate company might extract contact information from online property listings to create a database of potential clients. This can help them reach out to these potential clients with their services in the future.

- Price Monitoring: Data extraction can also be used for price monitoring. For example, an e-commerce website might use data extraction to monitor the prices of its competitors’ products. This can help them adjust their prices to remain competitive in the market.

- Social Media Analysis: Data extraction can be used to gather information from social media platforms. For example, a business might use data extraction to gather information about their brand’s mentions on Twitter. This can help them understand how their audience perceives their brand and adjust their marketing strategy accordingly.

- Financial Analysis: Data extraction can gather financial data from various sources. For example, a business might use data extraction to gather financial data from their competitors’ annual reports. This can help them understand their competitors’ financial performance and adjust their strategy accordingly.

- Web Scraping: Businesses can scrape product information from online marketplaces to learn about their competitors’ products and adjust their own offerings accordingly. Because sites like Amazon and eBay change their HTML often, some teams use a web scraping API that adapts to layout changes, and many route their scrapers through a dedicated proxy to keep requests reliable and avoid getting blocked.

Utilizing data extraction in these various ways enables businesses to obtain valuable intelligence from numerous sources. This information can provide critical insights into the market, competition, and customer base, ultimately allowing you to make well-informed decisions and maintain an edge over your rivals.

How Data is Extracted: Structured & Unstructured Data

Data extraction is the process of retrieving data from various sources, including structured and unstructured data. Structured data is organized and stored in a specific format, such as a database or spreadsheet. On the other hand, unstructured data is not organized in a predefined manner and can be found in sources such as PDFs, emails, and social media.

Structured Data Extraction

Extracting structured data is relatively straightforward since the data is stored in a specific format. The process involves identifying the data fields and extracting the relevant information. Common methods of extracting structured data include:

- Using SQL queries to retrieve data from databases

- Using APIs to extract data from web applications

- Using web scraping tools to extract data from websites

- Using ETL (Extract, Transform, Load) tools to extract data from various sources and transform it into a structured format
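
To make the first method concrete, here is a minimal sketch of SQL-based extraction using Python's built-in `sqlite3` module. The `customers` and `orders` tables and their contents are invented for illustration; the same `SELECT ... JOIN ... WHERE` pattern applies to other relational databases through their own drivers.

```python
import sqlite3

# In-memory database with two hypothetical tables, standing in for a real source system.
connection = sqlite3.connect(":memory:")
connection.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, country TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
    INSERT INTO customers VALUES (1, 'Ana Silva', 'BR'), (2, 'Bo Chen', 'SG');
    INSERT INTO orders VALUES (10, 1, 40.0), (11, 1, 60.0), (12, 2, 15.5);
""")

# Identify the fields to extract, join the related tables, and filter with a parameter.
query = """
    SELECT c.name, COUNT(o.id) AS order_count, SUM(o.total) AS revenue
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.id
    WHERE c.country = ?
    GROUP BY c.id, c.name
"""
rows = connection.execute(query, ("BR",)).fetchall()
print(rows)
```

Passing the filter value as a parameter (the `?` placeholder) rather than formatting it into the query string avoids SQL injection when the value comes from user input.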

Unstructured Data Extraction

Extracting data from unstructured sources often presents more challenges due to the data’s disorganized nature. This process involves converting the data into a structured format for analysis and business application.

Common methods of extracting unstructured data include:

- Using natural language processing (NLP) techniques to extract information from text-based sources such as emails, social media, and news articles

- Using optical character recognition (OCR) software to extract text from images and scanned documents

- Using machine learning algorithms to identify patterns and extract information from unstructured data sources
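
The core idea, turning free text into structured records, can be shown with a much simpler technique than NLP or machine learning: rule-based pattern matching with regular expressions. The sketch below is that simpler stand-in, not an NLP pipeline. The email text, the `INV-` invoice format, and the field names are assumptions for this example.

```python
import re

# Hypothetical unstructured email body.
EMAIL_TEXT = """Hi team,
Invoice INV-2041 for $1,250.00 is due on 2024-07-15.
Please also note invoice INV-2042 for $310.50, due 2024-08-01.
Thanks, Dana"""

# Named groups capture the invoice id, the amount, and the first date that follows on the same line.
INVOICE_PATTERN = re.compile(
    r"(?P<invoice_id>INV-\d+)\s+for\s+\$(?P<amount>[\d,]+\.\d{2})"
    r".*?(?P<due_date>\d{4}-\d{2}-\d{2})"
)


def extract_invoices(text):
    """Convert every invoice mention in the text into a structured record."""
    records = []
    for match in INVOICE_PATTERN.finditer(text):
        records.append({
            "invoice_id": match["invoice_id"],
            "amount": float(match["amount"].replace(",", "")),
            "due_date": match["due_date"],
        })
    return records


invoices = extract_invoices(EMAIL_TEXT)
print(invoices)
```

Rules like this are brittle when wording varies, which is the point at which NLP or machine learning approaches tend to become worthwhile.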

In conclusion, data extraction is a critical process that involves retrieving data from various sources, including structured and unstructured data. The methods used for extracting data depend on the type of data source and the format of the data. Structured data extraction is relatively straightforward, while unstructured data extraction requires more advanced techniques such as NLP and machine learning.

Using Data Extraction on Qualitative Data

When conducting a systematic review, data extraction is crucial to identifying relevant information from included studies. Data extraction, while often linked to quantitative data, also plays an essential role in extracting data from qualitative studies.

To extract data from qualitative studies, you should follow these steps:

- Develop a data extraction table with relevant categories and subcategories based on the research questions and objectives.

- Review the included studies and identify relevant data to extract.

- Extract the data by summarizing key findings and themes, including direct quotes and supporting evidence.

- Ensure the accuracy of the extracted data by having two or more people extract data from each study and compare their results.

- Analyze the extracted data to identify patterns, themes, and relationships between the studies.
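
The double-extraction check in the steps above can be partly automated. The following is a small sketch under assumed conventions: each reviewer's extraction table is represented as a dictionary keyed by study, and the study names, fields, and values are invented for illustration.

```python
def find_disagreements(extraction_a, extraction_b):
    """Return (study, field, value_a, value_b) for every field where the reviewers differ."""
    disagreements = []
    for study in sorted(set(extraction_a) | set(extraction_b)):
        fields_a = extraction_a.get(study, {})
        fields_b = extraction_b.get(study, {})
        for field in sorted(set(fields_a) | set(fields_b)):
            value_a, value_b = fields_a.get(field), fields_b.get(field)
            if value_a != value_b:
                disagreements.append((study, field, value_a, value_b))
    return disagreements


# Hypothetical extraction tables from two independent reviewers.
reviewer_a = {
    "Study 1": {"setting": "primary care", "theme": "trust in clinicians"},
    "Study 2": {"setting": "hospital", "theme": "access barriers"},
}
reviewer_b = {
    "Study 1": {"setting": "primary care", "theme": "trust in clinicians"},
    "Study 2": {"setting": "hospital", "theme": "cost barriers"},
}

disagreements = find_disagreements(reviewer_a, reviewer_b)
print(disagreements)
```

A comparison like this only flags where the two extractions differ; resolving each difference still requires discussion between the reviewers.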

Overall, data extraction for qualitative studies requires careful consideration of the research questions and objectives and attention to detail and accuracy in the extraction process. These steps ensure the extracted data is relevant, reliable, and useful for your systematic review.

Data Extraction and ETL

Looking at the ETL process might help put the importance of data extraction into perspective. To sum it up, ETL enables businesses and organizations to centralize data from various sources and assimilate various data types into a standard format.

The ETL process consists of three stages: Extraction, Transformation, and Loading.

- Extraction: It is the process of obtaining information from multiple sources or systems. To extract data, one must first identify and discover important information. Extraction enables the combining of various types of data and the subsequent mining of business intelligence.

- Transformation: Next, it is time to refine the extracted data. Data is organized, cleaned, and categorized during the transformation step. Audits and deletions of redundant items are among the measures to ensure the data is reliable, consistent, and useable.

- Loading: The transformed, high-quality data is loaded into a single location, where it is stored and analyzed.

Benefits of Data Extraction

Data extraction tools offer many benefits to organizations. Here are some of the benefits:

- Eliminates Manual Work: Your employees no longer need to devote time and energy to manual data extraction, which is tedious and error-prone at large volumes. Data extraction tools automate the process of collecting and organizing data.

- Choose Your Perfect Tool: Data extraction tools are built to automate extraction procedures, and they can handle complex projects involving web data, PDFs, images, and other documents.

- Diverse Data: Data extraction tools can handle various data types, including databases, web data, PDFs, documents, and images, so you are not restricted to a single kind of source. Most service providers also offer a range of output formats to suit your preferences and business requirements.

- Accurate and Specified Data: Automated extraction yields accurate, reliable data for your enterprise requirements, and even detailed specifications can be accounted for during extraction. This improves data quality and makes data administration easier.

Data Extraction for Business Challenges

When it comes to data extraction, businesses face several challenges that can make the process complex and time-consuming.

Here are some of the common challenges businesses face when it comes to data extraction:

1. Data Quality

One of the biggest challenges businesses face with data extraction is ensuring data quality. Poor data quality can lead to inaccurate insights, negatively impacting business decisions. To ensure data quality, businesses must clearly understand the data they are extracting, including its source, format, and structure.

2. Data Volume

Another challenge businesses face with data extraction is dealing with large volumes of data. Extracting data from multiple sources often leads to the accumulation of a massive amount of data, which requires thorough processing and analysis. To overcome this challenge, businesses need the right tools and resources to handle large volumes of data.

3. Data Integration

Data extraction is just one part of the data management process. Once the data is extracted, it must be integrated with other data sources to provide a complete picture of the business. This process can be challenging, as different data sources may have different formats and structures.

4. Data Security

Data security is a critical concern for businesses regarding data extraction. Extracting data from multiple sources can increase the risk of data breaches and cyber-attacks. To ensure data security, businesses need robust security measures like encryption, firewalls, and access controls.

5. Data Privacy

Data privacy is another important concern for businesses regarding data extraction. Extracting data from multiple sources can result in collecting sensitive data, such as personal information. To ensure data privacy, businesses must comply with data protection regulations like GDPR, CCPA, and HIPAA.

Kanerika: Your Trusted Data Strategy Partner

When it comes to data extraction, having a trusted partner to guide you through the process can make all the difference. That’s where Kanerika comes in.

Kanerika, a global consulting firm with expertise in data management and analytics, excels in assisting businesses in aligning their data strategy with their overall business strategy. This approach integrates complex data management techniques with a deep understanding of business objectives.

Our no-code DataOps platform, FLIP, can transform your business.

Here are just a few reasons why Kanerika is your go-to partner for data extraction:

- Expertise in Data Management: Kanerika has a unique data-to-value model that enables businesses to get self-service insights across their heterogeneous data sources. This means you can consolidate your data into a single repository, prepare it for analysis, share it with external partners, and store it for archival purposes.

- Enable Self-Service Business Intelligence: Kanerika can help you enable self-service business intelligence, so your team can access the data they need to make informed decisions. With Kanerika’s help, you can modernize and scale your data analytics, drive productivity, and reduce costs.

- Microsoft Gold Partner: Kanerika is a Microsoft Gold Partner, which means they have expertise in Microsoft Power BI and Azure Solutions for implementing BI solutions for their customers. This partnership ensures you have access to the latest technology and solutions for your data extraction needs.

Kanerika is your trusted partner for all your data extraction needs. With their expertise in data management, self-service business intelligence, and Microsoft partnership, you can be confident that you’re getting the best solutions for your business.

FAQs

What do you mean by data extraction?

Data extraction is the process of retrieving structured or unstructured data from various sources for further processing, analysis, or storage. This includes pulling information from databases, documents, APIs, web pages, and legacy systems into a usable format. Organizations use data extraction as the foundational step in data pipelines, enabling business intelligence, reporting, and decision-making. The extracted data can then be transformed and loaded into data warehouses or analytics platforms. Kanerika’s data extraction specialists help enterprises automate this critical process across complex source systems—connect with us for a tailored assessment.

How do you extract data?

Data extraction involves connecting to source systems, querying or scraping the required information, and converting it into a structured format. The method depends on your data source—databases use SQL queries, APIs require authentication and endpoint calls, while documents need parsing tools or OCR technology. Automated extraction tools handle repetitive tasks, reducing manual effort and errors. For enterprise-scale extraction, organizations implement ETL pipelines that continuously pull data from multiple sources into centralized repositories. Kanerika builds automated data extraction workflows tailored to your infrastructure—reach out to streamline your data operations.

What are the techniques of data extraction?

Data extraction techniques include full extraction, incremental extraction, web scraping, API-based extraction, and log-based change data capture. Full extraction retrieves entire datasets, while incremental extraction pulls only new or modified records since the last run, reducing processing time. Web scraping collects data from websites using automated scripts, and API extraction connects directly to application endpoints. Log-based CDC monitors database transaction logs for real-time changes. Each technique suits different use cases depending on data volume, freshness requirements, and source system capabilities. Kanerika implements the optimal extraction technique for your specific data environment—schedule a consultation today.

What is the difference between ETL and data extraction?

Data extraction is just one component of the ETL process, while ETL encompasses extraction, transformation, and loading as a complete data pipeline. Extraction retrieves raw data from source systems; transformation cleanses, validates, and restructures that data; loading moves the processed data into target destinations like data warehouses. Think of extraction as the first step that feeds into the broader ETL workflow. Organizations often confuse these terms, but understanding the distinction helps in architecting efficient data integration strategies. Kanerika designs end-to-end ETL solutions with optimized extraction layers—talk to our data engineers to modernize your pipelines.

What are the steps of the extraction process?

The data extraction process follows five key steps: source identification, connection establishment, data selection, extraction execution, and validation. First, identify all relevant source systems containing required data. Next, establish secure connections using appropriate protocols or APIs. Then define selection criteria specifying which records and fields to extract. Execute the extraction using scheduled jobs or real-time triggers. Finally, validate extracted data for completeness and accuracy before passing it downstream. This structured approach ensures reliable, repeatable extraction operations across enterprise environments. Kanerika’s extraction frameworks automate these steps with built-in validation—contact us to accelerate your data workflows.

Why is data extraction important?

Data extraction is critical because it enables organizations to consolidate information from disparate systems into unified repositories for analysis and decision-making. Without effective extraction, valuable data remains siloed across databases, applications, and documents, limiting business intelligence capabilities. Proper extraction ensures data accuracy, reduces manual processing errors, and accelerates time-to-insight. It forms the foundation for analytics, machine learning models, regulatory reporting, and operational efficiency. Companies that master data extraction gain competitive advantages through faster, data-driven decisions. Kanerika helps enterprises unlock trapped data through intelligent extraction solutions—request a free assessment to identify your opportunities.

What are the two types of data extraction?

The two primary types of data extraction are full extraction and incremental extraction. Full extraction retrieves the complete dataset from source systems every time, suitable for smaller datasets or initial loads. Incremental extraction captures only records that have changed since the previous extraction, significantly reducing processing time and system load for large-scale operations. Incremental methods include timestamp-based detection, trigger-based capture, and log-based change data capture. Most enterprise environments use incremental extraction for ongoing operations after an initial full load. Kanerika implements intelligent extraction strategies combining both approaches for optimal performance—connect with our team to design your solution.
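
As a minimal sketch of the difference, the example below runs one full extraction and then a timestamp-based incremental extraction against an in-memory SQLite table. The `orders` table, its `updated_at` column, and the function names are assumptions for illustration; trigger-based and log-based capture work differently and are not shown.

```python
import sqlite3

# Hypothetical source table with a last-modified timestamp on every row.
source = sqlite3.connect(":memory:")
source.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT, updated_at TEXT);
    INSERT INTO orders VALUES
        (1, 'shipped', '2024-05-01T09:00:00'),
        (2, 'pending', '2024-05-02T14:30:00'),
        (3, 'pending', '2024-05-03T08:15:00');
""")


def extract_full(connection):
    """Full extraction: read every row, regardless of when it changed."""
    return connection.execute(
        "SELECT id, status, updated_at FROM orders ORDER BY id"
    ).fetchall()


def extract_incremental(connection, watermark):
    """Incremental extraction: read only rows modified after the watermark."""
    rows = connection.execute(
        "SELECT id, status, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # Advance the watermark to the newest timestamp seen, if anything changed.
    new_watermark = rows[-1][2] if rows else watermark
    return rows, new_watermark


# Initial full load, then remember the newest timestamp as the watermark.
initial_load = extract_full(source)
watermark = max(row[2] for row in initial_load)

# Simulate activity in the source system after the initial load.
source.execute(
    "UPDATE orders SET status = 'shipped', updated_at = '2024-05-04T10:00:00' WHERE id = 2"
)
source.execute("INSERT INTO orders VALUES (4, 'pending', '2024-05-04T11:45:00')")

changed_rows, watermark = extract_incremental(source, watermark)
print(changed_rows)
```

This approach relies on the source reliably updating the timestamp column on every change, and it does not detect deleted rows, which is one reason log-based change data capture is sometimes preferred.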

What is an example of a data extraction tool?

Microsoft Fabric is an example of a powerful data extraction tool that connects to diverse sources including databases, cloud applications, and files. Other notable extraction tools include Informatica PowerCenter, Talend, Apache NiFi, and Alteryx. These platforms offer pre-built connectors, visual interfaces for mapping source-to-target fields, and scheduling capabilities for automated extraction jobs. Enterprise-grade tools also provide logging, error handling, and data quality checks during extraction. The right tool depends on your source systems, data volumes, and integration requirements. Kanerika holds expertise across leading extraction platforms—reach out to identify the best fit for your environment.

What is the ETL process for data extraction?

In the ETL process, data extraction serves as the initial stage where information is pulled from source systems before transformation and loading. The extraction phase involves identifying data sources, establishing connections, executing queries or API calls, and staging raw data in temporary storage. Well-designed ETL extraction handles schema variations, manages source system load, captures changes incrementally, and logs extraction metadata for lineage tracking. This extracted data then moves to transformation where cleansing and restructuring occur, followed by loading into target data warehouses or lakes. Kanerika architects ETL pipelines with optimized extraction layers using platforms like Databricks and Microsoft Fabric—let’s discuss your requirements.

What are some data extraction examples?

Common data extraction examples include pulling customer records from CRM systems like Salesforce, extracting transaction data from ERP platforms, scraping product information from e-commerce websites, and parsing invoice details from PDF documents using OCR. Financial services extract market data from trading APIs, healthcare organizations pull patient records from electronic health systems, and retailers extract point-of-sale data for inventory analytics. Log file extraction captures application performance metrics, while social media extraction gathers sentiment data for marketing insights. Each scenario requires tailored extraction approaches based on source complexity. Kanerika delivers industry-specific extraction solutions across banking, healthcare, and manufacturing—explore how we can address your use case.

Which data extraction tool is best?

The best data extraction tool depends on your specific requirements including source systems, data volumes, budget, and technical expertise. Microsoft Fabric excels for organizations invested in the Microsoft ecosystem, offering unified analytics with built-in extraction capabilities. Databricks suits enterprises requiring scalable lakehouse architectures with advanced transformation features. Informatica remains strong for complex enterprise integration scenarios, while Talend provides open-source flexibility. For document extraction, AI-powered tools with OCR capabilities handle unstructured data effectively. Evaluate tools based on connector availability, performance, and total cost of ownership. Kanerika evaluates and implements the optimal extraction tool for your enterprise—schedule a free tool assessment today.

What are the common data extraction tools?

Common data extraction tools include Microsoft Fabric, Informatica PowerCenter, Talend, Alteryx, Apache NiFi, and Databricks. Cloud-native options like AWS Glue, Azure Data Factory, and Google Cloud Dataflow offer serverless extraction capabilities. For document extraction, tools like ABBYY and Amazon Textract use AI to parse unstructured content. Open-source alternatives such as Apache Kafka handle real-time streaming extraction, while Fivetran and Airbyte provide pre-built connectors for SaaS applications. Enterprise organizations typically combine multiple tools based on source system requirements and data velocity needs. Kanerika has certified expertise across these platforms—contact us to build your integrated extraction architecture.

What is SQL data extraction?

SQL data extraction uses Structured Query Language to retrieve data from relational databases like SQL Server, Oracle, PostgreSQL, and MySQL. SELECT statements with WHERE clauses filter specific records, while JOINs combine data across multiple tables. SQL extraction can be full-table pulls or targeted queries based on date ranges, status flags, or other criteria. Stored procedures and views encapsulate complex extraction logic for reusability. For incremental extraction, SQL queries reference timestamp columns or change tracking tables to identify modified records. SQL remains the most direct extraction method for relational data sources. Kanerika’s data engineers optimize SQL extraction queries for performance and accuracy—reach out to modernize your database extraction processes.

What is data extraction and ETL?

Data extraction and ETL represent complementary concepts in data integration. Extraction is the process of pulling data from source systems, while ETL is the complete pipeline encompassing Extraction, Transformation, and Loading. In ETL workflows, extraction retrieves raw data, transformation cleanses and restructures it according to business rules, and loading delivers processed data to target destinations like data warehouses or analytics platforms. Modern variations include ELT, where raw data loads first and transforms within the target system. Together, these processes enable organizations to consolidate data for reporting and analytics. Kanerika delivers complete ETL solutions with intelligent extraction capabilities—start a conversation to transform your data architecture.