According to Gartner, 80% of data and analytics projects fail to deliver business value—a statistic that highlights a persistent flaw in centralized data architectures. Enter data mesh, a decentralized model that shifts data ownership to domain experts across functions like marketing, sales, and operations. By treating data as a product and enabling teams to govern, document, and share their own datasets, organizations can overcome bottlenecks, improve data quality, and scale insights faster.

First introduced by Zhamak Dehghani of ThoughtWorks, data mesh is rapidly gaining traction as a practical solution for large enterprises navigating complex data ecosystems.

One of the key benefits of Data Mesh is that it helps address data security challenges through distributed, decentralized ownership. Organizations also typically have multiple data sources from different lines of business that must be integrated for analytics. With Data Mesh, data owners are responsible for their data products’ quality, access, and distribution. This allows for greater accountability and transparency while also reducing the bottlenecks and silos that can occur in traditional centralized data architectures.

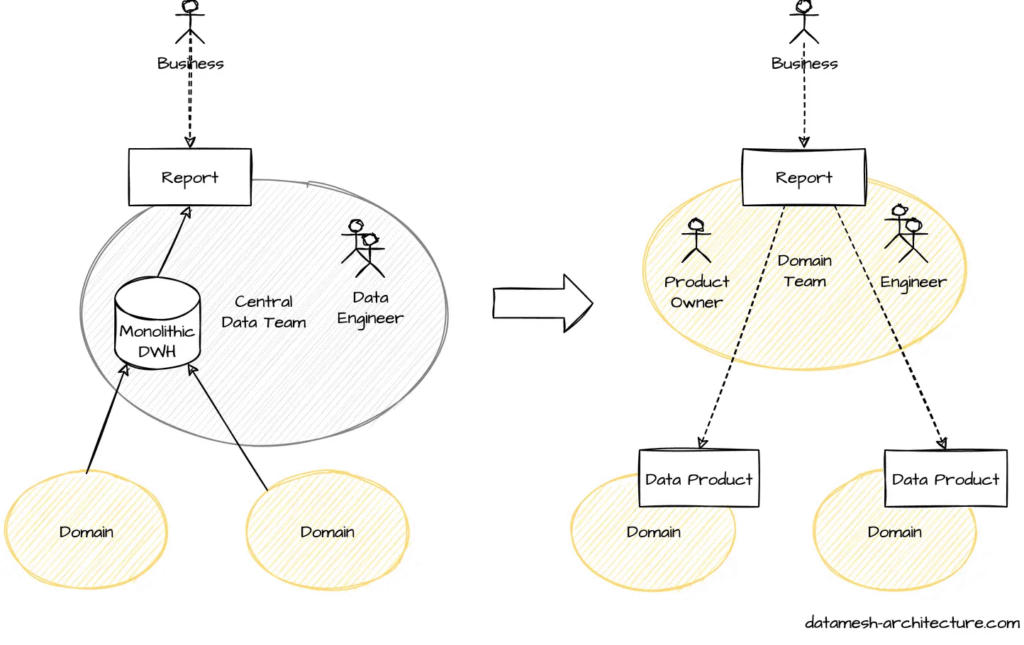

How Data Mesh Distributes Control and Responsibility

A data mesh fundamentally changes how organizations perceive and manage data—it’s no longer a passive by-product but a valuable product in itself. In this model, data ownership shifts from a centralized infrastructure team to the domain experts who generate and understand the data best. These producers become data product owners, responsible not only for creating usable datasets but also for understanding the needs of data consumers and designing APIs that support seamless, self-service access.

While domain teams handle the transformation, documentation, and stewardship of their data—including defining semantics, managing metadata, and setting access policies—a central governance function still plays a key role. It ensures consistency, compliance, and interoperability across the organization. Similarly, a central data engineering team continues to provide shared infrastructure guidance, focusing more on platform support than direct ownership of pipelines.

Much like microservices in software architecture, data mesh promotes modularity by aligning data around business functions. This domain-driven structure enables scalable, real-time integration across decentralized sources, empowering users—from analysts to data scientists—to access and leverage data products independently and efficiently.

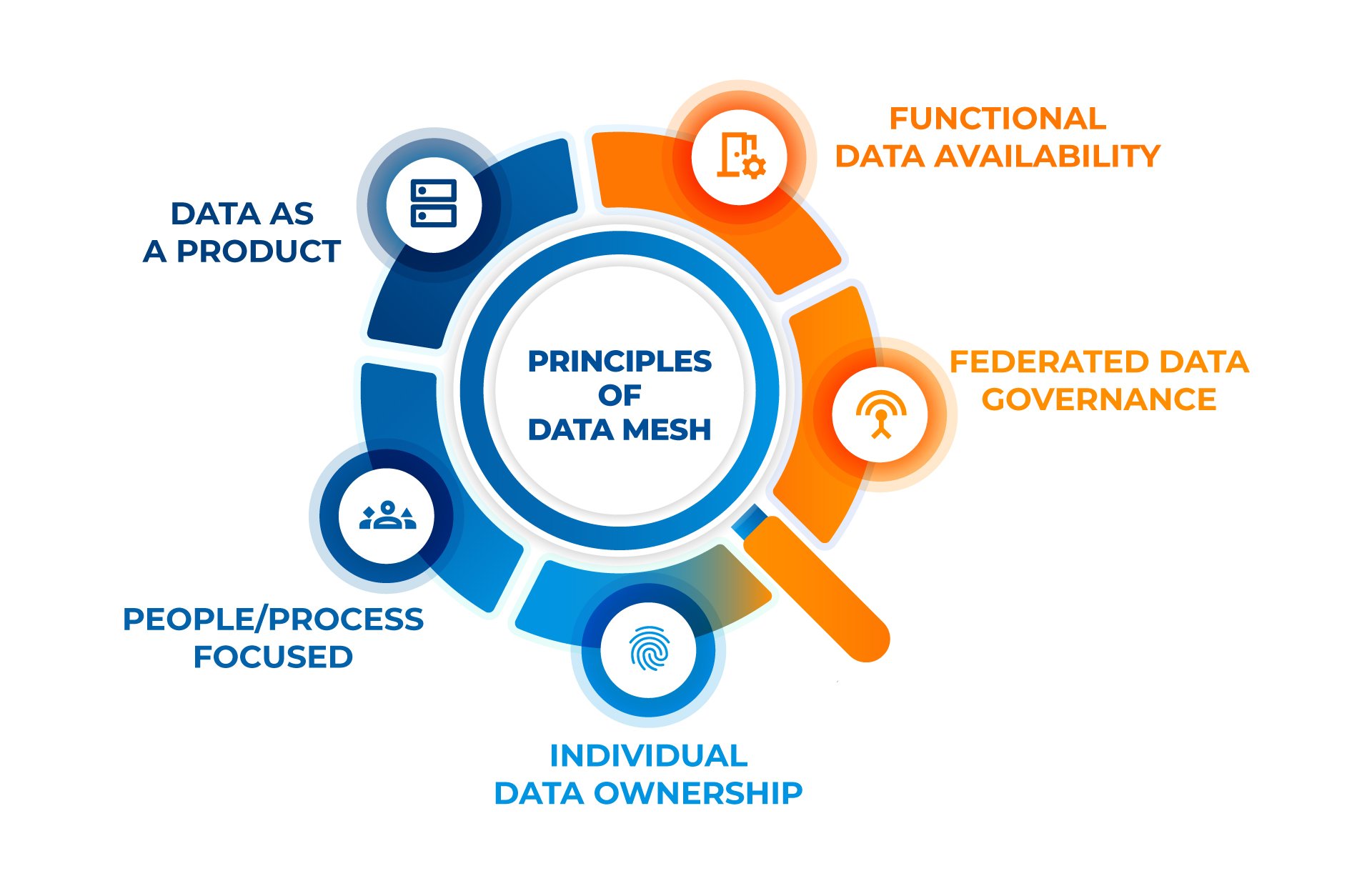

Understanding Data Mesh

Data Mesh is a holistic approach involving people and processes. It’s adaptable, allowing organizations to tailor it to their unique needs. Focusing on a few principles provides a framework for more efficient and effective data management in the modern data-driven world.

- Functional Data Availability: Ensures that data is available and functionally usable across different domains. This principle emphasizes the need for data to be easily accessible and in a format suitable for various functional requirements, enhancing its utility for diverse business applications.

- Federated Governance: Governance is spread across various domains, allowing each team to manage its data while aligning with the broader organizational standards. This promotes faster decision-making and collaborative efforts.

- Individual Data Ownership: Data is organized around business domains, not just technical functions. Each domain is managed by a data product owner, ensuring the data is accurate, timely, and relevant for business decisions.

- People/Process Focused: Self-serve data platforms empower users across the organization to access and use data independently, fostering a more agile and responsive data environment.

- Treating Data as a Product: Data is viewed as a product with its own lifecycle, from creation to maintenance, ensuring it remains discoverable, usable, and high-quality.

Data Mesh vs. Data Lake vs. Data Fabric

When managing large amounts of data, several modern frameworks are available, including Data Mesh, Data Lake, and Data Fabric. While all three concepts are used to improve data management, their approach and functionality differ.

Data Lake

Data Lake is a centralized repository that stores raw data from multiple sources in its native format. The primary goal of Data Lake is to provide a cost-effective way to store and process large amounts of data. Additionally, it is designed to be flexible and scalable, making it easier to store and analyze data from multiple sources.

Data Fabric

Data Fabric is a design concept and architecture that addresses the complexity of data management. It minimizes disruption to data consumers while ensuring that data on any platform from any location can be effectively combined, accessed, shared, and governed. Moreover, it is designed to be agile and flexible, allowing organizations to adapt quickly to changing business needs.

Data Mesh

Data Mesh is a new data management approach emphasizing decentralization and domain-driven design. In a Data Mesh architecture, data is treated as a product, and each domain owns and manages its data products. The goal of Data Mesh is to improve data quality, reduce data silos, and increase data ownership and autonomy.

Benefits of Data Mesh

Implementing a data mesh architecture can bring several benefits to your organization. Here are some of the most significant benefits:

1. Improved Data Access

Data mesh architecture promotes decentralized data ownership, which means data is owned and managed by the domain or business function that understands it best. This approach allows faster and more efficient access to data, as it eliminates the need for data consumers to access data through a centralized team.

2. Better Scalability

Data mesh architecture enables better scalability by allowing individual domain teams to manage their data and data pipelines. This approach ensures that the data infrastructure can scale as the organization grows without requiring a centralized team to manage everything.

3. Enhanced Data Security

Data mesh architecture promotes data security by allowing domain teams to manage their data and pipelines. This approach ensures that sensitive data is only accessible to those who need it and that data is protected from unauthorized access.

4. Increased Agility

Data mesh architecture promotes agility by enabling domain teams to move faster and experiment with new data products without requiring approval from a centralized team. This approach allows organizations to respond quickly to changing business needs and market conditions.

5. Improved Data Quality

Data mesh architecture promotes data quality by allowing domain teams to take ownership of their own data and data pipelines. This approach ensures that data is accurate, up-to-date, and relevant to the business needs of each domain team.

Implementing a data mesh architecture can bring several benefits to your organization, including improved data access, better scalability, enhanced data security, increased agility, and improved data quality.

Top Use Cases for Data Mesh

Data Mesh is a decentralized data architecture gaining popularity due to its ability to enable self-service access and provide more ownership to the producers of a given dataset. Here are some top use cases for Data Mesh:

1. Analytics

Data Mesh is particularly useful for analytics because it allows organizations to integrate multiple data sources from different lines of business. Dedicated data product owners can manage and maintain data quality by treating data as a product, making it easier to analyze. This approach also enables business units to take ownership of their data, which can lead to better decision-making.

2. Data Governance

Data Mesh can help organizations improve their data governance by providing a framework for federated governance. Simply put, each domain has its own governance policies and practices, enforced by the data owners within shared organizational standards. By treating data as a product, organizations can ensure data quality alongside ethical and responsible usage.

3. Data Science

Data Mesh can also be used in data science to improve the accuracy and reliability of models. By treating data as a product, data scientists can be more confident that their data is high quality and properly curated. Better-curated inputs in turn support more accurate and reliable models, which is crucial for better predictions and decisions.

4. Collaboration

Data Mesh can also facilitate collaboration between different business units. By treating data as a product, data product owners can work together to ensure that data is properly integrated and used in a consistent, standardized way. This can lead to better communication between business units, and ultimately to better decision-making and improved business outcomes.

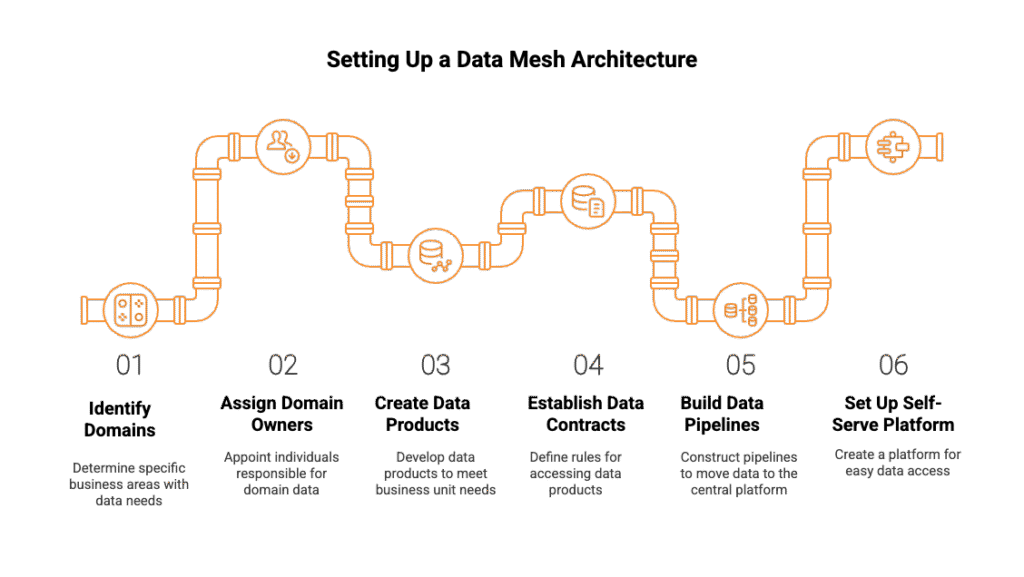

How to Set Up a Data Mesh for Your Organization

Implementing a data mesh architecture can be complex, but it can also provide significant benefits to your organization. Here are some steps to help you set up a data mesh for your organization:

1. Identify your domains:

The first step in setting up a data mesh is identifying your organization’s domains. A domain is a specific business area with data needs and requirements. For example, you may have marketing, sales, finance, and customer service domains.

2. Assign domain owners:

Once you have identified your domains, you must assign owners to each domain. Domain owners are responsible for the data within their domain and ensuring that it meets the needs of their business unit.

3. Create data products:

Each domain owner should create data products that meet the needs of their business unit. Data products can include raw data, cleaned data, and aggregated data. These data products should be stored in the domain’s data lake.

4. Establish data contracts:

Data contracts define the available data products within each domain and the rules for accessing them. Moreover, domain owners should set clear agreements about data use. These agreements need periodic review to ensure they still fit the needs of the business.

5. Build data pipelines:

Once the data products and contracts have been established, you must build pipelines to move the data from the domain’s data lake to the central data platform. Data engineers should work with the domain owners to build these pipelines.

6. Set up a self-serve data platform:

The final step is to set up a self-serve data platform. This platform should allow business units to access the needed data products without going through IT or data engineering. The self-serve data platform should also provide data discovery, exploration, and visualization tools.
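Steps 4 and 6 above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `DataContract` and `Catalog` classes, their fields, and the `sales.orders` product are all hypothetical names chosen for the example, and a real platform would back them with a proper catalog and access-control service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract a domain publishes for one data product."""
    product: str                  # e.g. "sales.orders"
    owner: str                    # accountable domain owner
    schema: dict                  # column name -> expected Python type
    allowed_consumers: frozenset  # domains permitted to read the product

    def validate(self, rows):
        """Return a list of human-readable violations (empty means valid)."""
        problems = []
        for i, row in enumerate(rows):
            missing = set(self.schema) - set(row)
            if missing:
                problems.append(f"row {i}: missing columns {sorted(missing)}")
            for column, expected in self.schema.items():
                if column in row and not isinstance(row[column], expected):
                    problems.append(
                        f"row {i}: {column} should be {expected.__name__}"
                    )
        return problems


class Catalog:
    """Toy self-serve catalog: publish products, then discover and read them."""

    def __init__(self):
        self._products = {}

    def publish(self, contract, rows):
        # Refuse to publish data that breaks the product's own contract.
        problems = contract.validate(rows)
        if problems:
            raise ValueError(f"{contract.product} violates its contract: {problems}")
        self._products[contract.product] = (contract, list(rows))

    def discover(self, keyword):
        # Simple keyword search over product names.
        return sorted(name for name in self._products if keyword in name)

    def read(self, product, consumer):
        # Enforce the access rule declared in the contract.
        contract, rows = self._products[product]
        if consumer not in contract.allowed_consumers:
            raise PermissionError(f"{consumer} may not read {product}")
        return rows


orders_contract = DataContract(
    product="sales.orders",
    owner="sales-domain-team",
    schema={"order_id": str, "amount": float},
    allowed_consumers=frozenset({"finance", "marketing"}),
)

catalog = Catalog()
catalog.publish(orders_contract, [{"order_id": "A-1", "amount": 19.99}])
print(catalog.discover("orders"))
print(catalog.read("sales.orders", consumer="finance"))
```

The point of the design is that the contract travels with the product: the same object that documents the schema also gates publishing and reading, so consumers do not need to ask a central team what a product contains or who may use it.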

Pitfalls to Avoid for Data Mesh

1. Failure to Follow DATSIS Principles

The DATSIS acronym stands for Discoverable, Addressable, Trustworthy, Self-describing, Interoperable, and Secure. These principles are essential for successful data mesh implementation. Failure to implement any part of DATSIS could doom your data mesh.

- Discoverable: Consumers must be able to research and identify data products from different domains.

- Addressable: Data products must be accessible through a standard interface.

- Trustworthy: Data must be reliable and accurate.

- Self-describing: Data products must be self-describing, including metadata and documentation.

- Interoperable: Data products must be able to work with other products and systems.

- Secure: Data must be protected from unauthorized access.
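One way to make the DATSIS checklist actionable is to run it against each data product's descriptor before the product is published. The sketch below assumes a hypothetical descriptor format (a plain dictionary with fields such as `catalog_entry`, `endpoint`, and `access_policy`); the field names, the accepted formats, and the example URL are illustrative assumptions, not part of any standard.

```python
# Hypothetical descriptor fields mapped to each DATSIS property.
DATSIS_CHECKS = {
    "Discoverable": lambda p: bool(p.get("catalog_entry")),
    "Addressable": lambda p: bool(p.get("endpoint")),
    "Trustworthy": lambda p: bool(p.get("quality_checks")),
    "Self-describing": lambda p: bool(p.get("schema")) and bool(p.get("description")),
    "Interoperable": lambda p: p.get("format") in {"parquet", "avro", "json"},
    "Secure": lambda p: bool(p.get("access_policy")),
}


def datsis_gaps(product):
    """Return the DATSIS properties the descriptor fails to satisfy."""
    return [name for name, check in DATSIS_CHECKS.items() if not check(product)]


orders = {
    "catalog_entry": "sales.orders",
    "endpoint": "https://data.example.com/sales/orders",  # placeholder URL
    "schema": {"order_id": "string", "amount": "double"},
    "description": "One row per confirmed order.",
    "format": "parquet",
    "quality_checks": [],    # none defined yet
    "access_policy": None,   # not set yet
}

print(datsis_gaps(orders))  # → ['Trustworthy', 'Secure']
```

A check like this only confirms that the metadata exists. Whether the data is actually trustworthy or secure still depends on the quality checks and policies behind those fields.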

2. Ignoring Data Observability

Data observability is the ability to monitor and understand the behavior of data in a system. It is essential for ensuring data quality, reliability, and accuracy. Ignoring data observability can lead to undetected data quality issues, which undermine the effectiveness of the data mesh.
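As a rough illustration of what monitoring a data product can mean in practice, the sketch below computes three common health signals for a batch of records: row count, freshness, and null rate per required field. The `observe` function, its parameters, and the sample records are assumptions made for this example. Production setups would usually rely on a dedicated observability tool rather than hand-written checks.

```python
from datetime import datetime, timedelta, timezone


def observe(rows, timestamp_field, required_fields, max_age, now=None):
    """Compute simple health signals for a batch of records."""
    now = now or datetime.now(timezone.utc)
    if not rows:
        return {"row_count": 0, "fresh": False, "null_rate": {}}
    # Freshness: how old is the most recently updated record?
    newest = max(row[timestamp_field] for row in rows)
    # Null rate: share of records missing each required field.
    null_rate = {
        f: sum(1 for row in rows if row.get(f) is None) / len(rows)
        for f in required_fields
    }
    return {
        "row_count": len(rows),
        "fresh": now - newest <= max_age,
        "null_rate": null_rate,
    }


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
rows = [
    {"order_id": "A-1", "amount": 10.0, "updated_at": now - timedelta(hours=30)},
    {"order_id": "A-2", "amount": None, "updated_at": now - timedelta(hours=26)},
]
report = observe(rows, "updated_at", ["order_id", "amount"], timedelta(hours=24), now=now)
print(report)
```

Here the batch would be flagged as stale (the newest record is 26 hours old against a 24-hour limit) and half of the `amount` values are missing, which are the kinds of signals a domain team would want to alert on.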

3. Lack of Governance

A data mesh requires strong governance to ensure security and privacy. Without governance, there is a risk of data misuse, which can lead to legal and reputational damage.

4. Overlooking the Importance of Culture

The success of a mesh depends on a culture of collaboration and transparency. Data owners must be willing to share their data, and business users must be willing to work with data in new ways. Overlooking the importance of culture can lead to resistance to change and a lack of adoption.

5. Underestimating Technical Complexity

Implementing a mesh can be technically complex, requiring significant system and process changes. Underestimating technical complexity can lead to delays, cost overruns, and implementation failure.

By avoiding these pitfalls, you can ensure that your data mesh implementation is successful and that you can reap the benefits of improved data access and usage.

Applying Data Mesh Principles Through Smart Engineering

Implementing a data mesh isn’t just a conceptual shift. It requires strong data engineering foundations to decentralize ownership, ensure interoperability, and maintain governance across domains. Kanerika has delivered measurable outcomes by applying these principles in real-world scenarios.

Logistics & Supply Chain: Enabling Federated Data Ownership

A leading logistics firm was grappling with fragmented systems that delayed access to critical insights and exposed security gaps. Kanerika addressed this by engineering a unified, domain-aligned data infrastructure, enabling business teams to own and govern their data while maintaining centralized oversight.

Results achieved:

- 42% increase in decision-making precision

- 54% improvement in data accuracy

- 61% growth in data-driven decisions

By decentralizing operational data through scalable pipelines and domain-specific reporting, this solution mirrors the self-serve, federated approach at the heart of data mesh.

Media Enterprise: Scaling Analytics Across Domains

A global media company needed to unify siloed data from Salesforce and external systems. Kanerika restructured their architecture to support domain-centric integration, allowing different business units to create and consume their own data products while aligning with organizational standards.

Results delivered:

- 75% increase in data integration capacity

- 50% reduction in report customization time

- 65% improvement in scalability for analytics

This outcome demonstrates how decentralized engineering and thoughtful orchestration enable a mesh-style architecture—where teams can move fast without sacrificing control or context.

Kanerika: Your Trusted Data Strategy Partner

At Kanerika, we specialize in transforming complex data landscapes into scalable, business-ready ecosystems. As a certified Microsoft Data & AI Solutions partner and a strategic collaborator with Databricks, we deliver end-to-end data solutions that empower organizations to harness the full potential of modern architectures like data mesh.

Our expertise spans across data integration, advanced analytics, AI/ML, and cloud-native platform development. Leveraging tools such as Microsoft Fabric, Azure Synapse, and Databricks Lakehouse, we help businesses break down silos, unify data across domains, and enable real-time decision-making.

Whether you’re just beginning your data modernization journey or looking to scale a decentralized architecture, Kanerika combines strategic consulting with deep technical delivery. Our solutions are designed to be secure, future-proof, and aligned with your unique business objectives—ensuring your data not only flows efficiently but delivers measurable impact.

Partnering with Kanerika for your data mesh strategy can provide numerous benefits. Some of these benefits include:

- Increased efficiency and agility in data management

- Reduced bottlenecks and silos in data management

- Improved data security and governance

- Better alignment between business objectives and data strategy

With Kanerika as your data strategy partner, you’re equipped to build a truly data-driven organization—one domain, one product, and one insight at a time.

FAQs

What is a data mesh?

A data mesh isn’t a single technology, but a *sociotechnical* approach to data management. It distributes data ownership and governance across domain teams, empowering them to manage their own data products. This fosters agility and reduces reliance on a central, often bottlenecked, data team. The key is collaboration and standardized data access, not centralization.

What are the 4 pillars of data mesh?

Data mesh isn’t built on physical pillars, but rather four key conceptual principles. It’s about decentralizing data ownership (domain ownership), treating data as a product, enabling self-serve data infrastructure, and establishing a federated computational governance model ensuring data quality and discoverability across the entire organization. These interwoven aspects empower agility and collaboration. Success relies on strong inter-team coordination within this decentralized structure.

What are the differences between data mesh and data lake?

A data lake is a raw, centralized storage of all your data, like a giant digital warehouse. A data mesh, however, distributes data ownership and governance across domain teams, each managing their own “data products” which might be built *on top of* a data lake (or other sources). The key difference is centralized storage versus decentralized ownership and responsibility for data quality and accessibility. Data mesh aims for greater agility and domain expertise.

What is SQL mesh?

Despite the similar name, SQLMesh is not a data mesh implementation. It is an open-source data transformation framework for defining, testing, and deploying SQL and Python data pipelines, comparable in purpose to tools like dbt. It runs *on top* of your existing warehouse or query engine rather than replacing it. A domain team could use it to build and maintain its data products, but on its own it does not provide the decentralized ownership or federated governance that define a data mesh.

What is data mesh in AWS?

Data mesh on AWS isn’t a single AWS service, but a *design principle*. It decentralizes data ownership, empowering individual teams to manage their own data products. AWS provides various services (like S3, Glue, Lake Formation) that *support* building a data mesh architecture, but doesn’t offer a pre-packaged “data mesh” solution. Think of it as a blueprint, not a building.

What is the difference between data fabric and data mesh?

Data fabric is a more holistic, integrated approach to data management, unifying access across various sources with a focus on automation and governance. Data mesh, conversely, distributes ownership of data products to domain-specific teams, emphasizing decentralized governance and self-service capabilities. Think of data fabric as a unified highway system, while data mesh is a network of smaller, interconnected roads. The core difference lies in centralized vs. decentralized control and responsibility.

Is Databricks a data mesh?

No, Databricks isn’t inherently a data mesh, but it can be *part* of a data mesh architecture. Databricks provides the foundational data lakehouse platform – the technical infrastructure – but a data mesh requires decentralized data ownership and domain-specific data products, which need to be designed and implemented separately. Think of Databricks as the engine, not the entire car.

How does data mesh differ from traditional data architectures?

Unlike centralized data architectures that rely on monolithic data warehouses or lakes, data mesh decentralizes data ownership to domain teams. This shift enables faster decision-making and reduces bottlenecks associated with centralized data management.

How does data mesh handle data governance and compliance?

Data mesh employs federated computational governance, where each domain adheres to global standards and policies, ensuring compliance and data security across the organization.
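The "computational" part of federated computational governance means that global rules are expressed as code and evaluated automatically against every domain's data products. A minimal sketch follows, assuming hypothetical policy names (`has_owner`, `pii_is_masked`, `has_retention`) and a hypothetical product descriptor format. Real deployments typically use a policy engine or the platform's built-in controls.

```python
# Global rules defined once by the federated governance group (all hypothetical).
GLOBAL_POLICIES = {
    "has_owner": lambda p: bool(p.get("owner")),
    "pii_is_masked": lambda p: all(
        col.get("masked", False) for col in p.get("columns", []) if col.get("pii")
    ),
    "has_retention": lambda p: isinstance(p.get("retention_days"), int),
}


def evaluate(products):
    """Map each product name to the list of global policies it violates."""
    return {
        p["name"]: [rule for rule, ok in GLOBAL_POLICIES.items() if not ok(p)]
        for p in products
    }


products = [
    {"name": "marketing.leads", "owner": "marketing", "retention_days": 365,
     "columns": [{"name": "email", "pii": True, "masked": True}]},
    {"name": "sales.orders", "owner": "sales",
     "columns": [{"name": "customer_email", "pii": True}]},
]

print(evaluate(products))
# → {'marketing.leads': [], 'sales.orders': ['pii_is_masked', 'has_retention']}
```

Each domain keeps control over how it builds its product, while the same global rules run unchanged across all of them.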

How does data mesh improve data quality?

By treating data as a product and assigning ownership to domain teams, data mesh ensures that those closest to the data are responsible for its quality, leading to more accurate and reliable data products.

What is a data mesh vs data lake?

A data mesh and a data lake are fundamentally different approaches to managing enterprise data. A data lake is a centralized repository where raw data from across the organization is stored in one place, typically managed by a central data engineering team. A data mesh, by contrast, is a decentralized architecture where individual business domains own and manage their own data as products, with no single team acting as a bottleneck. The core distinction comes down to ownership and scalability. Data lakes often struggle at enterprise scale because all data pipelines, transformations, and governance responsibilities fall on one team. This creates slow delivery cycles and quality issues. Data mesh solves this by distributing ownership to the domain teams closest to the data, who understand its context and can maintain its quality more effectively. Data lakes also tend to accumulate unstructured, poorly governed data over time, sometimes called a data swamp. Data mesh enforces accountability through domain ownership and treats data as a product with defined consumers, clear SLAs, and quality standards. That said, data mesh and data lake are not always mutually exclusive. Some organizations use a data lake as the underlying storage layer while applying data mesh principles on top to govern how data is owned, shared, and consumed across domains. Organizations working with Kanerika on modern data architecture often explore this hybrid approach to balance centralized storage efficiency with decentralized governance and agility.

Is data mesh obsolete?

Data mesh is not obsolete; it is still an actively evolving framework that many large enterprises are adopting to solve the scalability and ownership problems that centralized data architectures cannot handle well. The question of obsolescence often comes up because data mesh has matured past its initial hype cycle. Early implementations exposed real challenges around governance complexity, organizational change management, and the cost of building federated infrastructure. These friction points led some teams to question its viability. But maturing frameworks face this scrutiny naturally, and data mesh has responded with clearer implementation patterns, better tooling support, and stronger alignment with data contract standards. What keeps data mesh relevant is the underlying problem it solves: as enterprises grow, central data teams become bottlenecks, data quality accountability blurs, and domain-specific context gets lost in translation. Data mesh addresses all three by distributing ownership to the teams closest to the data. That problem has not gone away; if anything, it has intensified with the growth of real-time data, AI pipelines, and multi-cloud environments. Organizations working with Kanerika on data platform modernization consistently find that domain-driven data ownership, one of the core principles of data mesh, dramatically improves both data reliability and cross-functional agility. The architecture is not a trend that burned out. It is a design philosophy that continues to gain traction wherever data complexity outpaces what centralized models can manage.

What are the 4 C's of data?

The 4 C’s of data are completeness, consistency, correctness, and currency: four core dimensions used to evaluate and maintain data quality across an organization. Completeness means all required data fields are populated with no critical gaps. Consistency ensures the same data values appear uniformly across different systems and datasets, which becomes especially important in distributed architectures like data mesh where multiple domain teams manage their own data products. Correctness refers to whether the data accurately reflects real-world facts, free from errors or misrepresentations. Currency (also called timeliness) measures how up-to-date the data is and whether it reflects the most recent state of the business. In a data mesh context, these four qualities become the shared responsibility of each domain team rather than a central data team. Domain owners are accountable for delivering data products that meet all four standards, which is a fundamental shift from traditional centralized governance models. Organizations implementing data mesh frameworks, like those Kanerika helps design and deploy, build these quality dimensions directly into domain-level data contracts and observability pipelines, ensuring consumers across the enterprise can trust the data they access without relying on a bottlenecked central team to validate it.
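To make the four dimensions concrete, the sketch below scores a small batch of records on each of them as a fraction between 0 and 1. Everything here is an illustrative assumption: the `four_cs` function, the choice of an `email` field checked against a second system for consistency, the "amount must be non-negative" correctness rule, and the 30-day currency window.

```python
from datetime import date


def four_cs(rows, reference, required, valid, as_of, max_age_days):
    """Score a batch on completeness, consistency, correctness, and currency."""
    if not rows:
        raise ValueError("cannot score an empty batch")
    n = len(rows)
    # Completeness: every required field is populated.
    complete = sum(all(r.get(f) is not None for f in required) for r in rows)
    # Consistency: the email matches the value held in a second system.
    consistent = sum(reference.get(r["id"]) == r.get("email") for r in rows)
    # Correctness: the record passes a domain-specific validity rule.
    correct = sum(bool(valid(r)) for r in rows)
    # Currency: the record was updated within the allowed window.
    current = sum((as_of - r["updated"]).days <= max_age_days for r in rows)
    return {
        "completeness": complete / n,
        "consistency": consistent / n,
        "correctness": correct / n,
        "currency": current / n,
    }


rows = [
    {"id": 1, "email": "a@example.com", "amount": 10.0, "updated": date(2024, 3, 1)},
    {"id": 2, "email": None, "amount": 5.0, "updated": date(2024, 3, 2)},
    {"id": 3, "email": "c@example.com", "amount": -4.0, "updated": date(2024, 1, 1)},
    {"id": 4, "email": "d@example.com", "amount": 7.5, "updated": date(2024, 3, 3)},
]
# Emails as recorded in another system, e.g. a CRM (hypothetical data).
crm_emails = {1: "a@example.com", 2: "b@example.com", 3: "c@example.com", 4: "d@other.example"}

scores = four_cs(
    rows,
    reference=crm_emails,
    required=["email", "amount"],
    valid=lambda r: r["amount"] is not None and r["amount"] >= 0,
    as_of=date(2024, 3, 5),
    max_age_days=30,
)
print(scores)
# → {'completeness': 0.75, 'consistency': 0.5, 'correctness': 0.75, 'currency': 0.75}
```

Scores like these could be written into a data contract as minimum thresholds, so that a product falling below them is flagged before consumers rely on it.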

What is the purpose of data mesh?

Data mesh exists to solve the scalability and ownership problems that plague centralized data architectures in large enterprises. As organizations grow, a single data team becomes a bottleneck, unable to keep up with demand from multiple business units, each with unique data needs and domain expertise. The core purpose of data mesh is to distribute data ownership to the teams that understand it best. Instead of funneling all data through a central platform, domain teams like sales, finance, or logistics own, manage, and publish their data as products. This decentralization reduces bottlenecks, improves data quality, and accelerates time-to-insight across the enterprise. Beyond ownership, data mesh also aims to establish federated governance, ensuring that distributed data still adheres to consistent standards, security policies, and interoperability requirements. This balance between autonomy and governance is what makes data mesh practical at enterprise scale rather than just theoretically appealing. For companies dealing with fragmented data pipelines, slow analytics delivery, or poor cross-domain data quality, data mesh provides a structural solution rather than just a technical one. Kanerika helps enterprises design and implement data mesh architectures that align domain ownership with real business goals, making distributed data management both operationally viable and strategically valuable.

What are the 3 C's of data?

The 3 C’s of data are completeness, consistency, and correctness: three foundational quality dimensions that determine whether data is trustworthy and usable for decision-making. Completeness means all required data fields are populated with no critical gaps. Consistency ensures that the same data point holds the same value across different systems, databases, or reports, so a customer’s address in your CRM matches what’s in your billing system. Correctness confirms that data accurately reflects real-world facts, free from errors, duplicates, or outdated entries. In a data mesh context, the 3 C’s take on added significance. Because data mesh distributes ownership across domain teams, each team becomes directly responsible for maintaining these three quality standards within their own data products. This shifts quality assurance from a centralized data engineering bottleneck to a domain-level accountability model, which is one of the core reasons enterprises see data mesh as a scalable long-term architecture. When data products fail on any of these three dimensions, downstream consumers (analysts, data scientists, business stakeholders) lose trust in the data, leading to poor decisions or redundant data cleanup work. Kanerika’s approach to data mesh implementations specifically emphasizes building quality contracts around completeness, consistency, and correctness so that domain-owned data products meet enterprise-grade standards before they’re shared across the organization.

What are the 5 layers of a data platform?

A data platform typically consists of five layers: ingestion, storage, processing, serving, and governance. The ingestion layer collects raw data from sources like databases, APIs, and streaming systems. The storage layer holds that data in structured or unstructured formats, including data lakes and warehouses. The processing layer transforms and enriches raw data through batch or real-time pipelines. The serving layer delivers processed data to consumers via dashboards, APIs, or query engines. The governance layer runs across all others, enforcing data quality, access controls, lineage tracking, and compliance policies. In a data mesh architecture, these five layers don’t disappear but get distributed across domain teams rather than managed centrally. Each domain owns its ingestion, storage, and processing pipelines, while a self-serve data platform abstracts the underlying infrastructure complexity so teams can operate independently. The governance layer shifts from centralized enforcement to federated computational policies, meaning rules are applied automatically at scale rather than through manual oversight. Understanding these layers matters because data mesh asks organizations to replicate this stack across multiple domains efficiently, which is where platform engineering and infrastructure standardization become critical success factors. Kanerika helps enterprises design and implement domain-oriented data platforms that maintain consistency across all five layers while enabling the autonomy that makes data mesh effective.

What are the 4 D's of digital transformation?

The 4 D’s of digital transformation are digitization, digitalization, disruption, and data: the four interconnected forces that drive how organizations evolve their operations and business models in the digital age. Digitization refers to converting analog information into digital formats, such as scanning paper records into digital files. Digitalization goes further by using digital technologies to change business processes and workflows, improving efficiency and reducing manual effort. Disruption describes the broader market shifts that occur when digital innovation fundamentally changes industries, forcing companies to rethink their competitive positioning. Data is increasingly recognized as the fourth pillar because meaningful transformation depends on an organization’s ability to collect, manage, and extract value from data at scale. In the context of data mesh architecture, the data pillar is especially relevant. Traditional centralized data platforms often struggle to support enterprise-wide transformation because they create bottlenecks as data volumes and domains grow. Data mesh addresses this by distributing data ownership across domain teams, treating data as a product, and enabling the kind of governed, scalable data access that makes the other three D’s more effective. Organizations pursuing genuine digital transformation need a data infrastructure capable of supporting cross-functional decision-making, and data mesh provides that foundation. Kanerika works with enterprises to align data architecture decisions with broader transformation goals, ensuring data strategies support real business outcomes rather than just technical upgrades.

What are the 5 data principles?

Data mesh is usually defined by four core principles: domain ownership, data as a product, self-serve data infrastructure, and federated computational governance. Interoperability, which the governance principle is meant to secure, is sometimes listed separately as a fifth. Here is what each means in practice: Domain ownership means individual business teams like finance, marketing, or operations own and manage their own data rather than routing everything through a central data team. This eliminates bottlenecks and keeps data closer to the people who understand it best. Data as a product shifts the mindset from treating data as a byproduct of operations to treating it as a first-class product with defined quality standards, documentation, and SLAs that internal consumers can rely on. Self-serve data infrastructure gives domain teams the tools and platforms they need to publish, manage, and consume data independently, without requiring deep engineering support for every task. Federated computational governance balances autonomy with accountability by establishing shared standards such as data contracts, security policies, and compliance rules that apply across all domains without centralizing control. Interoperability ensures data from different domains can be discovered, accessed, and combined consistently, making cross-domain analytics and reporting practical rather than painful. These principles work together to address the scaling limitations of centralized data lakes and warehouses. Enterprises adopting this model, particularly with implementation support from firms like Kanerika, typically see faster data delivery cycles and stronger data quality ownership across business units.

What skills are needed for data mesh?

Data mesh requires a combination of technical, analytical, and organizational skills spread across domain teams rather than concentrated in a central data group.

On the technical side, team members need proficiency in data engineering, including pipeline development, data modeling, and working with distributed systems. Familiarity with data contracts, schema management, and API design is increasingly important since these underpin how domains expose data as products. Knowledge of a cloud platform such as AWS, Azure, or GCP is usually needed as well, since most data mesh implementations run on cloud-native infrastructure.

Analytical skills matter just as much. Domain teams need people who understand data quality principles, can define meaningful SLAs for their data products, and know how to measure reliability and usage. Basic data governance knowledge helps teams manage ownership responsibly without defaulting to a centralized team for every decision.

Organizationally, product thinking is one of the most underrated skills in data mesh adoption. Treating data as a product means someone on each domain team needs to think about consumers, usability, and continuous improvement, much like a software product manager would. Communication skills also matter, since cross-domain collaboration requires teams to align on standards without bureaucratic overhead.

For enterprises adopting data mesh, building these competencies is often harder than selecting the right technology stack. Kanerika helps organizations assess skill gaps and design training and enablement programs that prepare domain teams to own and deliver high-quality data products independently, which is foundational to making data mesh work in practice.
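As an illustration of what defining and measuring an SLA can look like, the sketch below computes how often a data product met a freshness target. It assumes a hypothetical 24-hour freshness SLA and a made-up history of daily checks; the function names and timestamps are invented for the example and do not come from any particular tool.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_updated: datetime, checked_at: datetime, sla: timedelta) -> bool:
    """True if the data was refreshed within the SLA window at check time."""
    return checked_at - last_updated <= sla


def freshness_compliance(checks, sla: timedelta) -> float:
    """Share of checks (0.0 to 1.0) where the product met its freshness SLA."""
    if not checks:
        return 0.0
    met = sum(is_fresh(updated, checked, sla) for checked, updated in checks)
    return met / len(checks)


def utc(day: int, hour: int) -> datetime:
    """Helper for building the made-up timestamps below (January 2024, UTC)."""
    return datetime(2024, 1, day, hour, tzinfo=timezone.utc)


# Hypothetical history: (time of check, time of the last successful refresh).
history = [
    (utc(1, 8), utc(1, 2)),  # 6 hours old: within SLA
    (utc(2, 8), utc(1, 2)),  # 30 hours old: refresh was missed
    (utc(3, 8), utc(3, 1)),  # 7 hours old: within SLA
    (utc(4, 8), utc(4, 3)),  # 5 hours old: within SLA
]

sla = timedelta(hours=24)
compliance = freshness_compliance(history, sla)
print(f"Freshness SLA met in {compliance:.0%} of checks")
```

A domain team could track a number like this over time and publish it with the data product, so consumers can judge reliability before depending on it.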

Is data mesh an agile approach?

Data mesh is inherently an agile approach to data management, applying the same decentralization and autonomy principles that agile software development brought to application teams. Instead of routing every data request through a central team, data mesh gives domain teams ownership of their own data products, letting them iterate, update, and improve independently without waiting on a bottleneck.

The agile parallel runs deep. Just as agile broke monolithic software delivery into small, cross-functional teams working in parallel, data mesh breaks monolithic data pipelines into domain-owned data products managed by teams closest to the source. This reduces dependencies, shortens delivery cycles, and makes the overall data organization more responsive to changing business needs.

Federated governance adds structure without sacrificing speed, similar to how agile frameworks like Scrum provide discipline within flexible team structures. Teams follow shared standards for data quality, security, and interoperability, but retain autonomy over how they build and manage their specific data products.

For enterprises dealing with slow, centralized data pipelines that struggle to keep pace with business demands, data mesh offers a practical path forward. Kanerika helps organizations design and implement data mesh architectures that balance domain autonomy with enterprise-wide governance, making the transition to agile data management practical rather than theoretical.

What is SAP data mesh?

SAP data mesh is an architectural approach that applies data mesh principles specifically within SAP environments, enabling organizations to decentralize their SAP data assets across domain-specific teams rather than consolidating everything into a central data warehouse or data lake.

In practice, SAP data mesh connects SAP systems like S/4HANA, BW/4HANA, and SAP Datasphere into a federated data ecosystem where individual business domains, such as finance, supply chain, or procurement, own and manage their SAP data products independently. SAP Datasphere serves as the primary technical enabler here, offering built-in support for data product creation, federated access, and cross-domain data sharing without requiring raw data movement.

This approach solves a common enterprise problem where centralized SAP data management creates bottlenecks, slows analytics delivery, and reduces data quality accountability. By assigning domain ownership, teams closest to the SAP data are responsible for its accuracy, freshness, and usability, which directly improves downstream analytics and reporting outcomes.

For large enterprises running complex SAP landscapes, implementing SAP data mesh also reduces dependency on central IT teams for every data request, accelerating time-to-insight across business functions. Kanerika helps organizations design and implement SAP data mesh architectures that align SAP data product ownership with broader enterprise data governance frameworks, ensuring interoperability across both SAP and non-SAP data sources.