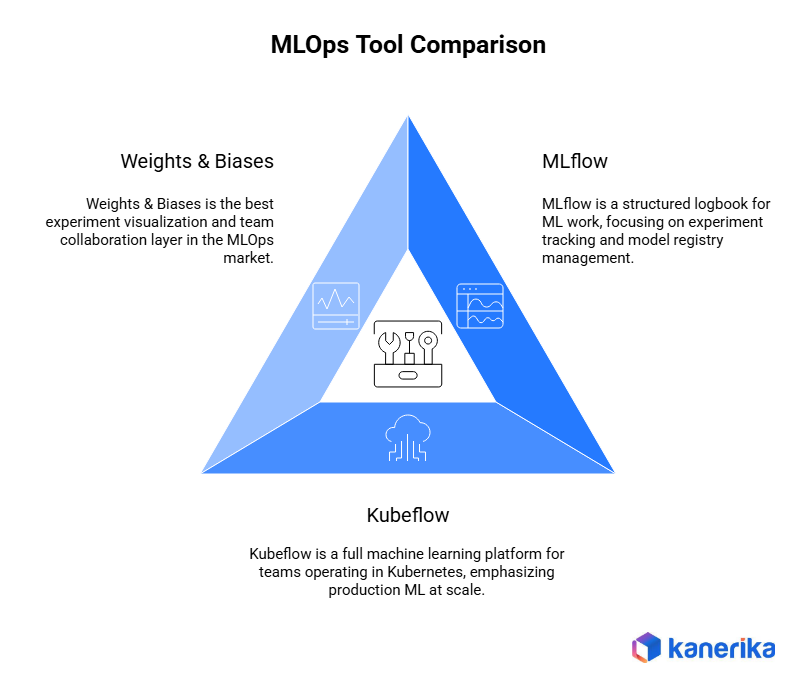

TL;DR: MLflow, Kubeflow, and Weights & Biases solve fundamentally different problems. MLflow is your experiment ledger. Kubeflow is your production orchestrator. W&B is your collaboration and visualization layer. Most enterprise teams end up using two of them together — the mistake is treating this as a pick-one decision without a plan for how they fit together.

Key Takeaways

- Tool-team fit matters more than raw capability. The most powerful MLOps platform is not the right one if your team lacks the infrastructure maturity to run it.

- Kubeflow carries the highest total cost of ownership — not in licensing, but in Kubernetes infrastructure and the DevOps headcount required to operate it.

- W&B leads on LLM observability through its Weave product, making it the strongest choice for generative AI and LLM-first teams building production applications today.

- MLflow wins on simplicity and ubiquity — zero infrastructure overhead, free open-source licensing, and native integration with nearly every ML framework in production use.

- Regulated industries need extra compliance engineering regardless of tool choice. None of the three provide audit-ready governance out of the box.

- Most mature enterprise ML teams use two of these tools by design — the stack architecture matters as much as the individual tool.

When the “Best MLOps Tool” Becomes the Wrong Choice

A data science lead at a mid-sized financial services firm once made a decision that looked reasonable on paper. The team needed an MLOps platform. A conference speaker had called Kubeflow “enterprise-grade,” the documentation looked thorough, and the feature set was impressive. So they committed.

Six months later, two of their eight engineers were spending 40% of their time maintaining the Kubernetes cluster — not building models. Nobody on the team had Kubernetes production experience when the project started. The most powerful tool in the room had quietly become the biggest bottleneck.

This is exactly what standard MLOps tool comparisons miss. The MLOps market is projected to reach $12.8 billion by 2030 (MarketsandMarkets, 2024), and yet according to widely cited VentureBeat research, 87% of data science projects never make it into production. The bottleneck is rarely the model itself. It’s the mismatch between the platform a team selects and the operational reality they’re working inside.

MLflow, Kubeflow, and Weights & Biases represent three different philosophies about how ML teams should operate. Understanding those philosophies — not just the feature checklists — is what this comparison is actually for.

What Each MLOps Tool Is Actually Built For

MLflow: ML Experiment Tracking and Model Registry

MLflow is a structured logbook for ML work. It is one of the most widely deployed open-source MLOps components, and that adoption reflects what it does well: experiment tracking, model versioning, and model registry management with near-zero setup friction.

Built by Databricks in 2018, MLflow has four core pillars: Tracking (log parameters, metrics, and artifacts), Projects (reproducible code packaging), Models (model format standardization), and Registry (centralized model store with staging lifecycle management). It integrates natively with scikit-learn, TensorFlow, PyTorch, and Hugging Face. Over the 2.x release series, MLflow added native LLM tracking, a prompt engineering UI, and integrations with LangChain and OpenAI, capabilities that have continued to mature through subsequent releases.
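To make the Registry's "staging lifecycle" concrete, here is a stdlib-only toy model. It borrows the stage names that classic MLflow registries use (None, Staging, Production, Archived), but the `ToyRegistry` class and its methods are hypothetical illustrations, not MLflow's API. In this sketch, promoting a version to Production archives whichever version previously held that stage.

```python
from dataclasses import dataclass, field

# Stage names used by classic MLflow registries; everything else here is hypothetical.
STAGES = ("None", "Staging", "Production", "Archived")


@dataclass
class ModelVersion:
    version: int
    run_id: str          # link back to the tracked experiment run
    stage: str = "None"  # new versions start unassigned


@dataclass
class ToyRegistry:
    """In-memory stand-in for a model registry; not MLflow's API."""
    name: str
    versions: list = field(default_factory=list)

    def register(self, run_id: str) -> ModelVersion:
        # Versions are numbered sequentially from 1.
        mv = ModelVersion(version=len(self.versions) + 1, run_id=run_id)
        self.versions.append(mv)
        return mv

    def transition(self, version: int, stage: str) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        if not 1 <= version <= len(self.versions):
            raise KeyError(f"no version {version} in {self.name}")
        if stage == "Production":
            # Keep a single Production version by archiving the current holder.
            for mv in self.versions:
                if mv.stage == "Production" and mv.version != version:
                    mv.stage = "Archived"
        self.versions[version - 1].stage = stage

    def in_stage(self, stage: str) -> list:
        return [mv.version for mv in self.versions if mv.stage == stage]


registry = ToyRegistry("churn-model")
registry.register("run-a")
registry.register("run-b")
registry.transition(1, "Production")
registry.transition(2, "Staging")
registry.transition(2, "Production")  # version 1 is archived automatically
print(registry.in_stage("Production"), registry.in_stage("Archived"))  # [2] [1]
```

The `run_id` field is the important design choice: every registered version points back to the experiment run that produced it, which is what makes the registry useful as a handoff layer.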

Community signal: MLflow has over 18,000 GitHub stars and hundreds of contributors as of 2025. It is among the most-mentioned MLOps tracking tools on Stack Overflow and consistently appears in practitioner surveys.

Honest limitations: MLflow needs Apache Airflow, Prefect, or Kubeflow alongside it for pipeline orchestration — it does not handle workflow scheduling natively. The UI is functional but visually dated compared to W&B. Standalone MLflow also has minimal enterprise security controls without the Databricks platform wrapper, and the model serving story is thin for teams outside the Databricks ecosystem.

When NOT to use MLflow as your primary MLOps platform: your team needs end-to-end pipeline automation with no additional tooling; rich stakeholder dashboards and collaborative reporting are a primary requirement; or you need mature LLM evaluation pipelines without substantial custom engineering effort.

Kubeflow: Production ML Pipeline Orchestration at Scale

Kubeflow is a full machine learning platform for teams that operate in Kubernetes. It was built for production ML at scale — not for experimentation speed, not for solo researchers, and not for teams without dedicated platform engineering support.

Originally developed at Google and now a CNCF incubating project (accepted 2023), Kubeflow is Kubernetes-native by design. Its core components include Pipelines (workflow orchestration), Katib (automated hyperparameter tuning), KServe (model serving), Training Operators (distributed training for PyTorch, TensorFlow, MXNet, XGBoost, and JAX), and Notebooks (Jupyter integration). It is the only tool of the three built for full end-to-end ML pipeline automation, and its on-premises deployment capability makes it the strongest architectural choice for regulated industries where data cannot leave organizational boundaries.

2024 platform update: Kubeflow 1.9, released in September 2024, focused on hardening and stabilizing existing components. Key highlights included Training Operator 1.8 with improved PyTorch support, Pipelines 2.3 with improved caching, and updated Notebooks with a refreshed UI.

Community signal: Kubeflow has over 14,000 GitHub stars. Its CNCF status signals long-term organizational backing, but also the governance overhead that comes with enterprise-grade open-source adoption.

Honest limitations: Setup time is measured in days or weeks, not hours. G2 reviewers in 2024 consistently flag it as impractical for teams without a dedicated DevOps engineer. Infrastructure costs on managed Kubernetes services like GKE or EKS are substantial and vary widely based on workload. Its Kubernetes learning curve is steeper than that of any competing MLOps platform.

When NOT to use Kubeflow: your team has fewer than two engineers with Kubernetes production experience; you need results in days — cluster setup alone will consume your timeline; or budget for ongoing platform engineering headcount is uncertain or unavailable.

Weights & Biases: ML Experiment Visualization, Collaboration, and LLM Observability

Weights & Biases is the best experiment visualization and team collaboration layer in the MLOps market. And since the launch of W&B Weave, it has become a leading choice for LLM observability and generative AI application monitoring.

W&B was founded in 2017 by Lukas Biewald, Chris Van Pelt, and Shawn Lewis; Biewald and Van Pelt had previously co-founded CrowdFlower (later Figure Eight). Its core components include Experiments (experiment tracking), Sweeps (automated hyperparameter optimization), Artifacts (dataset and model versioning), Reports (collaborative stakeholder sharing), Registry (model management), and Weave (LLM observability and evaluation). The visualization quality, real-time dashboards, and collaborative reporting are best-in-class across the MLOps landscape. W&B holds SOC 2 Type II certification, offers a HIPAA BAA for its Enterprise tier, and provides a private cloud deployment option for data-sensitive environments.

Recent platform updates: W&B Weave expanded its evaluation framework to include structured agent trace logging and multi-turn conversation evaluation, making it meaningfully more capable for production agentic AI workflows.

Community signal: W&B has over 9,000 GitHub stars on its core SDK. Adoption has accelerated sharply in generative AI teams since 2023, and it is widely used across AI research teams globally.

Honest limitations: Per-seat pricing at $50 per user per month (Teams tier, billed annually) scales uncomfortably for large organizations. The free tier usage limits are too restrictive for production use cases. The cloud-first architecture also raises data residency concerns for regulated industries without explicit private cloud configuration.

When NOT to use W&B as your primary MLOps platform: your budget is constrained and the team has more than 20 active users; data cannot leave your organizational boundary without explicit architectural controls; or you need native ML pipeline orchestration without layering in additional tooling.

Head-to-Head MLOps Tool Comparison: The Dimensions That Actually Matter

Setup Time and Time-to-Value

MLflow and W&B both take under an hour to set up for a first tracked experiment. Kubeflow is an infrastructure commitment — for most teams, getting a first production experiment running on a properly configured cluster is a week-two milestone at best. For organizations under pressure to demonstrate MLOps value quickly, Kubeflow is the wrong starting point regardless of its ceiling capabilities.

Experiment Tracking and Visualization Quality

W&B wins this dimension clearly. Real-time dashboards, interactive charts, and collaborative Reports are best-in-class among all major MLOps platforms. MLflow is functional but visually limited — G2 reviewers note the UI has not kept pace with W&B’s development. Kubeflow does not meaningfully compete here; experiment tracking is not its primary design goal.

The visualization gap matters more than it sounds. When data literacy is uneven across a team, strong dashboards reduce friction between data scientists, engineers, and business stakeholders who need to interpret model behavior without reading raw logs.

Pipeline Orchestration and Workflow Automation

Kubeflow Pipelines is the only native ML pipeline orchestration solution among the three. MLflow handles model packaging and serving but not workflow scheduling or automation. W&B has no native pipeline orchestration at all. If orchestration is the core requirement, Kubeflow is the clear answer — provided the team has the infrastructure maturity to run it.
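To illustrate what "orchestration" adds beyond tracking, the sketch below resolves a pipeline's step dependencies and runs the steps in a valid order using Python's standard-library `graphlib` (Python 3.9+). The step names and `run_step` are hypothetical stand-ins; a real orchestrator such as Kubeflow Pipelines also handles containerized execution, retries, caching, and scheduling, none of which this toy attempts.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each step maps to the set of steps it depends on.
pipeline = {
    "ingest": set(),
    "validate": {"ingest"},
    "features": {"validate"},
    "train": {"features"},
    "evaluate": {"train"},
    "register": {"evaluate"},
    "baseline_report": {"features"},
}


def run_step(name: str) -> str:
    # Stand-in for launching a task; here it only records that the step ran.
    return f"ran {name}"


def run_pipeline(dag: dict) -> list:
    """Execute steps in dependency order; raises graphlib.CycleError on cycles."""
    order = list(TopologicalSorter(dag).static_order())
    return [run_step(step) for step in order]


log = run_pipeline(pipeline)
for entry in log:
    print(entry)
```

Experiment trackers record what happened inside each step; the dependency graph and its execution order are the part that MLflow and W&B leave to an external orchestrator.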

Scalability and Distributed Training

Kubeflow’s Training Operators manage distributed PyTorch, TensorFlow, MXNet, XGBoost, and JAX workloads natively. W&B scales well for experiment tracking and hyperparameter sweeps. MLflow scales reasonably for tracking but has meaningful limits for model serving outside the Databricks platform.

Kubeflow’s Kubernetes-native design is the most natural fit for teams managing model training across hybrid cloud environments — some workloads on-premises, others in public cloud. It abstracts the compute layer cleanly across providers in a way neither MLflow nor W&B can match.

LLM and Generative AI Readiness

This is the dimension most MLOps comparisons miss entirely. W&B Weave is the most purpose-built LLM observability layer among the three: trace logging, prompt management, evaluation pipelines, and agent monitoring in one integrated interface. MLflow has added strong LLM tracking with LangChain and OpenAI integrations — a competitive option for teams already on Databricks. Kubeflow supports distributed LLM fine-tuning and large model serving through Training Operators and KServe, but has no native LLM observability tooling.

For teams building on generative AI or private LLMs, the capability differences here are significant enough to warrant their own framework. LLM work has a different operational profile than classical ML — prompt versioning, trace logging, multi-turn evaluation, and agent monitoring are not things you can bolt on later.
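Trace logging is easier to reason about with a concrete shape. The stdlib-only sketch below wraps an LLM-calling function in a decorator that records inputs, output, latency, and a hash of the prompt template, so every trace is tied to a specific prompt version. The `traced` decorator, the `TRACES` store, and `fake_llm` are hypothetical; W&B Weave and MLflow ship their own tracing APIs, which this does not reproduce.

```python
import functools
import hashlib
import time

TRACES = []  # hypothetical in-memory trace store


def prompt_version(template: str) -> str:
    # Content hash: identical templates always map to the same version id.
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


def traced(template: str):
    """Record inputs, output, latency, and prompt version for each call."""
    version = prompt_version(template)

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(**inputs):
            start = time.perf_counter()
            output = fn(template.format(**inputs))  # fn receives the rendered prompt
            TRACES.append({
                "op": fn.__name__,
                "prompt_version": version,
                "inputs": inputs,
                "output": output,
                "latency_s": time.perf_counter() - start,
            })
            return output
        return wrapper
    return decorator


SUMMARY_PROMPT = "Summarize in one sentence: {text}"


@traced(SUMMARY_PROMPT)
def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call.
    return prompt.upper()


fake_llm(text="the model drifted last week")
print(TRACES[0]["op"], TRACES[0]["prompt_version"])
```

Hashing the template rather than the rendered prompt means every call made with the same template shares a version id, which is what lets traces be grouped and compared per prompt version.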

| LLM and GenAI Capability | MLflow | Kubeflow | W&B Weave |
|---|---|---|---|
| Prompt versioning and tracking | Yes (native) | No | Yes (native) |
| LLM evaluation pipelines | Partial | No | Yes (native) |
| Agent trace logging | Partial | No | Yes |
| Multi-turn conversation evaluation | No | No | Yes |
| LangChain integration | Native | No | Native |
| OpenAI API call tracking | Native | No | Native |
| Large model serving infrastructure | Via Databricks | Yes (KServe) | No |
| LLM inference cost tracking | Partial | No | Yes |

W&B Weave is the clear leader for teams primarily building, evaluating, and monitoring LLM-based applications. MLflow is a credible alternative for teams embedded in the Databricks ecosystem. Kubeflow’s role in LLM workflows is narrow but real: if you need to serve large models at production scale, KServe is genuine infrastructure.

Vendor Lock-in Risk

This dimension almost never appears in MLOps platform comparisons. Once experiment history, production pipelines, and collaborative reports accumulate over 18 months, switching tools becomes a significant engineering project. The switching cost is asymmetric — it looks manageable at the start of an evaluation and becomes genuinely expensive later.

| Lock-in Dimension | MLflow | Kubeflow | W&B |
|---|---|---|---|
| License type | Apache 2.0 (open-source) | Apache 2.0 (open-source) | Proprietary SaaS |
| Data portability | High | High | Medium |
| Experiment history export | Standard open formats | Standard open formats | API export (partial) |
| Pipeline portability | N/A | Low (DSL not portable) | N/A |
| Artifact and report formats | Open | Open | Proprietary |
| Cloud dependency | None (OSS) or Databricks (managed) | Kubernetes-native (any K8s provider) | Cloud-first |
| Migration effort (rough estimate) | Low | High (pipeline rewrites) | Medium to High |
| Overall lock-in risk | Low | Low to Medium | Medium to High |

MLflow is the most portable option by design. Kubeflow introduces lock-in not at the vendor level but at the infrastructure level — its Pipeline DSL is not portable across orchestration systems, so teams with 20 or more production pipelines face significant rewrite costs if they ever migrate. W&B presents the highest proprietary lock-in: experiment data lives in W&B’s cloud, collaborative Reports are not portable, and Sweeps history does not migrate cleanly to alternative platforms.

Security, Compliance, and Data Privacy

Kubeflow’s fully on-premises deployment makes it the strongest baseline for environments where data cannot leave organizational boundaries. W&B Enterprise offers SOC 2 Type II and a HIPAA BAA, but its cloud-first architecture requires careful security assessment for regulated contexts. MLflow on Databricks provides workspace-level isolation and audit logging; standalone MLflow has minimal enterprise security controls without the platform wrapper.

One fact most comparisons skip: all three tools require significant security engineering on top of the default installation to meet enterprise compliance standards. None provide audit-ready compliance out of the box.

Partner with Kanerika to Modernize Your Enterprise Operations with High-Impact Data & AI Solutions

Total Cost of Ownership: What MLOps Tooling Actually Costs

Licensing is almost never the real cost. The table below lays out the full picture — including the cost categories that routinely catch teams off guard. Kubeflow appears free until you price the Kubernetes infrastructure and the platform engineer required to run it. W&B appears affordable per seat until you multiply by 40 people and add artifact storage fees.

| Cost Component | MLflow | Kubeflow | W&B |
|---|---|---|---|
| License | Free (open-source) | Free (open-source) | ~$50/user/month (Teams tier, annual billing) |
| Infrastructure | Low (single server or VM) | High (production Kubernetes clusters can run into thousands per month) | Low (SaaS, no infrastructure to manage) |
| Setup and implementation | Low (hours) | High (weeks; professional services often needed) | Low (hours) |
| DevOps and platform engineering | Low | High (often 0.5–1 FTE dedicated) | None |
| Storage at scale | Low to medium | Medium (artifact and pipeline storage) | Medium (artifact storage billed separately) |
| Enterprise support | Via Databricks | Community plus vendor support contracts | Included in Enterprise tier |
| Small team estimate (10 people) | $1,200–$5,000/year | $24,000–$36,000/year | $6,000/year |
| Growth team estimate (50 people) | $30,000–$60,000/year | $60,000–$200,000+/year | $30,000/year |
| Enterprise estimate (200 people) | Scales with compute | $200,000–$400,000+/year | $120,000+/year |

The cost story inverts at different team sizes. For small teams, W&B and MLflow are both affordable and Kubeflow is expensive relative to value delivered. At growth stage, MLflow and W&B land in a similar range, while Kubeflow costs more and most of that cost sits in the infrastructure and headcount budget rather than the software line, where it is easy to overlook. At enterprise scale, W&B’s per-seat model becomes the largest recurring software-license line item.

MLflow on Databricks is often the most cost-predictable option at large scale, since compute costs are the primary driver and can be right-sized to workload. The real question is not which platform costs less — it is which cost structure fits your budget model over a three-year horizon.
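The per-seat figures in the cost table follow from simple arithmetic, and the three-year framing is easy to model. The sketch below uses the ~$50/user/month Teams-tier price from the table; the Kubeflow cluster spend, FTE fraction, loaded salary, and seat-growth path are hypothetical placeholders to replace with your own numbers, not benchmarks.

```python
# Seat price from the cost table; every other input below is a hypothetical placeholder.
WB_SEAT_PRICE_MONTHLY = 50  # ~$50/user/month, Teams tier, annual billing


def wb_annual_license(users: int, seat_price: float = WB_SEAT_PRICE_MONTHLY) -> float:
    """Per-seat SaaS license: users x monthly price x 12 (artifact storage excluded)."""
    return users * seat_price * 12


def kubeflow_annual_cost(infra_monthly: float, platform_fte: float,
                         loaded_salary: float) -> float:
    """No license fee: cluster spend plus the platform-engineering share of salary."""
    return infra_monthly * 12 + platform_fte * loaded_salary


for users in (10, 40, 50, 200):
    print(users, wb_annual_license(users))
# prints 10 6000, 40 24000, 50 30000, 200 120000 (one pair per line)

# Hypothetical Kubeflow inputs: $1,500/month cluster, 0.5 FTE at $180,000 loaded cost.
print(kubeflow_annual_cost(infra_monthly=1_500, platform_fte=0.5, loaded_salary=180_000))
# prints 108000.0

# Three-year horizon with hypothetical seat growth of 20 -> 35 -> 50 users.
print(sum(wb_annual_license(n) for n in (20, 35, 50)))
# prints 63000
```

With these placeholder inputs, $90,000 of the $108,000 Kubeflow figure is headcount rather than infrastructure, which is exactly the part that never shows up on a software invoice.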

How to Choose an MLOps Platform: A Decision Framework by Maturity Level

The right frame for tool selection is team MLOps maturity level crossed with team archetype. Google’s MLOps maturity model defines three levels: Level 0 for manual workflows with no automation, Level 1 for automated training pipelines, and Level 2 for full CI/CD for machine learning. Each level calls for different tooling and different infrastructure investment.

Before any feature comparison, these four questions should eliminate at least one option for most teams:

- Does your team have at least one engineer with Kubernetes production experience? If not, Kubeflow as the primary platform is the wrong starting point.

- Is per-seat SaaS pricing sustainable as the team scales past 30 people? If not, model W&B’s cost trajectory explicitly before committing.

- Does your data have regulatory constraints that prevent cloud storage? If yes, Kubeflow on-premises or MLflow on a private Databricks instance are the architecturally defensible choices.

- Are you primarily building LLM or generative AI applications? If yes, W&B Weave’s observability layer is worth the pricing premium at small-to-medium team sizes.
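The four questions work as filters rather than scores. As a rough sketch, they can be encoded as below; the thresholds (at least one Kubernetes engineer, more than 30 people) come from the questions themselves, while the `TeamProfile` fields, the `screen` function, and the note wording are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    k8s_prod_engineers: int       # engineers with Kubernetes production experience
    expected_headcount: int       # projected team size over the planning horizon
    per_seat_budget_ok: bool      # is per-seat SaaS pricing sustainable at that size?
    data_must_stay_on_prem: bool  # regulatory constraint preventing cloud storage
    llm_first: bool               # primarily building LLM or GenAI applications


def screen(team: TeamProfile) -> dict:
    """Apply the four elimination questions; returns a list of notes per tool."""
    notes = {"MLflow": [], "Kubeflow": [], "W&B": []}
    # Q1: no Kubernetes production experience rules Kubeflow out as the primary platform.
    if team.k8s_prod_engineers < 1:
        notes["Kubeflow"].append("avoid as primary: no Kubernetes production experience")
    # Q2: past 30 people, unsustainable per-seat pricing needs an explicit cost model.
    if team.expected_headcount > 30 and not team.per_seat_budget_ok:
        notes["W&B"].append("model per-seat cost trajectory before committing")
    # Q3: data residency constraints favor on-premises or private deployments.
    if team.data_must_stay_on_prem:
        notes["Kubeflow"].append("defensible when run on-premises")
        notes["MLflow"].append("defensible on a private Databricks instance")
        notes["W&B"].append("only if private cloud is configured")
    # Q4: LLM-first teams gain most from Weave at small-to-medium sizes.
    if team.llm_first:
        notes["W&B"].append("Weave observability likely worth the premium at small-to-medium size")
    return notes


llm_startup = TeamProfile(k8s_prod_engineers=0, expected_headcount=8,
                          per_seat_budget_ok=True, data_must_stay_on_prem=False,
                          llm_first=True)
for tool, tool_notes in screen(llm_startup).items():
    print(tool, tool_notes)
```

Returning notes instead of a single winner keeps the sketch honest about what the questions do: they narrow the field, and the maturity assessment still decides among what remains.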

Most teams know their headcount but underestimate the maturity question. A 25-person team with no dedicated platform engineer is operationally a small team regardless of org chart size. Honest maturity assessment matters more than headcount alone.

| Team Archetype | MLOps Maturity | Recommended Primary Tool | Recommended Secondary Tool | Tool to Avoid |
|---|---|---|---|---|
| Solo researcher or 1–5 person team | Level 0 | MLflow | W&B (if dashboards needed) | Kubeflow |
| Growth-stage ML team, cloud-native (5–20 people) | Level 0 to 1 | W&B | MLflow (model registry) | Kubeflow (until DevOps capacity is hired) |
| Enterprise platform team (20+ people, production ML) | Level 1 to 2 | Kubeflow | MLflow or W&B for tracking | None |
| Regulated industry (healthcare, finance, insurance) | Level 1 to 2 | Kubeflow (on-premises) | MLflow on Databricks | W&B (unless private cloud is configured) |
| LLM-first or generative AI team | Level 0 to 1 | W&B Weave | MLflow (for Databricks teams) | Kubeflow (unless serving large models at scale) |
| Research lab with stakeholder reporting requirements | Level 0 to 1 | W&B | MLflow | Kubeflow |

The maturity level is the variable that overrides everything else. A team operating at Level 0 with Kubeflow is paying production infrastructure costs to maintain a platform their workflows do not yet justify.

How Enterprise Teams Combine MLflow, Kubeflow, and W&B in Practice

Most comparison articles treat this as a pick-one decision. Real production ML environments do not work that way. The pattern that emerges across mature enterprise ML teams is not “which tool won” but “which tool owns which layer.”

Combination design matters as much as individual tool selection. Teams that adopt tools reactively — one for this project, another for that team — routinely end up with five to seven disconnected systems, no single model registry, and no coherent audit trail across the ML lifecycle.

| Stack Pattern | Tools Used | Who It Fits | What Each Tool Owns |
|---|---|---|---|
| Research-to-Production Bridge | W&B + MLflow + Kubeflow | Mid-to-large teams with distinct research and platform engineering functions | W&B: research tracking and stakeholder collaboration; MLflow: handoff layer and model registry; Kubeflow: production pipeline orchestration and serving |
| Databricks-Native Stack | MLflow (managed) + W&B (optional) | Organizations already invested in Databricks | MLflow: tracking, registry, governance; integrates with Databricks Lakeflow for end-to-end pipeline management |
| Kubernetes-Native Enterprise Stack | Kubeflow + W&B + KServe | Platform-mature organizations with strong DevOps capability | Kubeflow: orchestration backbone; W&B: experiment tracking and visualization; KServe: production model serving |
| Lightweight Hybrid | MLflow + W&B | Small-to-mid teams needing tracking and dashboards without orchestration overhead | MLflow: model registry and staging lifecycle; W&B: experiment visualization and hyperparameter sweeps |
| On-Premises Regulated Stack | Kubeflow (on-premises) + MLflow | Healthcare, finance, and insurance teams with strict data residency requirements | Kubeflow: orchestration and governance backbone; MLflow: tracking layer; no SaaS components in the architecture |

The Research-to-Production Bridge is the most common pattern at mid-to-large enterprises. It lets research and platform teams operate with different tools without creating governance gaps, as long as MLflow acts as the explicit handoff and registry layer. The Databricks-Native Stack carries the lowest operational overhead for teams already paying for Databricks.

The On-Premises Regulated Stack is the most restrictive but the only defensible architecture when data residency requirements are non-negotiable. And the warning that applies to all patterns: the combination must be designed intentionally — with explicit ownership of the model registry and audit trail — before any tools are deployed, not after three teams have adopted three different systems independently.
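"Which tool owns which layer" can be checked mechanically before anything is deployed. The sketch below encodes the Research-to-Production Bridge from the stack-pattern table as a tool-to-layer map and flags layers with no owner or more than one. The layer names are a hypothetical breakdown chosen for illustration, and the audit trail is deliberately left unowned because none of the three tools provides audit-ready governance natively.

```python
LAYERS = ("experiment_tracking", "model_registry", "orchestration", "serving", "audit_trail")

# Research-to-Production Bridge, as described in the stack-pattern table.
bridge_stack = {
    "W&B": {"experiment_tracking"},
    "MLflow": {"model_registry"},
    "Kubeflow": {"orchestration", "serving"},
}


def ownership_gaps(stack: dict) -> dict:
    """Return layers with zero owners ('unowned') or several ('contested')."""
    owners = {layer: [] for layer in LAYERS}
    for tool, layers in stack.items():
        for layer in layers:
            if layer not in owners:
                raise KeyError(f"unknown layer: {layer}")
            owners[layer].append(tool)
    return {
        "unowned": [layer for layer, tools in owners.items() if not tools],
        "contested": {layer: sorted(tools) for layer, tools in owners.items() if len(tools) > 1},
    }


print(ownership_gaps(bridge_stack))
# {'unowned': ['audit_trail'], 'contested': {}}

# Two teams adopting trackers independently produces a contested layer.
ad_hoc = {"W&B": {"experiment_tracking"}, "MLflow": {"experiment_tracking", "model_registry"}}
print(ownership_gaps(ad_hoc)["contested"])
# {'experiment_tracking': ['MLflow', 'W&B']}
```

An unowned layer is a governance gap to design for explicitly; a contested one is the early signature of the "three teams, three systems" drift described above.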

MLOps Tool Integration Matrix: What Connects to What

Tool selection in enterprise environments is rarely a greenfield decision. Most teams have existing cloud infrastructure, data platforms, and orchestration tooling already in place. A tool that requires significant integration work to fit an existing cloud environment adds hidden cost that does not appear in any licensing comparison.

| Integration Target | MLflow | Kubeflow | W&B |
|---|---|---|---|
| AWS SageMaker | Native | Via Kubernetes | SDK integration |
| Azure ML | Native | Via AKS | SDK integration |
| Google Vertex AI | Partial | Native (GKE) | SDK integration |
| Databricks | Native (managed) | Partial | SDK integration |
| Apache Airflow | Common integration pattern | Built-in Pipelines (replaces Airflow) | No native integration |
| Hugging Face | Native | Via Training Operators | Native |
| LangChain | Native | No | Native (Weave) |
| dbt | Partial | No | No |
| Apache Spark | Via Databricks | Via Training Operators | Limited |
| Terraform | Community-supported | Strong (Kubernetes-native) | Limited |

The absence of native dbt integration across all three tools is a real gap for teams managing feature engineering in dbt — expect custom pipeline work regardless of which platform is selected.

MLOps Tool Migration Paths: What Switching Actually Costs

Teams rarely talk publicly about what it costs to migrate between MLOps platforms. But migration decisions happen, and the cost is consistently underestimated. The right time to evaluate migration cost is before committing, not 18 months into production use.

| Migration Path | Effort Level | Primary Friction Points | Realistic Time Estimate |
|---|---|---|---|
| MLflow to W&B | Low to Medium | Model registry migration, dashboard rebuild | 1 to 3 weeks |
| W&B to MLflow | Medium to High | Proprietary artifact and report formats; some historical data loss likely | 2 to 4 weeks |
| MLflow to Kubeflow (additive) | Low to Medium | Integration design, pipeline setup; MLflow continues as the tracking layer | 2 to 4 weeks |
| Kubeflow to Vertex AI or SageMaker Pipelines | Very High | Pipeline DSL rewrite required across all production pipelines | 2 to 4 months |
| W&B to self-hosted alternative (e.g., MLflow) | Medium to High | Experiment history export via API only; Reports do not port | 2 to 4 weeks plus data loss |
| Kubeflow to ZenML (abstraction layer) | Medium | ZenML wraps Kubeflow backend; existing pipelines need adaptation | 4 to 8 weeks |

The additive path — adopting Kubeflow on top of an existing MLflow setup — is the most common migration direction for growing enterprise teams and carries the lowest risk. MLflow continues to own tracking; Kubeflow takes over orchestration. Most teams complete the initial integration in two to four weeks.

The most expensive migration by far is moving off Kubeflow to another orchestration system. Its Pipeline DSL is not portable, meaning production pipelines require full rewrites. Teams with 20 or more active production pipelines should treat Kubeflow as a long-term architectural commitment. W&B’s proprietary data formats make it the riskiest tool to exit among the three — budget for engineering time and accept some historical data loss before committing at scale.

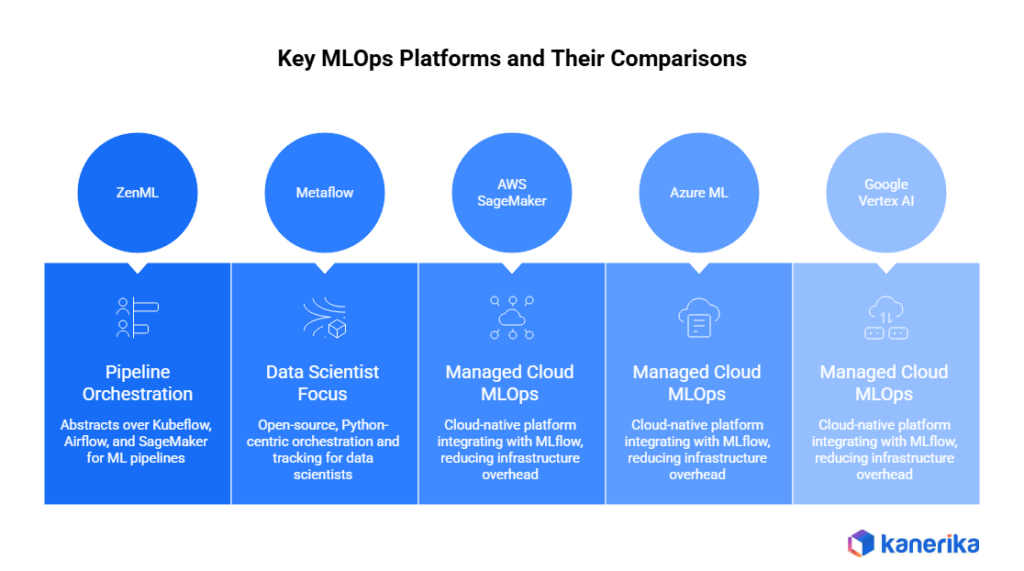

How MLflow, Kubeflow, and W&B Compare to Other MLOps Platforms

MLflow, Kubeflow, and W&B are not the only options in the machine learning operations space. Understanding where they sit relative to adjacent tools clarifies the decision — especially for teams that have already evaluated or ruled out alternatives.

ZenML is a pipeline orchestration framework that abstracts over multiple execution backends, including Kubeflow, Airflow, and AWS SageMaker Pipelines. It is less operationally demanding than running Kubeflow directly and is worth evaluating for teams that want ML pipeline orchestration without the full Kubernetes infrastructure commitment. Its tracking and visualization depth does not match MLflow or W&B.

Metaflow (open-source, originally from Netflix) is optimized for data scientists — minimal infrastructure knowledge required, strong Python ergonomics, and a unified interface for both orchestration and tracking. It is less capable than Kubeflow at production scale but faster to adopt than any of the three tools in this comparison.

AWS SageMaker, Azure ML, and Google Vertex AI are managed cloud MLOps platforms. SageMaker and Azure ML integrate with MLflow natively, while Vertex AI’s MLflow support is partial. All three reduce infrastructure overhead significantly compared to self-managed Kubeflow. The trade-off is cloud vendor lock-in and cost structures that can exceed self-managed alternatives at large scale.

| Alternative Tool | Best For | Weakest At | How It Compares |
|---|---|---|---|
| ZenML | Teams wanting orchestration without full Kubernetes commitment | Tracking depth and visualization | Easier entry point than Kubeflow; less capable at production scale |
| Metaflow | Data scientists with minimal infrastructure knowledge | Production scale and enterprise governance | Fastest to adopt of any option; least powerful at scale |
| AWS SageMaker | AWS-native teams that want managed MLOps | Multi-cloud flexibility | Lowest operational overhead; highest cloud vendor lock-in |
| Azure ML | Azure and Databricks teams | Open-source flexibility | Integrates natively with MLflow; reduces the case for standalone Kubeflow |
| Google Vertex AI | GKE-native teams | Portability across cloud providers | Kubeflow’s natural managed cloud complement |

The three tools in this comparison are most commonly evaluated together because, for teams that need flexibility and portability across providers, they span experiment tracking, pipeline orchestration, and visualization in a way no single managed cloud platform matches. But they are not automatically the right choice over simpler or more managed alternatives, especially for earlier-stage teams.

Choosing between Kubeflow and SageMaker Pipelines is really choosing between operational control and operational simplicity. Neither is wrong. The answer depends entirely on whether the team has the platform engineering capacity to justify the control.

The Gaps That MLflow, Kubeflow, and W&B Leave Unresolved

Being honest about what all three tools leave unresolved is as important as understanding what they do well. These are not edge cases — they are consistent gaps across real enterprise deployments.

End-to-end model governance is not a native feature in any of the three platforms. Audit trails, model approval workflows, and regulatory reporting all require deliberate architectural decisions and custom implementation on top of whichever tool is selected. Teams that assume the platform handles compliance are building on a false foundation.
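As one illustration of that custom layer, the sketch below keeps an append-only audit log for model approval events in which each entry stores the hash of the previous entry, so any later edit to history breaks verification. Hash chaining is a swapped-in technique chosen for illustration, not a feature of MLflow, Kubeflow, or W&B, and the `AuditLog` class and its event fields (`model`, `version`, `action`, `actor`) are hypothetical.

```python
import hashlib
import json


class AuditLog:
    """Append-only log; each entry commits to the hash of the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(payload: dict, prev_hash: str) -> str:
        # sort_keys makes the serialization, and therefore the hash, deterministic.
        body = json.dumps(payload, sort_keys=True) + prev_hash
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def record(self, **payload) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"payload": payload, "prev": prev, "hash": self._digest(payload, prev)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        # Recompute every hash from the start; any edited entry breaks the chain.
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry["payload"], prev):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record(model="credit-risk", version=7, action="requested_approval", actor="ds_alice")
log.record(model="credit-risk", version=7, action="approved", actor="risk_bob")
print(log.verify())  # True

log.entries[1]["payload"]["actor"] = "someone_else"  # tamper with history
print(log.verify())  # False
```

Tamper evidence is only one piece; who may approve, and which registry transitions require approval, still have to be defined as policy around whichever tool holds the models.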

Business-layer monitoring is consistently underserved across the MLOps landscape. All three tools surface technical metrics, but none natively ties model performance drift to business KPIs (revenue impact, downstream decision error rates, customer outcomes) without additional engineering work.
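To show what that additional engineering work can look like, the sketch below computes a Population Stability Index (PSI), one common drift statistic, over binned score distributions and reports it in the same record as a business KPI. PSI is a swapped-in technique chosen for illustration; the bin proportions, the 0.25 alert threshold (a frequently cited rule of thumb, not a standard), and the `loan_approval_rate` KPI are all hypothetical.

```python
import math


def psi(expected: list, actual: list, eps: float = 1e-6) -> float:
    """Population Stability Index: sum of (a - e) * ln(a / e) over matching bins."""
    if len(expected) != len(actual):
        raise ValueError("bin counts must match")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


def drift_report(expected, actual, kpi_name, kpi_before, kpi_after, threshold=0.25):
    """Put the technical drift signal and the business KPI side by side."""
    score = psi(expected, actual)
    return {
        "psi": round(score, 4),
        "drift_alert": score > threshold,
        kpi_name: {"before": kpi_before, "after": kpi_after,
                   "delta": round(kpi_after - kpi_before, 4)},
    }


# Hypothetical score distributions (share of predictions per bin) and KPI values.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.05, 0.15, 0.30, 0.50]
print(drift_report(baseline, current, "loan_approval_rate", 0.42, 0.35))
```

The statistic itself is the easy part; the engineering effort goes into sourcing the KPI from business systems and aligning its time window with the prediction window.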

Cross-tool data lineage breaks down when teams use two or three of these platforms in combination. Tracking lineage from raw data through feature engineering, training, and production prediction becomes a manual exercise across tool boundaries.
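Because no single tool sees the whole chain, lineage usually has to be stitched together from identifiers each tool already emits. The sketch below stores lineage edges (raw dataset snapshot, feature job, training run, registry version, pipeline run, deployment) and walks from a production deployment back to raw data. Every identifier is invented for illustration, and real lineage is usually a graph with multiple parents; a single-parent chain keeps the sketch short.

```python
# Hypothetical lineage edges: each artifact maps to the parent it was derived from.
LINEAGE = {
    "deploy:fraud-v12": "pipeline_run:kfp-8841",
    "pipeline_run:kfp-8841": "registry:fraud/12",
    "registry:fraud/12": "train_run:mlflow-3fa9",
    "train_run:mlflow-3fa9": "features:fraud_features@2024-05-01",
    "features:fraud_features@2024-05-01": "raw:transactions@sha256:9c1e",
}


def trace_back(node: str, edges: dict) -> list:
    """Follow parent links until a root is reached; guards against cycles."""
    path, seen = [node], {node}
    while node in edges:
        node = edges[node]
        if node in seen:
            raise ValueError(f"cycle detected at {node}")
        path.append(node)
        seen.add(node)
    return path


for step in trace_back("deploy:fraud-v12", LINEAGE):
    print(step)
```

The hard part in practice is not the walk but keeping the edge table complete: every tool boundary is a place where an identifier has to be written down by someone.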

Organizational alignment is the gap no MLOps platform addresses at all. Getting data science, engineering, and business stakeholders aligned on a shared toolchain is a change management challenge, not a technical one. The right tool selection addresses roughly 40% of the MLOps problem. The other 60% is architecture, implementation, governance, and the organizational discipline to operate the stack consistently over time.

How Kanerika Approaches MLOps Stack Design for Enterprise Teams

Kanerika is a Microsoft Solutions Partner for Data and AI, with delivery experience across financial services, healthcare, manufacturing, and supply chain. MLOps architecture recommendations come from what these industries actually require operationally — not what performs well in benchmark comparisons or conference presentations.

Every engagement begins with an MLOps maturity assessment: evaluating where a team actually sits on the maturity curve before recommending any tooling. Tool recommendations follow architecture requirements, not the other way around. That means recommending MLflow when simplicity and cost efficiency fit the context, W&B when collaboration is the bottleneck, and Kubeflow when production scale genuinely demands it.

The pattern seen consistently across enterprise engagements: teams select a tool for its ceiling rather than its fit. The fix is right-sizing the scope of what each tool is asked to do and designing the governance layer that sits above all of them.

Quick Reference: MLflow vs Kubeflow vs W&B Platform Comparison

For teams that have worked through the full analysis and need a single reference to share internally, the table below consolidates the key dimensions across all three platforms.

No single row determines the right choice. The dimensions that matter most vary by team context. But if one row deserves the most weight for most enterprise readers, it is vendor lock-in risk — it is the dimension that looks least important at the start of an evaluation and most important 18 months into production use.

| Comparison Dimension | MLflow | Kubeflow | Weights & Biases |
|---|---|---|---|
| Primary function | Experiment tracking and model registry | ML pipeline orchestration and production-scale ML | Experiment tracking, team collaboration, and LLM observability |
| Setup complexity | Low | Very high | Low |
| Best team size | 1 to 10 people | 20+ (with dedicated DevOps) | 5 to 30+ |
| Pipeline orchestration | No (requires external orchestrator) | Yes (native Kubeflow Pipelines) | No |
| LLM and GenAI support | Strong (current versions) | Partial (KServe for model serving) | Best-in-class (W&B Weave) |
| On-premises deployment | Yes | Yes | Enterprise tier only |
| Cost model | Free open-source | Free open-source + substantial infrastructure costs | $50/user/month (Teams tier, annual billing) |
| Vendor lock-in risk | Low | Low to Medium | Medium to High |
| Regulated industry fit | Moderate (strong on Databricks) | Strong | Moderate (Enterprise tier with private cloud) |
| Best role in a hybrid stack | Model registry and tracking layer | Orchestration backbone | Visualization, hyperparameter sweeps, and LLM observability |
| Most common complaint (G2, 2024) | Limited UI depth; no native alerting | Steep Kubernetes learning curve; complex setup | Pricing at scale; data privacy concerns |
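The cost model row hides most of the difference because license price is only one term. A simple annual total-cost-of-ownership calculation makes the trade-off explicit. In this sketch, the $50 per user per month figure comes from the table above. The infrastructure spend, operations FTE, and loaded cost per FTE are hypothetical placeholders, so replace them with your own numbers. Training compute is left out because every option needs it.

```python
def annual_tco(
    users: int,
    license_per_user_month: float,
    infra_per_month: float,
    ops_fte: float,
    loaded_cost_per_fte: float,
) -> float:
    """Annual cost = licenses + platform infrastructure + people needed to operate it."""
    licenses = users * license_per_user_month * 12
    infrastructure = infra_per_month * 12
    operations = ops_fte * loaded_cost_per_fte
    return licenses + infrastructure + operations


if __name__ == "__main__":
    # W&B Teams tier at the table's list price, 10 users, SaaS (no platform infra or ops staff).
    print(annual_tco(10, 50.0, 0.0, 0.0, 0.0))          # 6000.0
    # Self-hosted Kubeflow: hypothetical $3,000/month cluster and 0.8 FTE at $150,000.
    print(annual_tco(10, 0.0, 3000.0, 0.8, 150000.0))   # 156000.0
```

With placeholder numbers like these, the operations term dominates. That matches the article's point that Kubeflow's cost sits in headcount rather than licensing.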

The Bottom Line on MLflow vs Kubeflow vs Weights & Biases

MLflow, Kubeflow, and W&B represent three different philosophies: reproducibility and simplicity, production-scale pipeline orchestration, and collaborative visibility with LLM observability. The selection decision is not a feature comparison exercise — it is an assessment of team maturity, infrastructure capacity, and governance requirements, in that order.

Most enterprise teams that operate machine learning at scale end up using two of these tools together by design, not one tool that does everything. That is not a failure of the platforms. It reflects the reality that no single tool spans the full lifecycle from experimentation to production to business-layer monitoring and governance.

The real risk is not picking the wrong tool. It is picking any tool without an implementation strategy, a governance model, and an organizational alignment plan wrapped around it. Choosing an MLOps platform is the beginning of the work — not the end of it.


FAQs

What are the best MLOps platforms?

The best MLOps platforms include MLflow for experiment tracking, Kubeflow for Kubernetes-native orchestration, Amazon SageMaker for AWS-integrated workflows, Azure Machine Learning for Microsoft ecosystems, and Weights & Biases for collaborative experimentation. Each platform excels in different areas: MLflow offers flexibility and open-source freedom, while managed services like SageMaker reduce infrastructure overhead. Enterprise teams often combine multiple tools to build comprehensive machine learning pipelines covering training, deployment, and monitoring. Kanerika helps organizations evaluate and implement the right MLOps platform stack for their specific infrastructure and compliance needs. Schedule a consultation today.

Which tools are used for MLOps?

MLOps tools span several categories: experiment tracking with MLflow and Weights & Biases, orchestration using Kubeflow and Apache Airflow, model serving through TensorFlow Serving and Seldon Core, and containerization and container orchestration via Docker and Kubernetes. Feature stores like Feast manage and serve model features consistently across training and inference, while monitoring tools such as Evidently AI detect model drift in production. Most enterprise ML teams combine open-source and commercial solutions to create end-to-end machine learning operations workflows. Kanerika's AI specialists can architect a tailored MLOps toolchain that integrates with your existing data infrastructure. Reach out for a technical assessment.

What is MLOps software?

MLOps software automates and streamlines the machine learning lifecycle from data preparation through model deployment and monitoring. These platforms provide capabilities for version control, experiment tracking, automated retraining, and production model management. Unlike traditional software development tools, MLOps solutions handle unique ML challenges including data versioning, model reproducibility, and performance drift detection. The software bridges the gap between data science experimentation and reliable production systems, enabling teams to deploy models faster while maintaining governance standards. Kanerika implements MLOps software solutions that accelerate your AI initiatives—connect with our team to explore options for your organization.
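To make "experiment tracking" and "reproducibility" concrete, here is a toy in-memory ledger that mimics the kind of record these tools keep for each run. It is not MLflow's or W&B's API. `ExperimentLedger`, `Run`, and their methods are hypothetical names. In the real libraries, the closest equivalents are MLflow's `mlflow.start_run()`, `mlflow.log_param()`, and `mlflow.log_metric()`, and W&B's `wandb.init(config=...)` and `wandb.log()`.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass, field


@dataclass
class Run:
    run_id: str
    params: dict
    code_version: str   # e.g. a git commit hash
    data_version: str   # e.g. a dataset snapshot tag
    metrics: dict = field(default_factory=dict)


class ExperimentLedger:
    """Toy in-memory tracker illustrating what tracking tools record per run."""

    def __init__(self) -> None:
        self.runs: list[Run] = []

    def start_run(self, params: dict, code_version: str, data_version: str) -> Run:
        # Copy params so later mutation by the caller cannot rewrite history.
        run = Run(uuid.uuid4().hex, dict(params), code_version, data_version)
        self.runs.append(run)
        return run

    def log_metric(self, run: Run, key: str, value: float) -> None:
        run.metrics[key] = value

    def best_run(self, metric: str, higher_is_better: bool = True) -> Run:
        # Only runs that actually logged the metric are compared.
        scored = [r for r in self.runs if metric in r.metrics]
        pick = max if higher_is_better else min
        return pick(scored, key=lambda r: r.metrics[metric])


if __name__ == "__main__":
    ledger = ExperimentLedger()
    r1 = ledger.start_run({"learning_rate": 0.1}, "a1b2c3d", "sales-2024-06")
    ledger.log_metric(r1, "val_auc", 0.81)
    r2 = ledger.start_run({"learning_rate": 0.01}, "a1b2c3d", "sales-2024-06")
    ledger.log_metric(r2, "val_auc", 0.86)
    print(ledger.best_run("val_auc").params)  # {'learning_rate': 0.01}
```

The code and data versions are recorded alongside the parameters because all three together are what make a run reproducible. The same code, data, and parameters should yield the same model.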

Is MLOps just DevOps?

MLOps extends DevOps principles but addresses challenges unique to machine learning systems. While DevOps focuses on code deployment and application reliability, MLOps must also manage data versioning, model training pipelines, experiment reproducibility, and continuous model monitoring for drift. ML systems behave differently from traditional applications: a model's performance can degrade as the distribution of incoming data drifts away from the training data, even when no code has changed. MLOps incorporates CI/CD practices but adds ML-specific components like feature stores, model registries, and automated retraining triggers. The discipline requires expertise spanning software engineering, data engineering, and data science. Kanerika bridges these domains to build robust MLOps frameworks. Let us assess your current ML operations maturity.
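One common way to quantify this kind of distribution drift is the population stability index (PSI). PSI compares the binned distribution of a feature in production against a training-time baseline: `PSI = sum((a_i - e_i) * ln(a_i / e_i))`, where `e_i` and `a_i` are the baseline and current proportions in bin `i`. This sketch uses equal-width bins over the baseline range. The bin count, the epsilon floor for empty bins, and the commonly quoted thresholds are conventions rather than standards. Those thresholds treat below 0.1 as stable and above 0.25 as significant drift. This is also not necessarily the method any particular monitoring tool uses by default.

```python
import math
from bisect import bisect_right


def psi(baseline: list, current: list, bins: int = 10, eps: float = 1e-6) -> float:
    """Population stability index over equal-width bins spanning the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline
    edges = [lo + width * i for i in range(1, bins)]  # interior bin edges

    def proportions(values: list) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the first or last bin.
            counts[bisect_right(edges, v)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    expected, actual = proportions(baseline), proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]   # training-time feature values
    shifted = [x + 0.5 for x in baseline]      # production values drifted upward
    print(psi(baseline, baseline))             # 0.0
    print(psi(baseline, shifted) > 0.25)       # True
```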

What is an example of MLOps?

A practical MLOps example involves an e-commerce company deploying a product recommendation model. The workflow starts with automated data pipelines pulling customer behavior data into a feature store. Data scientists experiment in MLflow, tracking hyperparameters and metrics. Once validated, the model is registered in a central model registry, which triggers a CI/CD deployment to Kubernetes via Kubeflow. In production, monitoring tools track prediction latency and detect accuracy drift. When performance drops below defined thresholds, automated retraining is triggered on fresh data. This end-to-end machine learning pipeline keeps recommendations reliable and scalable. Kanerika builds similar production-grade MLOps workflows. Talk to us about implementing automated ML pipelines for your use case.
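The "retrain when performance drops below thresholds" step can be sketched as a rolling-window check. This is a hypothetical minimal version. `RetrainingTrigger`, the window size, and the 0.8 accuracy threshold are illustrative assumptions. The sketch also assumes ground-truth outcomes arrive promptly, which is rarely true for recommendations. In a real pipeline, the returned signal would start an orchestrated retraining run rather than retrain in-process.

```python
from collections import deque


class RetrainingTrigger:
    """Signals retraining when rolling accuracy falls below a threshold."""

    def __init__(self, threshold: float, window: int = 500, min_samples: int = 100) -> None:
        self.threshold = threshold
        self.min_samples = min_samples
        self.outcomes = deque(maxlen=window)  # 1.0 = correct prediction, 0.0 = wrong

    def record(self, predicted, actual) -> bool:
        """Log one outcome; return True when retraining should be triggered."""
        self.outcomes.append(1.0 if predicted == actual else 0.0)
        if len(self.outcomes) < self.min_samples:
            return False  # not enough evidence yet
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.threshold


if __name__ == "__main__":
    trigger = RetrainingTrigger(threshold=0.8, window=10, min_samples=10)
    for _ in range(10):
        trigger.record("sku-1", "sku-1")  # ten correct predictions fill the window
    print([trigger.record("sku-1", "sku-2") for _ in range(3)])  # [False, False, True]
```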

What is the main difference between MLflow and Kubeflow?

MLflow focuses on experiment tracking, model versioning, and registry functions, making it ideal for managing the data science workflow. Kubeflow orchestrates entire machine learning pipelines on Kubernetes, handling distributed training, workflow scheduling, and model serving at scale. MLflow is largely infrastructure-agnostic and runs in almost any environment, while Kubeflow requires Kubernetes expertise and cluster management. Teams often use MLflow for experimentation and Kubeflow for production orchestration. MLflow suits smaller teams starting their MLOps journey; Kubeflow benefits organizations with existing Kubernetes infrastructure that need enterprise-scale ML operations. Kanerika helps enterprises select and integrate the right MLOps tools for their technical environment. Request a personalized comparison.

Which MLOps platform is best for regulated industries like healthcare or finance?

Regulated industries require MLOps platforms with robust audit trails, access controls, and model explainability features. Azure Machine Learning and Amazon SageMaker run on cloud infrastructure covered by enterprise compliance programs, including SOC 2 reports, HIPAA eligibility, and support for GDPR obligations (HIPAA and GDPR are regulations, not certifications, so the customer still carries part of the compliance burden). These managed platforms provide built-in model lineage tracking, data encryption, and role-based permissions essential for healthcare and financial services. Dataiku and Domino Data Lab also serve regulated sectors with governance-first architectures. Open-source tools like MLflow can work when deployed within compliant infrastructure, though they require additional security configuration. Kanerika specializes in implementing compliant MLOps architectures for regulated enterprises. Contact us to discuss your specific compliance requirements.
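One common technique behind "robust audit trails" is a hash-chained, append-only log. In such a log, each entry commits to the hash of the previous one, so any later edit to history becomes detectable. The sketch below is a hypothetical illustration using only the standard library. It is not a feature of any platform named above. On its own, it does not satisfy HIPAA, SOC 2, or any other framework, which also require access control, retention, and secure storage. Real systems would also record timestamps.

```python
from __future__ import annotations

import hashlib
import json

GENESIS_HASH = "0" * 64


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, details: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"actor": actor, "action": action, "details": dict(details), "prev_hash": prev_hash}
        record["hash"] = _digest(record)  # hash computed before the "hash" key exists
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("alice", "register_model", {"model": "credit-risk", "version": 3})
    log.append("bob", "approve_for_production", {"model": "credit-risk", "version": 3})
    print(log.verify())  # True
    log.entries[0]["details"]["version"] = 4  # tamper with history
    print(log.verify())  # False
```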

Is Kubernetes part of MLOps?

Kubernetes serves as foundational infrastructure for many MLOps implementations but is not an MLOps tool itself. It provides container orchestration that enables scalable model training, reproducible environments, and reliable model serving. Platforms like Kubeflow run natively on Kubernetes, leveraging its scheduling and resource management capabilities. Organizations use Kubernetes to deploy containerized ML workloads, manage GPU resources efficiently, and scale inference endpoints based on demand. However, smaller teams successfully implement MLOps without Kubernetes using managed cloud services or simpler deployment approaches. Kubernetes becomes essential when ML workloads require enterprise-scale orchestration. Kanerika architects Kubernetes-based MLOps solutions optimized for your infrastructure scale—schedule a technical discovery session.
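Scaling inference endpoints on demand is typically handled by the Kubernetes Horizontal Pod Autoscaler (HPA). Its documented core rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The HPA skips scaling when that ratio is within a tolerance of 1.0 (0.1 by default) and keeps the result between the configured minimum and maximum replica counts. The sketch below reproduces only that arithmetic in Python. It ignores stabilization windows, pod readiness, and multiple metrics, and the function and parameter names are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Core HPA scaling rule, simplified (no stabilization window or readiness checks)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: no scaling action
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 inference pods averaging 90 requests/second each against a 60 requests/second target.
    print(desired_replicas(4, 90.0, 60.0))  # 6
```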

Is Docker an MLOps tool?

Docker functions as an enabling technology within MLOps rather than a dedicated MLOps tool. It provides containerization that ensures consistent environments across development, testing, and production stages of machine learning workflows. Data scientists package models with their dependencies into Docker images, eliminating environment discrepancies that cause deployment failures. MLOps platforms like MLflow and Kubeflow integrate Docker to create reproducible training runs and portable model artifacts. While Docker alone does not provide experiment tracking or model monitoring, it remains essential infrastructure for production ML systems requiring environment consistency. Kanerika leverages Docker within comprehensive MLOps architectures—explore how we containerize ML workflows for reliable deployments.

Can MLflow and Kubeflow be used together in the same MLOps stack?

MLflow and Kubeflow complement each other effectively within a unified MLOps stack. Teams typically use MLflow for experiment tracking, model versioning, and artifact storage during development, then use Kubeflow Pipelines for production orchestration and distributed training at scale. MLflow models can be served through KServe (formerly KFServing), Kubeflow's model serving component. This pairing combines MLflow's registry capabilities with Kubernetes-native serving infrastructure. The integration covers the machine learning lifecycle end to end: experimentation flexibility from MLflow and production scalability from Kubeflow. Many enterprises adopt this combined approach to play to each tool's strengths while avoiding vendor lock-in. Kanerika designs integrated MLOps architectures combining best-in-class tools. Let us build your unified ML platform.

What are the main weaknesses of Kubeflow as an MLOps platform?

Kubeflow presents significant operational complexity requiring dedicated Kubernetes expertise for installation, configuration, and maintenance. Teams without strong DevOps capabilities struggle with cluster management, networking, and resource optimization. The platform lacks polished user interfaces compared to commercial MLOps tools, making adoption challenging for data scientists unfamiliar with Kubernetes concepts. Component integration varies in maturity—some features remain experimental while others lack documentation. Resource consumption runs high even for modest workloads, increasing infrastructure costs. Organizations must weigh these trade-offs against Kubeflow’s powerful orchestration capabilities and open-source flexibility. Kanerika helps enterprises navigate Kubeflow complexity or identify better-suited alternatives—request a platform evaluation consultation.

Is Weights & Biases better than MLflow for enterprise teams?

Weights & Biases offers superior collaboration features with real-time experiment dashboards, team workspaces, and polished visualization tools that enterprise teams appreciate. Its managed cloud service eliminates infrastructure maintenance overhead. MLflow provides greater flexibility as open-source software, avoiding vendor lock-in while enabling on-premises deployment for data-sensitive organizations. MLflow integrates broadly across the ML ecosystem, while Weights & Biases delivers deeper functionality within its scope. Enterprise teams prioritizing ease-of-use and collaboration often prefer Weights & Biases; those requiring deployment flexibility and cost control favor MLflow. Both tools successfully support large-scale ML operations. Kanerika evaluates your team structure and requirements to recommend optimal experiment tracking solutions—book a comparison workshop.